Adapting Formal Logic for Everyday Mathematics

Antti Valmari^a

University of Jyväskylä, Faculty of Information Technology, PO Box 35, FI-40014 University of Jyväskylä, Finland

Keywords:

High School Mathematics, Elementary University Mathematics, Higher Order Thinking Skills.

Abstract:

Although logic is considered central to mathematics and computer science, there is evidence that teaching

logic has not been a great success. We identify three issues where what is typically taught conflicts with what

is needed by those who are supposed to apply logic. First, what is taught about the notion of implication often

disagrees with human intuition. We argue that in some cases human intuition is wrong, and in some others

teaching is to blame. Second, the formal concepts of logical consequence, logical equivalence and tautology are not quite the concepts that everyday mathematicians and computer scientists need. The difference is small enough to go unnoticed but big enough to cause confusion. Third, how to deal with undefined operations such as division by zero is left informal and perhaps fuzzy. These problems also harm the development of computer tools for education. We present suggestions about how to address them in teaching.

1 INTRODUCTION

Logical skills are widely believed to be important

for mathematics and computer science; see, for instance, (Bronkhorst et al., 2020), (Hammack, 2018) and (Association for Computing Machinery (ACM) and IEEE Computer Society, 2013).

Despite this, logic has never been a great success

in computer science education. Among the 75 topics whose importance was surveyed by (Lethbridge, 2000), it is perhaps not a surprise that Specific programming languages, Data structures, Software design and patterns, and Software architecture were considered the four most important. More surprisingly, typical engineering mathematics topics such as Differential / integral calculus were near the opposite end. Logic was in the middle ground. More recent surveys have made similar findings; see (Niemelä et al., 2018) for a discussion.

The situation of logic in computer science is presented vividly in the Preface to the 2003 edition of a textbook that was originally published commercially as (Reeves and Clarke, 1990). The 2003 edition was made because of repeated demands from around the world for more copies of the book. However, it was not officially published but instead made freely available on the web, because “One is that no company, today, thinks it worth publishing . . . The publishers look around at all the courses which teach short-term skills rather than lasting knowledge and see that logic has little place, and see that a book on logic for computer science does not represent an opportunity to make monetary profits.”

^a https://orcid.org/0000-0002-5022-1624

(Mathieu-Soucy, 2016) wrote on the situation of

logic in mathematics: “This paper shows once again

that extensive knowledge of formal logic is not neces-

sary to do mathematics. However, what this research

brings is the whole idea of alertness to logical charac-

teristics, which is an interesting asset for mathematics

students. . . . This brings us to expand our reflection to

the teaching of logic: what kind of knowledge should

be taught and in what way to promote students’ un-

derstanding and diminish logical mistakes, in order to

make logic courses as efficient as possible?”

Many concepts in formal logic have been devel-

oped for theoretical studies on what can be expressed

and proven, not for actually carrying out proofs. As a

result, some of them do not serve applications well.

For instance, while 1 and < have a fixed meaning

in everyday mathematics, the formal notion of logi-

cal consequence assumes that their meanings can vary

within the limits set by the assumptions.

Therefore, “if x < 1 then x ≤ 1” is not a logical

consequence. It fails, for instance, if the meanings of

< and ≤ are swapped. Instead, x ≤ 1 is a logical con-

sequence of the two formulas x < y ↔x ≤y∧¬(x = y)

and x < 1. It is denoted by {x < y ↔x ≤y ∧¬(x = y),

x < 1} |= x ≤ 1. Indeed, formal logic has notation

for “I derive x ≤ 1 from the laws of real numbers and

Valmari, A.

Adapting Formal Logic for Everyday Mathematics.

DOI: 10.5220/0011063300003182

In Proceedings of the 14th International Conference on Computer Supported Education (CSEDU 2022) - Volume 2, pages 515-524

ISBN: 978-989-758-562-3; ISSN: 2184-5026

Copyright

c

2022 by SCITEPRESS – Science and Technology Publications, Lda. All rights reserved

515

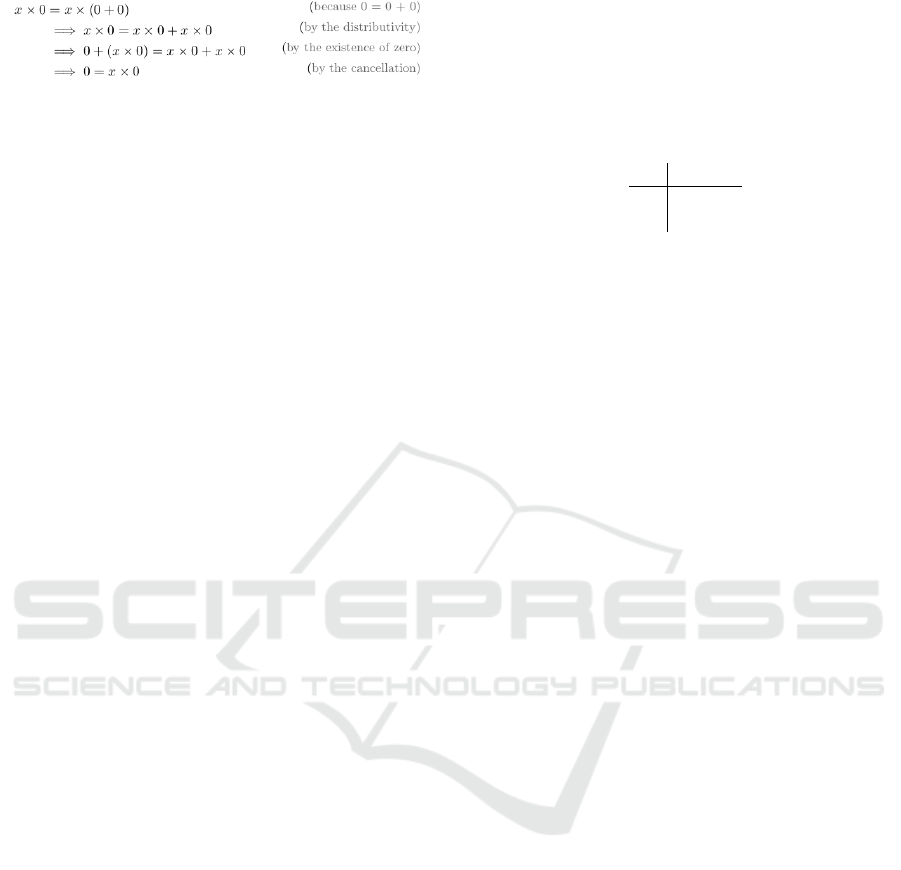

Figure 1: A proof from (Stefanowicz, 2014) page 14.

x < 1” but not for “I derive x ≤ 1 from x < 1, taking

the laws of real numbers for granted”.

So formal logic lacks practical notation for expressing reasoning chains. This has led many to use ⇒ for that purpose. Figure 1 shows an example. However, when ⇒ is taught, this meaning is almost never

taught. Instead, ⇒ is often taught to mean so-called

material implication and sometimes to mean logical

consequence. This causes confusion. The situation is

made worse by the fact that some correct aspects of

implication seem counter-intuitive to many at first.

In Section 2 we introduce material implication,

implication as an operator for expressing a reasoning

step or reasoning rule, and logical consequence. It

is a main claim of this paper that one of the reasons

why students have difficulties with logic is a failure to clarify the differences between these three concepts.

Some difficulties with material implication are

discussed in Section 3. First, Wason’s famous selec-

tion task is used to argue that original human intuition

on implication is not necessarily correct. However,

after explanation, there is no disagreement about the

correct solution. This is different from the next topic: the principle of explosion. It is so central to everyday mathematics that it cannot be avoided. It is also so counter-intuitive that there has been a long debate related to it among researchers. Finally, an example is analysed where “if . . . then . . . ” cannot be interpreted as material implication but can be interpreted as a reasoning rule, demonstrating that the difference between these concepts is important to understand.

Section 4 presents ⇒ as a reasoning operator. Its meaning is explained in a way which we believe is easier for students than contrasting ⇒ to logical consequence. Our ⇒ has proven suitable for educational software that checks reasoning (Valmari, 2021).

A different problem for both humans and educa-

tional software is that standard systems of logic lack

machinery for undefined operations, such as division

by 0. As a consequence, teaching about how to deal

with them in reasoning is scarce and mostly consists

of informal rules. This is unsatisfactory, because un-

defined operations are ubiquitous in mathematics and

computer science. Section 5 addresses this problem.

The paper ends with a brief conclusions section. Throughout the paper we make recommendations about how these difficulties could be addressed in teaching.

2 IMPLICATION AND RELATED CONCEPTS

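Let us denote the truth values with F (false) and T (true). Implication as a connective is a propositional logic connective that takes two truth values as input and outputs a truth value according to the following table, where rows give the first argument P and columns give the second argument Q:

| P \ Q | F | T |
|-------|---|---|
| F     | T | T |
| T     | F | T |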

It is also called conditional, material implication and

material conditional. It is usually denoted with →

(especially in theoretical sources) or ⇒. We use →.

The only case when material implication yields F is when its first argument yields T and the second argument yields F. That is, P → Q is logically equivalent to ¬P ∨ Q. (The fact that they are logically equivalent is sometimes called the rule of material implication.)

The connective ↔ or ⇔ is called biconditional,

material equivalence or material biconditional. We

have P ↔ Q if and only if (P → Q) ∧ (Q → P).
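To make the table and the two equivalences above concrete, here is a minimal Python sketch (our own illustration; the names `implies` and `iff` are hypothetical). It encodes → directly as the table and checks by brute force over all four value combinations that P → Q agrees with ¬P ∨ Q, and that P ↔ Q agrees with (P → Q) ∧ (Q → P).

```python
from itertools import product

def implies(p: bool, q: bool) -> bool:
    """Material implication P → Q, defined directly by its truth table."""
    table = {
        (False, False): True,
        (False, True): True,
        (True, False): False,
        (True, True): True,
    }
    return table[(p, q)]

def iff(p: bool, q: bool) -> bool:
    """Material biconditional P ↔ Q: true exactly when p and q agree."""
    return p == q

# Check both equivalences on every combination of truth values.
for p, q in product([False, True], repeat=2):
    assert implies(p, q) == ((not p) or q)                 # P → Q  ≡  ¬P ∨ Q
    assert iff(p, q) == (implies(p, q) and implies(q, p))  # P ↔ Q  ≡  (P → Q) ∧ (Q → P)

# Print the table in the same layout: rows are P, columns are Q.
for p in (False, True):
    row = " ".join("T" if implies(p, q) else "F" for q in (False, True))
    print("T" if p else "F", row)
# prints:
# F T T
# T F T
```

With only two variables there are just four value combinations, so exhaustive checking of this kind is a complete test of these equivalences.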

The symbols ⇒ and ⇔ are also often used infor-

mally in a somewhat different meaning, to express a

reasoning step that may be chained to other reason-

ing steps, like in Figure 1. On page 1680 (Bronkhorst

et al., 2020) write “If people smoke or inhale partic-

ulate matter, then it will affect their health and thus

shorten their life”, and then represent it as “(smoking

∨ inhaling particulate matter) ⇒unhealthy ⇒shorter

life”. When solving a pair of equations, it is handy to write 2x + 2y = 8 ∧ 3x − 2y = 7 ⇒ 5x = 15 ⇔ x = 3. These symbols are also often used to express rules that justify such steps, such as x < y ⇔ x ≤ y ∧ x ≠ y.

In mathematics, “if x < 1 then x ≤1” and “if x ≤1

then x < 1” may be interpreted as material implica-

tions x < 1 → x ≤ 1 and x ≤ 1 → x < 1. The former

yields T for all values of x, and the latter yields F

when x = 1 and T for all other values of x. However,

it is often more natural to interpret them as a correct

reasoning rule x < 1 ⇒ x ≤ 1 and an incorrect rule

x ≤ 1 ⇒ x < 1. The former is correct, because x ≤ 1

is a mathematical consequence of x < 1. The latter is

incorrect, because x < 1 is not a mathematical consequence of x ≤ 1, since x = 1 is a counter-example.
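The difference between the two readings can be sketched in Python under a stated assumption: a finite sample of rational numbers stands in for the real numbers (the names `SAMPLE` and `counter_examples` are hypothetical). Material implication is evaluated separately for each x, whereas a reasoning rule is judged once, by searching for a counter-example.

```python
from fractions import Fraction

def implies(p: bool, q: bool) -> bool:
    """Material implication P → Q."""
    return (not p) or q

# Finite sample of rationals from -2 to 2 in steps of 1/4. It stands in for
# "all real numbers" (an assumption): it can reveal counter-examples but
# cannot prove that none exist.
SAMPLE = [Fraction(n, 4) for n in range(-8, 9)]

def counter_examples(premise, conclusion, values=SAMPLE):
    """Values allowed by the context where the premise holds but the conclusion fails."""
    return [x for x in values if premise(x) and not conclusion(x)]

# Material implication: one truth value per individual x.
print([implies(x <= 1, x < 1) for x in (Fraction(0), Fraction(1), Fraction(2))])
# [True, False, True]

# Reasoning rules: judged once, over all x in the context.
print(counter_examples(lambda x: x < 1, lambda x: x <= 1))   # []
print(counter_examples(lambda x: x <= 1, lambda x: x < 1))   # [Fraction(1, 1)]
```

The search exhibits the counter-example x = 1 to x ≤ 1 ⇒ x < 1. Finding no counter-example to x < 1 ⇒ x ≤ 1 in the sample is only evidence; its correctness follows from the laws of real numbers.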

In everyday mathematics, the difference between

material implication and implication as a reason-

ing operator is often ignored or confused. For in-

stance, (Hammack, 2018) writes on page 44: “In

mathematics, whenever we encounter the construc-

tion “If P, then Q,” it means exactly what the truth

table for ⇒ expresses.” This clearly refers to material

implication. On the other hand, on page 57 we have

““If P, then Q,” is a statement. This statement is true

if it’s impossible for P to be true while Q is false. It is


false if there is at least one instance in which P is true

but Q is false.” This is definitely not material impli-

cation. It resembles much more a reasoning rule.

Hammack’s idea becomes understandable on page

56: “In mathematics, whenever P(x) and Q(x) are

open sentences concerning elements x in some set X

(depending on context), an expression of form P(x) ⇒ Q(x) is understood to be the statement ∀x ∈ X, P(x) ⇒ Q(x)”. That is, he always treats ⇒ in a reasoning-operator-like fashion, but for closed formulas it is equivalent to material implication, and he uses this

fact to explain his approach. Around page 44, he tried

to restrict P and Q to closed formulas, but failed. (A

formula is closed if and only if every occurrence of

every variable in it is quantified by ∀ or ∃. Ham-

mack’s “statement” is a slightly different notion, but

this is not essential for the present discussion.)

We recommend using → for material implication

and ⇒ as a reasoning operator. In this notation (and :

instead of ,), Hammack’s idea is that P(x) ⇒ Q(x) is

correct if and only if ∀x ∈ X : P(x) → Q(x) holds.

This idea works well in the basic case. Indeed, we

will show in Section 3.4 that it resolves a much de-

bated paradox. On the other hand, it runs into trouble

in some other cases. For instance, it is true that if x is non-negative, then if $x^2 \ge 1$ then $x \ge 1$. It might seem natural to express this as $x \ge 0 \Rightarrow (x^2 \ge 1 \Rightarrow x \ge 1)$. However, transforming it as above yields $\forall x : x \ge 0 \rightarrow (\forall x : x^2 \ge 1 \rightarrow x \ge 1)$. Because $(-1)^2 \ge 1$ but not $-1 \ge 1$, the closed formula $\forall x : x^2 \ge 1 \rightarrow x \ge 1$ yields F, making the translated formula false as a whole.
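The following Python sketch (illustrative only; it samples multiples of 1/2 in [−3, 3] instead of all real numbers, and its variable names are hypothetical) contrasts the intended reading, with a single ∀ over the whole statement, with the translation in which the inner ⇒ receives its own ∀.

```python
from fractions import Fraction

def implies(p: bool, q: bool) -> bool:
    """Material implication P → Q."""
    return (not p) or q

# Finite sample standing in for the real numbers (an assumption of this sketch).
SAMPLE = [Fraction(n, 2) for n in range(-6, 7)]   # -3, -5/2, ..., 3

# Intended meaning: ∀x : x ≥ 0 → (x² ≥ 1 → x ≥ 1), one ∀ over everything.
intended = all(implies(x >= 0, implies(x * x >= 1, x >= 1)) for x in SAMPLE)

# Mechanical translation: the inner rule gets its own ∀ binding a fresh variable.
inner = all(implies(y * y >= 1, y >= 1) for y in SAMPLE)   # fails at y = -1
translated = all(implies(x >= 0, inner) for x in SAMPLE)

print(intended, inner, translated)   # True False False
```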

Another difficulty also remains. We will address it in Section 5. It can be illustrated with the following reasoning. Its conclusion is unacceptable, although each step matches the interpretation of ⇒ discussed above: On real numbers, x > y if and only if not x ≤ y. Furthermore, if $\frac{1}{x} > 0$, then $x > 0$. By contraposition, if $x \le 0$, then $\frac{1}{x} \le 0$. We can similarly derive that if $x \ge 0$, then $\frac{1}{x} \ge 0$. Because $0 \le 0$, letting $x = 0$ we get both $\frac{1}{0} \le 0$ and $\frac{1}{0} \ge 0$. So $\frac{1}{0} = 0$.

Yet another potential problem is confusing ⇒

with the notion of logical consequence in formal

logic. Roughly speaking, the latter means a conse-

quence that holds for every possible interpretation of

all proposition, constant, function and relation sym-

bols other than =, for all value combinations of vari-

ables. The precise definition is too complicated to be

shown here, because it needs the notions of signature,

domain of discourse, structure, assignment, interpretation and model. Two (sets of) formulas are logically equivalent if and only if each is a logical consequence of the other.

The essential difference is that in everyday mathematics, constant symbols (for instance, 35 and π), function symbols (for instance, + and √) and relation symbols (for instance, ≤) are assumed to have

fixed meanings, while in formal logic their meanings

may vary (with the exception that many authors fix

the meaning of = to its familiar meaning in mathe-

matics). As was explained in Section 1, x ≤ 1 is not

a logical consequence of x < 1, because ≤ and < can

be given meanings and x a value such that x < 1 holds

but x ≤ 1 does not (for instance, swap their standard

meanings and choose x = 1).
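The claim that x ≤ 1 is not a logical consequence of x < 1 can be checked mechanically on a tiny domain. In this Python sketch (our own construction; the two-element domain {0, 1} and all names are assumptions made so that every interpretation can be enumerated), the symbols <, ≤ and the constant 1 are reinterpreted in every possible way. A counter-model found this way does show non-consequence; finding none on one small domain is only evidence, not a proof, of logical consequence.

```python
from itertools import product

# Tiny domain, chosen only so that all interpretations can be listed (an assumption).
DOMAIN = (0, 1)
PAIRS = [(a, b) for a in DOMAIN for b in DOMAIN]

def relations():
    """Every binary relation on DOMAIN, as a set of pairs (2**4 = 16 of them)."""
    for bits in product([False, True], repeat=len(PAIRS)):
        yield {pair for pair, bit in zip(PAIRS, bits) if bit}

def axiom(lt, le):
    """x < y ↔ x ≤ y ∧ ¬(x = y), for all x and y in DOMAIN."""
    return all(((x, y) in lt) == ((x, y) in le and x != y) for x, y in PAIRS)

def counter_models(use_axiom):
    """Interpretations (lt, le, one) of <, ≤ and the constant symbol 1, together
    with a value x, such that all premises hold but x ≤ 1 fails."""
    found = []
    for lt, le in product(list(relations()), repeat=2):
        if use_axiom and not axiom(lt, le):
            continue
        for one, x in product(DOMAIN, repeat=2):
            if (x, one) in lt and (x, one) not in le:
                found.append((lt, le, one, x))
    return found

print(len(counter_models(use_axiom=False)) > 0)   # True
print(counter_models(use_axiom=True))             # []
```

The first search finds, among others, the swapped interpretation with x = 1 described above; once the defining formula of < is added as a premise, no counter-model remains on this domain.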

It seems that “logical consequence”, “logically

equivalent” and “tautology” are typically taught via

truth tables. This matches their meaning in formal

propositional logic, but leaves it open how to use them

elsewhere. Because the idea of varying the meaning

of 0, + and ≤ is strange to students, and because no

other phrase has been taught, this runs the risk of stu-

dents thinking that, for instance, x ≤1 is a logical con-

sequence of x < 1. We recommend teaching that it is

a mathematical consequence. When the meaning of

0, +, ≤ and so on may vary, we have “logical conse-

quence”, “logically equivalent” and “tautology”; and

when their meaning is fixed to the standard meaning,

we suggest using mathematical consequence, mathe-

matically equivalent and mathematical fact.

Logical consequence is denoted with Γ ⊨ P, where Γ is a set of formulas and P is a formula. For the time being, we define P ⇒ Q as Γ ∪ ϒ ∪ {P} ⊨ Q,

where Γ specifies the properties of the mathematical

system in question (such as real numbers) and ad-

ditional symbols (such as < in terms of ≤), and ϒ

lists the local assumptions (such as the specification

of a case in a proof by cases). So ⇒ and ⇔ denote

mathematical consequence and mathematical equiva-

lence. “Reasoning step / rule” refer to applications of

⇒ and ⇔. Formally, step / rule are the same, but typi-

cally “step” is used about reasoning, and “rule” about

what justifies it. We will fine-tune the definition and

present it in a more student-friendly form in Section 4.

In formal logic, Γ ⊢ P denotes that P can be proven from Γ. Modus ponens is the rule that {P, P → Q} ⊢ Q. The deduction theorem says that if Γ ∪ {P} ⊢ Q, then Γ ⊢ P → Q. Both hold in standard systems of logic. Together they constitute a tight link between → and ⇒, partially explaining why → and ⇒ are often confused with each other. Modus ponens will remain appreciated throughout this paper, but we will find a problem with the deduction theorem in Section 5.
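Provability (⊢) is not modelled here; instead, the following Python sketch checks the corresponding semantic statements with ⊨ for propositional formulas by enumerating truth assignments (the representation and names are our own, hypothetical choices). It confirms {P, P → Q} ⊨ Q and shows, on sample premise sets, that Γ ∪ {P} ⊨ R and Γ ⊨ P → R agree.

```python
from itertools import product

# Formulas are functions from an assignment (dict of variable name -> bool) to bool.
def var(name):
    return lambda v: v[name]

def implies(p, q):
    return lambda v: (not p(v)) or q(v)

def entails(premises, conclusion, names):
    """Γ ⊨ φ: every assignment making all of Γ true also makes φ true."""
    for values in product([False, True], repeat=len(names)):
        v = dict(zip(names, values))
        if all(g(v) for g in premises) and not conclusion(v):
            return False
    return True

P, Q, R = var("P"), var("Q"), var("R")
names = ["P", "Q", "R"]

# Semantic counterpart of modus ponens: {P, P → Q} ⊨ Q.
print(entails([P, implies(P, Q)], Q, names))   # True

# Semantic counterpart of the deduction theorem on two examples:
# Γ ∪ {P} ⊨ R and Γ ⊨ P → R give the same answer.
gamma = [implies(P, Q), implies(Q, R)]
print(entails(gamma + [P], R, names), entails(gamma, implies(P, R), names))   # True True
print(entails([P], Q, names), entails([], implies(P, Q), names))              # False False
```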

As evidence of the fact that ⇒ may be confused with both → and ⊨, we cite the Wikipedia page

on Logical equivalence: “The logical equivalence of

p and q is sometimes expressed as p ≡ q,” . . . “or

p ⇐⇒ q, depending on the notation being used.

However, these symbols are also used for material


equivalence, so proper interpretation would depend

on the context. Logical equivalence is different from

material equivalence, although the two concepts are

intrinsically related.”

3 PROBLEMS WITH IMPLICATION

3.1 Wason’s Selection Task

Wason’s selection task (Wason, 1968) is a fa-

mous psychological experiment on material implica-

tion. The literature discussing it is extensive. We

used (Ragni et al., 2017) as our source.

The original experiment was of the following

kind. There are four cards on the table. The partic-

ipants are told that each card has a letter on one side

and a number on the opposite side. The visible sides

of the cards on the table show D, K, 3 and 7. The par-

ticipants are asked to choose as few cards as possible

so that by looking at their hidden sides one can check

whether or not the four cards obey the following rule:

If a card has D on one side, then it has 3 on the

other side.

The only way to violate this rule is to have D on the

letter side and some other number than 3 on the num-

ber side. So the correct answer is D and 7.
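The card analysis can be replayed in a few lines of Python. This sketch makes a simplifying assumption: hidden letters are drawn from {D, K} and hidden numbers from {3, 7}. That suffices here, because any number other than 3 behaves like 7 and any letter other than D behaves like K. A card must be turned exactly when some possible hidden side would make the material implication false.

```python
# Visible faces of the four cards.
CARDS = ["D", "K", 3, 7]
# Possible hidden sides (an assumption of this sketch, see above).
LETTERS = ["D", "K"]
NUMBERS = [3, 7]

def rule_holds(letter: str, number: int) -> bool:
    """Material implication: (letter is D) → (number is 3)."""
    return letter != "D" or number == 3

def must_turn(face) -> bool:
    """Turn a card iff some possible hidden side would violate the rule."""
    if isinstance(face, str):   # letter visible, number hidden
        return any(not rule_holds(face, n) for n in NUMBERS)
    # number visible, letter hidden
    return any(not rule_holds(letter, face) for letter in LETTERS)

print([face for face in CARDS if must_turn(face)])   # ['D', 7]
```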

Please notice that in this task, implication occurs

in a bare-boned form. The task does not depend on

whether implication is thought of as a connective or as

a reasoning operator. Nor does it involve any of the issues that arise where material implication is an inappropriate formalization of implication in natural language.

In the original experiment, fewer than 10 % of

the participants chose the right cards. In the meta-

analysis by (Ragni et al., 2017), 19 % solved a similar

task correctly; 36 % chose only the equivalent of D,

39 % chose the equivalent of D and 3, and 5 % chose

the equivalent of D, 3 and 7.

However, the results improve dramatically if the

abstract letters and numbers are replaced by concrete

familiar items. For instance, one side of each card

could contain the name of a drink (beer or orange

juice), the opposite side the age of a person (16 or

25), and the rule could be

If a person is drinking beer, then the person

must be over 19 years of age.

Now in the meta-analysis, 64 % chose the right cards,

13 % chose only the equivalent of beer, 19 % chose

the equivalent of beer and 25, and 4 % chose the

equivalent of beer, 16 and 25.

(Ragni et al., 2017) have recognized 15 distinct

theories that aim at explaining this or related results.

We believe that the result can be explained by the idea

of two modes of human thinking: a fast instinctive

mode and a slower more rational mode (Kahneman,

2011). Apparently the fast mode of many people lacks

correct treatment of even the bare-boned material implication in its abstract form, while it has rules for concrete applications in familiar situations such as age and alcohol.

On the other hand, the slower mode seems to mas-

ter the bare-boned version. In the words of (van

Benthem, 2008): “A psychologist, not very well-

disposed toward logic, once confessed to me that de-

spite all problems in short-term inferences like the

Wason Card Task, there was also the undeniable fact

that he had never met an experimental subject who

did not understand the logical solution when it was

explained to him, and then agreed that it was correct.”

We suggest telling students about results on Wa-

son’s selection task or something similar, to make

them realize that their original intuition may lead

them astray. This may encourage them to learn, un-

derstand, trust and use the laws of propositional logic

instead.

3.2 The Debate on Carroll’s Paradox

In (Carroll, 1894), the following question was pre-

sented in this form and in the form of a story:

There are two Propositions, A and B. It is

given that

1. If C is true, then, if A is true, B is not true;

2. If A is true, B is true.

The question is, can C be true?

In the story there was a barbershop with three barbers,

Allen, Brown and Carr. At least one of them is not

out, giving rise to (1), where A denotes that Allen is

out, and similarly with B and C. Allen is very shy and

does not go out without Brown, hence (2).

The barbershop paradox is not a problem for mod-

ern propositional logic. It is possible that C is true and

A is false (and B may be either). So C can be true.

It is, however, worth noticing that it definitely was

a problem in its time. In the story, Uncle Joe reasoned

that if C is true, then we have simultaneously “if A

is true, B is not true” and “if A is true, B is true”,

which is a contradiction, because the same premise

A leads simultaneously to the conflicting conclusions

B and not-B. Since this contradiction was obtained

by assuming C, the principle of reductio ad absurdum

yields that C cannot be true. (Carroll, 1894) men-

tioned that the dispute had already lasted more than


a year, with conflicting opinions by several practised

logicians. Indeed, John Cook Wilson, the Wykeham

Professor in Logic of the University of Oxford, held

Uncle Joe’s view (Moktefi, 2007). The dispute con-

tinued for more than a decade (Jones, 1905).

Uncle Joe was wrong in claiming that “if A is true,

B is not true” and “if A is true, B is true” cannot hold

simultaneously. Instead, that happening means sim-

ply that A is not true. However, this forces us to ac-

cept that a false claim may simultaneously imply a

claim and its negation, because A, B and not-B pro-

vide an example. We will return to this observation in

the next subsection.

Because material implication was not easy for

professional logicians, we should not expect it to be

easy for students either.

The barbershop paradox can also be used to illus-

trate the power of formal manipulation. The premises

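(1) and (2) can be represented as (C → (A → ¬B)) ∧ (A → B). Replacing each P → Q by ¬P ∨ Q results in (¬C ∨ ¬A ∨ ¬B) ∧ (¬A ∨ B). Factoring out ¬A gives ¬A ∨ ((¬C ∨ ¬B) ∧ B), and since (¬C ∨ ¬B) ∧ B is equivalent to B ∧ ¬C, this simplifies to ¬A ∨ (B ∧ ¬C). So either Allen is in (and Brown and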

Carr may be anywhere), or Brown is out and Carr is

in (and Allen may be anywhere).
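The equivalence of the premises with ¬A ∨ (B ∧ ¬C), and the fact that C can be true, can be confirmed by a truth table. A minimal Python sketch (names hypothetical; A, B and C mean that Allen, Brown and Carr, respectively, is out):

```python
from itertools import product

def implies(p: bool, q: bool) -> bool:
    """Material implication P → Q."""
    return (not p) or q

allowed = []
for a, b, c in product([False, True], repeat=3):
    premises = implies(c, implies(a, not b)) and implies(a, b)   # (1) ∧ (2)
    simplified = (not a) or (b and not c)                        # ¬A ∨ (B ∧ ¬C)
    assert premises == simplified   # the two forms agree on every row
    if premises:
        allowed.append((a, b, c))

print(len(allowed))                          # 5 of the 8 combinations remain
print(any(c for (a, b, c) in allowed))       # True: C can be true
```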

3.3 Principle of Explosion

Material implication is truth-functional, that is, its

result is a truth value which depends on nothing

else than the incoming truth values. This sometimes

clashes with the feeling by many people that for “if

P then Q” to be true, Q must somehow depend on

P. For instance, “if it is not sunny tomorrow, I will

stay at home” sounds sensible, while “if I do not stay

at home tomorrow, it will be sunny” sounds odd, be-

cause it seems to suggest that I could cause sunshine

by leaving home. However, as material implications,

they are logically equivalent.

This has led to a vast body of research; see (Egré and Rott, 2021) for an up-to-date survey. Many approaches have rejected truth-functionality at the cost of making the logic more complicated. However, following that path would take us far from everyday mathematical practice.

Instead, we keep material implication, and suggest emphasizing to students that it does not capture

every aspect that our intuitive notion of implication

may cover. In particular, material implication does

not pay attention to what is the cause and what is

the effect. It only deals with what combinations of

truth values are possible and what are impossible. If

P → Q, then P true and Q false is impossible, and

the remaining three combinations are possible (unless

something else makes them impossible). That is all.

It is also worth emphasizing to students that within

its scope, material implication is reliable, while intu-

ition tends to give wrong results every now and then.

The students may also be told that there have been at-

tempts to find notions of implication that match intu-

ition better, but the results have not been good enough

to replace material implication.

A related problem with intuition arises if P can-

not be true or Q cannot be false, because then P →Q

yields T, although there is not necessarily any sensi-

ble connection between P and Q. For instance, the

following are true:

• If Earth is flat, then nobody likes coffee.

• If Earth is flat, then every natural number can be

expressed as a sum of three squares of natural

numbers.

They are true because the only way to make P → Q

yield F is to make P true and Q false, but “Earth is

flat” cannot be made true. On the other hand, “nobody

likes coffee” and “. . . sum of three squares . . . ” do

not depend on “Earth is flat”. This makes the above

examples seem counter-intuitive.

Indeed, in standard logic, any false claim implies

just anything. This is called the principle of explo-

sion. It underlies proof by contradiction and proof by

contrapositive, which are central in everyday mathe-

matics. In the experience of the present author, this

principle is difficult for many students. Apparently it

was difficult also for John Cook Wilson. The barber-

shop paradox illustrates that it is necessary to accept

that a false claim may simultaneously imply a claim

and its negation. Although accepting it does not nec-

essarily mean accepting the principle of explosion in

its full generality, it is at least a long step in that di-

rection.

Now assume that a person who is held in a secure

prison says “if it rains tomorrow, I will stay in prison”.

The sentence is true, but sounds ironic, because the

prisoner cannot leave the prison, no matter what the

weather is. This is an example of material implica-

tion that is true not because of a sensible connection

between P and Q, but because Q cannot be made false.

Indeed, in standard logic, any true claim is implied by

just anything. This principle can be thought of as a

dual to the principle of explosion.

We recommend teaching students to rely on the idea that a claim is true if and only if it has no counter-examples. Similarly, a reasoning rule is incorrect if and only if it has a counter-example. A counter-example consists of a combination of values of variables that is allowed by the assumptions made in the context where the claim or reasoning rule is stated, and makes the claim false or the rule incorrect. For instance, in the case of real numbers, $x = \frac{1}{2}$ and $y = 0$ is a counter-example to x > y → x ≥ y + 1. However, in the case of integers, x cannot be $\frac{1}{2}$. Indeed, x > y → x ≥ y + 1 is true on integers.

If P cannot be true, then we cannot have P true and

Q false. So P →Q has no counter-examples. That is,

the principle of explosion agrees with the idea that a

claim is true if and only if it has no counter-examples.
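Counter-example search for x > y → x ≥ y + 1 can be sketched as follows, assuming that small finite samples stand in for the integers and for the rationals (the names are hypothetical). The search finds x = 1/2, y = 0 among multiples of 1/2 and nothing among the sampled integers; the latter is consistent with, but does not by itself prove, the claim on all integers.

```python
from fractions import Fraction
from itertools import product

def counter_examples(values):
    """Pairs (x, y) from `values` with x > y but not x ≥ y + 1."""
    return [(x, y) for x, y in product(values, repeat=2) if x > y and not x >= y + 1]

integers = range(-5, 6)
halves = [Fraction(n, 2) for n in range(-10, 11)]   # multiples of 1/2 in [-5, 5]

print(counter_examples(integers))                                 # []
print((Fraction(1, 2), Fraction(0)) in counter_examples(halves))  # True
```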

3.4 Confusion with Reasoning Operator

This example has been modified from the Wikipedia

page on Paradoxes of material implication. The fol-

lowing is assumed to hold:

3. If John is in London then he is in England.

If “if . . . then . . . ” is interpreted as material impli-

cation, then the following can be proven, although it

seems plain wrong:

4. If John is in London then he is in France, or

if he is in Paris then he is in England.

To prove it, let (5) and (6) denote “if John is in London

then he is in France” and “if he is in Paris then he is

in England”, respectively. Now (4) can be rewritten

as ((5) or (6)). If “John is in London” is true, then

by (3) also “he is in England” is true. Then by the

dual to the principle of explosion, also (6) is true. If

“John is in London” is not true, then by the principle

of explosion, (5) is true. So no matter where John is,

at least one of (5) and (6) is true. Therefore, ((5) or

(6)) is true. Q.E.D.

A perhaps even more striking example is “if John

is in London then he is in Paris, or if he is in Paris then

he is in Brussels.” Namely, if John is in Paris then the

first implication holds, and if he is not in Paris then

the second implication holds. Indeed, no matter what

P, Q and R are, (P → Q) ∨ (Q → R) yields T.
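A quick exhaustive check of this claim in Python, together with the John example under a hypothetical toy list of places (the list is our own assumption):

```python
from itertools import product

def implies(p: bool, q: bool) -> bool:
    """Material implication P → Q."""
    return (not p) or q

# (P → Q) ∨ (Q → R) yields T under every assignment.
tautology = all(implies(p, q) or implies(q, r)
                for p, q, r in product([False, True], repeat=3))
print(tautology)   # True

# The same holds place by place for "in London → in Paris, or in Paris → in Brussels".
for place in ["London", "Paris", "Brussels", "Berlin"]:
    in_london, in_paris, in_brussels = (place == "London", place == "Paris",
                                        place == "Brussels")
    assert implies(in_london, in_paris) or implies(in_paris, in_brussels)
```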

Hammack’s idea in Section 2 chases this paradox

away. That is, each material implication P → Q gives

rise to the reasoning rule P ⇒ Q. The rule P ⇒ Q

is correct if and only if P → Q yields T for all inter-

pretations of P and Q that are possible in the context.

In other words, P ⇒ Q is incorrect if and only if the

context allows at least one interpretation that makes

P true and Q false. This is similar to mathematics,

where “if x ≥ 1 then x > 1” is deemed incorrect by

the fact that it fails when x = 1, although it works

okay for all other values of x.

When understood as a reasoning rule, (3) is cor-

rect by assumption. However, (5) and (6) are incor-

rect, because the real Europe is a counter-example

that is allowed by (3). Furthermore, (4) must be in-

terpreted as saying that (5) is a correct reasoning rule

or (6) is a correct reasoning rule. Under this interpre-

tation, (4) is indeed wrong, matching intuition.

More formally, let x ∈ L denote that John is in

London, x ∈ E that he is in England, and so on. The

reasoning rule interpretation of (4) yields (∀x : (x ∈ L → x ∈ F)) ∨ (∀x : (x ∈ P → x ∈ E)), while the material implication interpretation and the proof of (4) only provide ∀x : ((x ∈ L → x ∈ F) ∨ (x ∈ P → x ∈ E)).
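The two readings of (4) differ only in where ∀ is placed, which a small Python model can show. The list of places and the predicate names are hypothetical; the model merely has to satisfy (3).

```python
def implies(p: bool, q: bool) -> bool:
    """Material implication P → Q."""
    return (not p) or q

# Toy model of where John can be: (city, country). An assumption for illustration.
PLACES = [("London", "England"), ("Manchester", "England"),
          ("Paris", "France"), ("Lyon", "France"), ("Brussels", "Belgium")]

def in_L(x):  # John is in London
    return x[0] == "London"

def in_P(x):  # John is in Paris
    return x[0] == "Paris"

def in_E(x):  # John is in England
    return x[1] == "England"

def in_F(x):  # John is in France
    return x[1] == "France"

# Assumption (3) is a correct reasoning rule in this model.
assert all(implies(in_L(x), in_E(x)) for x in PLACES)

# Reasoning-rule reading of (4): (∀x : L → F) ∨ (∀x : P → E)
rule_reading = (all(implies(in_L(x), in_F(x)) for x in PLACES)
                or all(implies(in_P(x), in_E(x)) for x in PLACES))

# What the proof of (4) provides: ∀x : (L → F) ∨ (P → E)
proved = all(implies(in_L(x), in_F(x)) or implies(in_P(x), in_E(x)) for x in PLACES)

print(rule_reading, proved)   # False True
```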

This resolution of the paradox suggests that peo-

ple do not think of each “if John is in x then he is in y”

as material implication but as a reasoning rule. It resembles the strict implication in Section 3.1 of (Egré and Rott, 2021), but aims at less deviation from standard logic.

Unfortunately, we saw in Section 2 that this idea does not always work. Therefore, we recommend teaching that “if . . . then . . . ” may translate to material

implication or to a reasoning rule, and the student has

to choose the right one. If neither one matches intu-

ition, then the student should perhaps ask for clarifi-

cation on the intended meaning of the sentence. If it

translates to a reasoning rule but is part of a bigger

formula like ((5) or (6)) above, then ∀ may have to be

added as was illustrated above. It is a task of the stu-

dent to choose how many ∀ are added and where they

are added to capture the intended meaning. No simple

general rule can be given, because different choices

are needed by (4) and by the “if x is non-negative, then if $x^2 \ge 1$ then $x \ge 1$” example in Section 2.

4 REASONING OPERATORS

In this section we describe the meaning of ⇒, ⇔ and

⇐ as reasoning operators. The ideas have been devel-

oped from (Valmari and Hella, 2017).

A reasoning chain is of the form $P_0 \sim_1 P_1 \sim_2 \cdots \sim_n P_n$, where $n \ge 1$, each $P_i$ is a formula and each $\sim_i$ is from the set {⇒, ⇔, ⇐}. The same reasoning chain may not contain both ⇒ and ⇐. Each $P_{i-1} \sim_i P_i$ is a reasoning step.
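The syntactic conditions on a reasoning chain can be written down as a small Python check. This is a hypothetical helper; formulas are treated as opaque strings, so only the shape of the chain is tested, not its correctness.

```python
ALLOWED_OPS = {"⇒", "⇔", "⇐"}

def is_well_formed_chain(formulas, ops):
    """P0 ~1 P1 ~2 ... ~n Pn with n ≥ 1, each ~i in {⇒, ⇔, ⇐},
    and not both ⇒ and ⇐ in the same chain."""
    n = len(ops)
    return (n >= 1
            and len(formulas) == n + 1
            and all(op in ALLOWED_OPS for op in ops)
            and not ("⇒" in ops and "⇐" in ops))

print(is_well_formed_chain(["2x + 2y = 8 ∧ 3x − 2y = 7", "5x = 15", "x = 3"],
                           ["⇒", "⇔"]))                       # True
print(is_well_formed_chain(["P", "Q", "R"], ["⇒", "⇐"]))      # False
```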

Each reasoning chain occurs in a context. It speci-

fies those properties of proposition, constant, variable,

function and relation symbols that are allowed to be

used in writing formulas and reasoning. To write formulas one needs to know such things as: + denotes a function from a pair of real numbers to a real number; variable x contains a real number; and variable $\vec{v}$ contains a 3-dimensional vector. To reason one needs to

know that if Allen is out then Brown is out as well;

and for every x we have x + 0 = x. The specification

need not be exhaustive. For instance, the barbershop

paradox allows 5 and rules out only 3 combinations of

locations of persons. As another example, the group

axioms have infinitely many different models.

The context is not a concrete piece of text but an


abstract entity. Properties that the reader may be expected to already know need not be mentioned explicitly. For instance, if it is said that the domain of

discourse is real numbers, then the familiar properties

of 0, π, +, cos and so on are automatically available.

Each proposition, constant, function and relation symbol has the same meaning in every formula to which the same context applies, and ∀ and ∃ cannot be applied to them. The values of variables are not specified by the context. They may vary between formulas, and within a formula as determined by ∀ or ∃. Information may be temporarily added to the context by such phrases as: “to derive a contradiction assume that p is not a prime number”, “consider first the case that p is even” and “so there is an integer k ≥ 2 such that p = 2k”.

The difference between reasoning steps and material implication is easier to keep in mind if we think of a reasoning step not as returning a truth value, but as being correct or incorrect. A reasoning step is correct if and only if it has no counter-examples. A counter-example to P ⇒ Q is any combination of variable values that is allowed by the context such that P yields T but Q does not yield T. The step P ⇐ Q is correct if and only if Q ⇒ P is correct. The step P ⇔ Q is correct if and only if both P ⇒ Q and P ⇐ Q are correct. That is, for each value combination of variables that is allowed by the context, either both P and Q yield T, or neither of them yields T. A reasoning chain is correct if and only if every step in it is correct.
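The counter-example criterion can be turned into a mechanical check when the context is finite. The Python sketch below is ours, not the paper's: it assumes a hypothetical context in which the only variable x ranges over the integers −10 to 10, represents each formula as a function returning True when it yields T, and reports the value combinations that refute a step. For an infinite context such as the real numbers, such a search can only find counter-examples, never prove that none exist.

```python
from typing import Callable, Iterable, List

# A formula in the single variable x: returns True when it yields T.
Formula = Callable[[int], bool]

def counter_examples(p: Formula, q: Formula, op: str,
                     context: Iterable[int]) -> List[int]:
    """Values of x allowed by the (finite) context that refute the step P op Q."""
    bad = []
    for x in context:
        forward_ok = not (p(x) and not q(x))   # P ⇒ Q fails iff P yields T and Q does not
        backward_ok = not (q(x) and not p(x))  # P ⇐ Q is the same as Q ⇒ P
        ok = {"=>": forward_ok, "<=": backward_ok, "<=>": forward_ok and backward_ok}[op]
        if not ok:
            bad.append(x)
    return bad

def step_correct(p: Formula, q: Formula, op: str, context: Iterable[int]) -> bool:
    # A reasoning step is correct if and only if it has no counter-examples.
    return not counter_examples(p, q, op, context)

# Hypothetical finite context: x ranges over the integers -10..10.
CONTEXT = range(-10, 11)

print(step_correct(lambda x: x >= 1, lambda x: x * x >= 1, "=>", CONTEXT))      # True
print(counter_examples(lambda x: x * x >= 1, lambda x: x >= 1, "=>", CONTEXT))
print(step_correct(lambda x: x * x >= 1, lambda x: abs(x) >= 1, "<=>", CONTEXT))  # True
```

The second call reports every x from −10 to −1, because for those values x² ≥ 1 yields T while x ≥ 1 does not. A reasoning chain would be checked by applying `step_correct` to each of its steps.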

A reasoning rule is a reasoning step. Typically “rule” is used for steps whose instantiations (for example, 2n + 1 in place of x) are intended for later use.

By the above definition, P ⇒ Q ⇒ R is correct if and only if both P ⇒ Q and Q ⇒ R are correct, and similarly with P ⇒ Q ⇔ R, and so on. Figure 1 illustrates that this is how many people use ⇒. Because reasoning operators occur between, not in, formulas, P ⇒ (Q ⇒ R) is a syntax error and thus means nothing. This is analogous to common practice with relation symbols in mathematics, where, for instance, 0 ≤ x < 1 means 0 ≤ x ∧ x < 1 and x ∈ A ⊆ B means x ∈ A ∧ A ⊆ B; and 0 ≤ (x < 1) means nothing. It saves us from having to decide whether ¬(x ≥ 0 ⇒ x ≥ 1) means 0 ≤ x < 1 or that x ≥ 0 ⇒ x ≥ 1 is an incorrect reasoning step.

It would be analogous to interpret P → Q → R as (P → Q) ∧ (Q → R), but few, if any, do so. Instead, many interpret it as P → (Q → R), some interpret it as (P → Q) → R, and many reject it as ambiguous because it lacks parentheses. All these interpretations are different. For instance, if P is x ≥ 0, Q is $x^2 \geq 1$ and R is x ≥ 1, then

    formula                yields T
    (P → Q) ∧ (Q → R)      when −1 < x < 0 ∨ x ≥ 1
    P → (Q → R)            for every x
    (P → Q) → R            when x ≥ 0
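The three rows of the table can be spot-checked numerically. In this sketch, which is our own illustration, → is material implication on Python booleans, and the readings are evaluated at multiples of 1/4 between −3 and 3, which include the boundary points −1, 0 and 1. Sampling is a sanity check, not a proof.

```python
from fractions import Fraction

def implies(a: bool, b: bool) -> bool:
    # Material implication →: false only when a is true and b is false.
    return (not a) or b

def readings(x: Fraction) -> dict:
    """Truth of the three readings of P → Q → R at x."""
    p, q, r = x >= 0, x * x >= 1, x >= 1
    return {
        "(P→Q)∧(Q→R)": implies(p, q) and implies(q, r),
        "P→(Q→R)": implies(p, implies(q, r)),
        "(P→Q)→R": implies(implies(p, q), r),
    }

# Sample points from -3 to 3 in steps of 1/4, including -1, 0 and 1.
samples = [Fraction(n, 4) for n in range(-12, 13)]
for x in samples:
    row = readings(x)
    print(str(x).rjust(6), *("T" if row[k] else "F" for k in row))
```

Each printed line shows, for one sample x, whether the three readings yield T, in the order of the table.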

That is, → is treated like the operators + and ∪ in mathematics, not like the relation symbols ≤ and ⊆. It is also how other binary logical connectives are treated. Indeed, P ∨ Q ∨ R definitely does not mean the same as (P ∨ Q) ∧ (Q ∨ R)!

We observe that in the literature, when used as a logical symbol, → almost always denotes material implication, while the use of ⇒ is somewhat fuzzy but has aspects of a reasoning operator. This is why we recommend teaching → and not ⇒ as the material implication, making it clear what reasoning operators are, and teaching ⇒ as a reasoning operator.

We recall from Section 2 that ⇒ as a reasoning operator is different from logical consequence, because it uses the standard meaning of 0, +, ≤ and so on, while logical consequence uses every meaning allowed by Γ. Therefore, to students whose interest is in applying logic, ⇒ is both more useful and much easier to teach than logical consequence.

In this framework, solving an equation, an inequation or a system of them consists of deriving a formula that is mathematically equivalent to the original system and shows explicitly the value combinations of variables that make the formula yield T. We have already started an example by deriving $2x + 2y = 8 \wedge 3x - 2y = 7 \Rightarrow x = 3$. We may continue with $3x - 2y = 7 \wedge x = 3 \Rightarrow 9 - 2y = 7 \Leftrightarrow y = 1$ and $2x + 2y = 8 \wedge 3x - 2y = 7 \Leftrightarrow x = 3 \wedge y = 1$. That there are no roots is expressed by F, and that every value combination is a root by T. For instance, with real numbers, $x^2 + 1 = 0 \Leftrightarrow \mathrm{F}$.
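The arithmetic behind the final ⇔ can be confirmed with exact rational arithmetic. The sketch below uses Cramer's rule, which is our choice of method rather than the paper's, and `solve_2x2` is a hypothetical helper. A nonzero determinant means the system has exactly one root, which is what allows writing ⇔ rather than only ⇒.

```python
from fractions import Fraction

def solve_2x2(a, b, e, c, d, f):
    """Solve a*x + b*y = e and c*x + d*y = f by Cramer's rule.

    Returns the unique root (x, y) as Fractions, or None when the
    determinant is 0 (then there is no root or there are infinitely many).
    """
    det = a * d - b * c
    if det == 0:
        return None
    x = Fraction(e * d - b * f, det)
    y = Fraction(a * f - e * c, det)
    return x, y

# 2x + 2y = 8  ∧  3x − 2y = 7
root = solve_2x2(2, 2, 8, 3, -2, 7)
print(root)  # (Fraction(3, 1), Fraction(1, 1))
```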

We believe that many students first learn to solve (systems of) (in)equations as a mechanical procedure that they follow without asking why it yields the correct roots. Seeing that it is actually an application of more general logical reasoning may widen their understanding and promote higher-order thinking skills.

5 DEALING WITH UNDEFINED EXPRESSIONS

In Section 2 we presented a fake proof that $\frac{1}{0} = 0$. It was based on ignoring the fact that $\frac{1}{0}$ is undefined.

Standard systems of logic assume that every application of each function symbol is defined, and are thus unable to deal with undefined operations. Textbooks on mathematics may warn about them, but it is hard to find a systematic discussion. Compared to the amount of effort devoted to teaching the zero product property or the truth table of →, little is done to give students tools for avoiding such errors as in our proof of $\frac{1}{0} = 0$.


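$$
\begin{aligned}
& 3\sqrt{|x|-1} = x + 1 \\
\Leftrightarrow\; & (x < 0 \wedge 3\sqrt{-x-1} = x + 1) \vee (x \geq 0 \wedge 3\sqrt{x-1} = x + 1) && \text{$x < 0$ and $|x| = -x$, or $x \geq 0$ and $|x| = x$} \\
\Leftrightarrow\; & (x < 0 \wedge x = -1) \vee (x \geq 0 \wedge (x = 2 \vee x = 5)) && \text{solve each equation elsewhere} \\
\Leftrightarrow\; & x = -1 \vee x = 2 \vee x = 5 && \text{combine roots}
\end{aligned}
$$

Figure 2: A solution via analysis by cases presented in logical notation.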
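Figure 2 can be sanity-checked by evaluating the left-hand side directly. In this sketch, an undefined square root is represented by `None` (our modelling choice), and an undefined left-hand side never counts as a root. The candidate x = −10 is included because squaring 3√(−x−1) = x + 1 gives (x + 1)(x + 10) = 0; it is rejected because x + 1 is negative there. The value x = 0 shows a point where the equation is undefined.

```python
import math
from typing import Optional

def lhs(x: float) -> Optional[float]:
    """3·sqrt(|x| − 1), or None where the square root is undefined."""
    radicand = abs(x) - 1
    if radicand < 0:
        return None
    return 3 * math.sqrt(radicand)

def is_root(x: float) -> bool:
    value = lhs(x)
    # An undefined left-hand side does not make the equation true.
    return value is not None and math.isclose(value, x + 1)

for x in (-1, 2, 5, -10, 0):
    print(x, lhs(x), is_root(x))
```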

Some mathematicians, logicians and computer scientists have taken this problem seriously. As a matter of fact, there is a debate on how undefined expressions should be treated. Unfortunately, none of the earlier solutions seems to match everyday mathematical thinking. Therefore, (Valmari and Hella, 2017) presented an initial version of a system of our own. Since then, it has been given a sound and Gödel-complete proof system and compared to many other solutions (Valmari and Hella, 2021).

Perhaps the first (insufficient) idea is that when using $\frac{1}{x}$, the domain of discourse is not $\mathbb{R}$ but $\mathbb{R} \setminus \{0\}$; and with $\sqrt{x}$, it is $\{x \in \mathbb{R} \mid x \geq 0\}$. This idea runs into trouble with the reasoning in Figure 2, which shows the splitting of an equation into two cases and the combination of the results of the cases, with the cases solved via other reasoning chains that are not shown. The idea makes the domain of discourse of the second line the empty set, because $\sqrt{-x-1}$ requires $x \leq -1$ while $\sqrt{x-1}$ requires $x \geq 1$.

Despite this, the idea works well and is widely used with arithmetic comparison chains such as $\frac{x^2-9}{x^2+x-6} = \frac{(x+3)(x-3)}{(x+3)(x-2)} = \frac{x-3}{x-2}$. In the absence of a remark to the effect that $x \neq -3$, the second = is widely considered incorrect, but the first = is often accepted.

The unconscious rule seems to be that when both sides of a comparison are undefined for precisely the same combinations of values of variables, the remark need not be made. This does not mean, however, that undefined is treated as equal to itself, since mathematicians do not accept 0 as a root of $\frac{1}{2x} + x = \frac{1}{x}$.

In logic, a term is an expression whose value is in the domain of discourse (as opposed to a truth value). Many computer scientists entertain the idea that every undefined term yields a value from the domain of discourse, but we do not necessarily know what value (Gries and Schneider, 1995). This approach is not Gödel-complete. It makes it unknown whether 0 is a root of $\frac{1}{x} = x$, while in everyday mathematics it is definitely not a root. Furthermore, it makes $\frac{1}{0} \in \mathbb{R}$ and thus $\frac{1}{0} + 0 = \frac{1}{0}$; since $2 \cdot 0 = 0$, this makes 0 a root of $\frac{1}{2x} + x = \frac{1}{x}$.

In our fake proof that $\frac{1}{0} = 0$, the error took place in the step from “if $\frac{1}{x} > 0$, then x > 0” to “if x ≤ 0, then $\frac{1}{x} \leq 0$”. There $\frac{1}{0} \leq 0$ was derived from $\neg(\frac{1}{0} > 0)$. However, mathematicians consider both $\frac{1}{0} > 0$ and $\frac{1}{0} \leq 0$ as not true. This suggests that an undefined formula is neither false nor true. Indeed, many people share this idea. For instance, when over 200 software developers were asked how various undefined situations should be interpreted, between 74 % and 91 % chose “error/exception” instead of “true”, “false”, and “other (provide details)” (Chalin, 2005).

Therefore, we introduce a third truth value U (undefined) and let ¬U yield U. At least three options for ∧ and ∨ have been suggested in the literature. The one that matches the intention in Figure 2 is obtained by thinking of F as less true than U, which in turn is less true than T, and letting ∧ and ∀ pick the minimum and ∨ and ∃ the maximum of the truth values of their arguments. A relation yields U if and only if at least one of its arguments is undefined.
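The ordering F < U < T makes the chosen conjunction and disjunction easy to compute. The sketch below is a minimal model under our own assumptions: truth values are an `IntEnum`, ∀ and ∃ are modelled only over finitely many instances (as `min` and `max`), and an undefined term is represented by `None`. The relation `less_than` is a hypothetical example of the rule that a relation yields U exactly when an argument is undefined.

```python
from enum import IntEnum
from typing import Optional

class TV(IntEnum):
    """Truth values ordered F < U < T, as in the text."""
    F = 0
    U = 1
    T = 2

def neg(p: TV) -> TV:
    # ¬ swaps F and T and leaves U unchanged.
    return TV(2 - p)

def conj(*args: TV) -> TV:
    # ∧ (and ∀ over finitely many instances) picks the minimum.
    return min(args)

def disj(*args: TV) -> TV:
    # ∨ (and ∃ over finitely many instances) picks the maximum.
    return max(args)

def less_than(a: Optional[float], b: Optional[float]) -> TV:
    # A relation yields U iff at least one argument is undefined (None).
    if a is None or b is None:
        return TV.U
    return TV.T if a < b else TV.F

for p in TV:
    print(" ".join(f"{p.name}∧{q.name}={conj(p, q).name}" for q in TV))
```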

For $3\sqrt{|x|-1} = x + 1 \Leftrightarrow x = -1 \vee x = 2 \vee x = 5$ to hold when x = 0, we have to accept that U ⇔ F: at x = 0 the left-hand side yields U, because $\sqrt{-1}$ is undefined, while the right-hand side yields F. To keep ⇔ symmetric, we also have F ⇔ U. To avoid reasoning U ⇔ ¬U ⇔ ¬F ⇔ T, we no longer let P ⇔ Q give permission to replace P by Q in a bigger formula R(P) of which P is a part.

Instead, we introduce a new reasoning operator ≡ that does not treat U as equivalent to F. That is, P ≡ Q if and only if for every value combination of variables that is allowed by the context, P and Q yield the same truth value. If P ≡ Q, then P ⇔ Q and R(P) ≡ R(Q), and thus R(P) ⇔ R(Q).
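The difference between ⇔ and ≡ shows up already at a single value combination. This sketch, which is ours, compares two truth values directly, so it covers one allowed value combination, whereas the real operators quantify over all of them. It reproduces the problematic chain: U ⇔ F holds, but applying ¬ to both sides gives U and T, which are not ⇔-equivalent.

```python
from enum import IntEnum
from itertools import product

class TV(IntEnum):
    F = 0
    U = 1
    T = 2

def neg(p: TV) -> TV:
    return TV(2 - p)

def reasoning_equiv(p: TV, q: TV) -> bool:
    # P ⇔ Q: both yield T, or neither does; U and F are not told apart.
    return (p == TV.T) == (q == TV.T)

def strict_equiv(p: TV, q: TV) -> bool:
    # P ≡ Q: both yield the same truth value.
    return p == q

print(reasoning_equiv(TV.U, TV.F))            # True
print(reasoning_equiv(neg(TV.U), neg(TV.F)))  # False: ¬U is U, but ¬F is T
print(strict_equiv(TV.U, TV.F))               # False

# Whenever P ≡ Q holds, so do P ⇔ Q and ¬P ≡ ¬Q.
assert all(reasoning_equiv(p, q) and strict_equiv(neg(p), neg(q))
           for p, q in product(TV, repeat=2) if strict_equiv(p, q))
```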

We let every function symbol f have a corresponding formula $\lfloor f \rceil$ that specifies when f is defined. For instance, with real numbers, $\lfloor \sqrt{x} \rceil$ is $x \geq 0$, $\lfloor \frac{x}{y} \rceil$ is $y \neq 0$, and $\lfloor x + y \rceil$ is T, denoting that x + y is always defined. To ensure that $\lfloor f \rceil$ itself is always defined, we require that if it mentions any function symbol g, then $\lfloor g \rceil$ is T.

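This idea extends naturally to terms and formulas. For instance, $\frac{f}{g}$ is defined if and only if both f and g are defined and g does not yield 0. That is, $\lfloor \frac{f}{g} \rceil$ is $\lfloor f \rceil \wedge \lfloor g \rceil \wedge g \neq 0$. Furthermore, $\lfloor f \leq g \rceil$ is $\lfloor f \rceil \wedge \lfloor g \rceil$, $\lfloor \neg P \rceil$ is $\lfloor P \rceil$, and $\lfloor P \wedge Q \rceil$ is $(\lfloor P \rceil \wedge \lfloor Q \rceil) \vee (\lfloor P \rceil \wedge \neg P) \vee (\lfloor Q \rceil \wedge \neg Q)$. For any formula P and combination of values of variables, precisely one of P, ¬P and $\neg\lfloor P \rceil$ yields T.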
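The rule for ⌊P ∧ Q⌉ and the claim that precisely one of P, ¬P and ¬⌊P⌉ yields T can be checked exhaustively over the three truth values. In this sketch, which is our own, the values of ⌊P⌉ and ⌊Q⌉ are plain Python booleans, reflecting that ⌊ ⌉ is always defined; at a given value combination, ⌊P⌉ yields T exactly when P does not yield U.

```python
from enum import IntEnum
from itertools import product

class TV(IntEnum):
    """Truth values ordered F < U < T."""
    F = 0
    U = 1
    T = 2

def neg(p: TV) -> TV:
    return TV(2 - p)

def conj(p: TV, q: TV) -> TV:
    return min(p, q)

def defined_conj(dp: bool, p: TV, dq: bool, q: TV) -> bool:
    """The rule for ⌊P ∧ Q⌉: (⌊P⌉ ∧ ⌊Q⌉) ∨ (⌊P⌉ ∧ ¬P) ∨ (⌊Q⌉ ∧ ¬Q).

    dp and dq are the values of ⌊P⌉ and ⌊Q⌉; they are plain bools because
    a definedness formula is always defined, so it only yields T or F.
    """
    return (dp and dq) or (dp and neg(p) == TV.T) or (dq and neg(q) == TV.T)

for p, q in product(TV, repeat=2):
    dp, dq = p != TV.U, q != TV.U  # ⌊P⌉ yields T exactly when P does not yield U
    # The rule agrees with the semantics: P ∧ Q is defined iff it does not yield U.
    assert defined_conj(dp, p, dq, q) == (conj(p, q) != TV.U)

for p in TV:
    # Precisely one of P, ¬P and ¬⌊P⌉ yields T.
    assert [p == TV.T, neg(p) == TV.T, p == TV.U].count(True) == 1

print("rule for ⌊P ∧ Q⌉ and the trichotomy hold for all truth values")
```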

Assume that P(Q) is a formula that contains no other logical connectives or quantifiers than ¬, ∧, ∨, ∀ and ∃, and has Q as a sub-formula. If Q is within the scope of an even number of ¬, then $P(Q) \Leftrightarrow P(\lfloor Q \rceil \wedge Q)$, and otherwise $P(Q) \Leftrightarrow P(\neg\lfloor Q \rceil \vee Q)$. This facilitates reduction of problems to a form where nothing is undefined, and then solving them using classical two-valued logic. For instance,

$$
\begin{aligned}
\frac{x^2-9}{x^2+x-6} = 2
&\Leftrightarrow x^2 + x - 6 \neq 0 \wedge x^2 - 9 = 2(x^2 + x - 6) \\
&\Leftrightarrow x^2 + x - 6 \neq 0 \wedge (x = -3 \vee x = 1) \\
&\Leftrightarrow x = 1.
\end{aligned}
$$

It is also correct to reason $\frac{x^2-9}{x^2+x-6} = 2 \Rightarrow x^2 - 9 = 2(x^2 + x - 6) \Leftrightarrow x = -3 \vee x = 1$ and then check the roots, rejecting −3 and accepting 1.
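Both ways of solving the example can be replayed with exact arithmetic. In the sketch below, which is ours, the left-hand side returns `None` where the denominator is 0; the two candidates from the cleared equation are then checked against the original equation, as in the second approach.

```python
from fractions import Fraction
from typing import Optional

def lhs(x: Fraction) -> Optional[Fraction]:
    """(x² − 9)/(x² + x − 6), or None where it is undefined."""
    den = x * x + x - 6
    if den == 0:
        return None
    return (x * x - 9) / den

# Candidates from the two-valued step x² − 9 = 2(x² + x − 6) ⇔ x = −3 ∨ x = 1.
for x in (Fraction(-3), Fraction(1)):
    assert x * x - 9 == 2 * (x * x + x - 6)  # both satisfy the cleared equation
    value = lhs(x)
    if value is None:
        verdict = "reject: the original equation is undefined here"
    elif value == 2:
        verdict = "accept"
    else:
        verdict = "reject"
    print(x, verdict)
```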

This idea has been used in computer science, and many mathematicians also seem to use it. Its correctness is not trivial to prove. Furthermore, it fails if a connective ∗ is allowed such that ∗F ≡ ∗T ≡ T and ∗U ≡ F. Note that $\lfloor \cdot \rceil$ is not such a connective; it is not a connective at all, because it acts on formulas instead of truth values.

Familiar laws on the domain of discourse remain correct, such as x − x = 0 and 0x = 0; but an issue arises regarding their use: clearly $\frac{1}{x} - \frac{1}{x} = 0$ and $0 \cdot \frac{1}{x} = 0$ are not correct when x = 0. Therefore, we have to teach students to distinguish between values and terms. Constant and variable symbols (such as 1 and x) denote values and are always defined. However, when a more complicated term (such as $\frac{1}{x}$) is put in the place of x (this is called instantiation of x with $\frac{1}{x}$), it is not necessarily always defined.

Fortunately, there are special cases where everything works as in classical two-valued logic, and there are theorems that tell how to deal with many of the remaining cases. We cannot cover the topic here in full, but we try to give a feeling for it.

Let f and g be terms and P(x) a formula. If for each variable value combination that is allowed by the context either f = g or both f and g are undefined, then P(f) ≡ P(g). Therefore, if f = g has been promised under the convention mentioned earlier without any remark about the domain, we need not worry about the domain. For instance, we may put $\frac{(x+3)(x-3)}{(x+3)(x-2)}$ in place of $\frac{x^2-9}{x^2+x-6}$ even if x may be −3.
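The condition of this replacement theorem can be spot-checked on sample points. This sketch is our own illustration: terms are Python functions that return `None` where they are undefined, and `equal_or_both_undefined` is a hypothetical helper that tests the condition at finitely many points, so it can refute the condition but not prove it.

```python
from fractions import Fraction
from typing import Callable, Iterable, Optional

Term = Callable[[Fraction], Optional[Fraction]]  # None stands for "undefined"

def original(x: Fraction) -> Optional[Fraction]:
    den = x * x + x - 6
    return None if den == 0 else (x * x - 9) / den

def factored(x: Fraction) -> Optional[Fraction]:
    den = (x + 3) * (x - 2)
    return None if den == 0 else (x + 3) * (x - 3) / den

def cancelled(x: Fraction) -> Optional[Fraction]:
    den = x - 2
    return None if den == 0 else (x - 3) / den

def equal_or_both_undefined(f: Term, g: Term, xs: Iterable[Fraction]) -> bool:
    # None == None is True and None never equals a number, so this tests
    # "f = g, or both f and g are undefined" at every sample point.
    return all(f(x) == g(x) for x in xs)

samples = [Fraction(n, 2) for n in range(-20, 21)]  # includes -3 and 2
print(equal_or_both_undefined(original, factored, samples))   # True
print(equal_or_both_undefined(original, cancelled, samples))  # False (at x = -3)
```

The second check fails at x = −3, where the original term is undefined but the cancelled one yields 6/5; this is why the second = in the comparison chain needs a remark.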

In particular, the condition holds automatically with instantiations of =-laws whose two sides contain precisely the same variables. Such a law may be instantiated without worrying whether what goes in the place of the variables is defined. For instance, x + y = y + x is such a law, but x − x = 0 is not.

Unfortunately, the same does not apply to ⇔- and ⇒-laws. For instance, the rule that a product is 0 if and only if at least one of its factors is 0 is vulnerable to the mis-reasoning $x \cdot \frac{1}{x} = 0 \Leftrightarrow x = 0 \vee \frac{1}{x} = 0 \Leftrightarrow x = 0$. We recommend teaching that a product is 0 if and only if at least one of its factors is 0 and all factors are defined. This is different from the law x > y ⇔ ¬(x ≤ y), which does not need a similar remark. Even x > y ≡ ¬(x ≤ y) holds unconditionally.
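The difference between the mis-applied zero product property and the recommended version is visible at x = 0. In this sketch, which is ours, `None` stands for an undefined factor and `product_is_zero` returns `None` for U; all helper names are hypothetical.

```python
from fractions import Fraction
from typing import List, Optional

def recip(x: Fraction) -> Optional[Fraction]:
    # 1/x, or None where it is undefined.
    return None if x == 0 else 1 / x

def product_is_zero(factors: List[Optional[Fraction]]) -> Optional[bool]:
    """Three-valued "the product is 0": None (U) if some factor is undefined."""
    if any(f is None for f in factors):
        return None
    prod = Fraction(1)
    for f in factors:
        prod *= f
    return prod == 0

def naive_rule(factors: List[Optional[Fraction]]) -> bool:
    # Mis-applied zero product property: "some factor is 0".
    return any(f == 0 for f in factors)

def recommended_rule(factors: List[Optional[Fraction]]) -> bool:
    # "Some factor is 0 and all factors are defined."
    return all(f is not None for f in factors) and any(f == 0 for f in factors)

x = Fraction(0)
factors = [x, recip(x)]
print(product_is_zero(factors), naive_rule(factors), recommended_rule(factors))
# None True False
```

At x = 0 the naive rule claims a root, while the product itself is undefined and the recommended rule correctly does not yield true.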

To deal with such cases as $\frac{x}{x} = 1$ or $\frac{1}{x} - \frac{1}{x} = 0$, there is a theorem that assumes that the logic has a property known as regularity. A sufficient but not necessary condition for regularity is that there are no other connectives and quantifiers than ¬, ∧, ∨, ∀ and ∃. Then $\lfloor f \rceil \Rightarrow f = g$ implies $P(f) \Rightarrow P(g)$.

In addition to the above, the presence of U affects propositional reasoning. For instance, the law of contraposition now has many forms, including “if P ⇒ Q, then $\neg Q \vee \neg\lfloor Q \rceil \Rightarrow \neg P \vee \neg\lfloor P \rceil$” and “if $P \Rightarrow \neg Q \vee \neg\lfloor Q \rceil$, then $Q \Rightarrow \neg P \vee \neg\lfloor P \rceil$”. Of course, P ∨ ¬P ⇔ T must be replaced by $P \vee \neg P \vee \neg\lfloor P \rceil \equiv \mathrm{T}$. The law P ∧ ¬P ⇔ F still holds, but only because U ⇔ F; we do not have P ∧ ¬P ≡ F. We have $P \wedge \neg P \wedge \lfloor P \rceil \equiv \mathrm{F}$. These effects are mostly easy to take into account in reasoning. Furthermore, almost all widely mentioned propositional laws that do not use → or ↔ are also valid in the presence of U.
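The modified laws can be verified by checking all three truth values of P. In this sketch, which is ours, `defined(p)` gives the truth value that ⌊P⌉ has at a value combination where P yields p; it is a convenience for the check, not a claim that ⌊ ⌉ is a connective. The helper `iff` models the reasoning operator ⇔ at a single value combination.

```python
from enum import IntEnum

class TV(IntEnum):
    F = 0
    U = 1
    T = 2

def neg(p: TV) -> TV:
    return TV(2 - p)

def conj(*ps: TV) -> TV:
    return min(ps)

def disj(*ps: TV) -> TV:
    return max(ps)

def defined(p: TV) -> TV:
    # Truth value of ⌊P⌉ at a value combination where P yields p.
    return TV.F if p == TV.U else TV.T

def iff(p: TV, q: TV) -> bool:
    # The reasoning operator ⇔ at one value combination.
    return (p == TV.T) == (q == TV.T)

print("P  P∨¬P  P∨¬P∨¬⌊P⌉  P∧¬P  P∧¬P∧⌊P⌉")
for p in TV:
    print(p.name, disj(p, neg(p)).name, disj(p, neg(p), neg(defined(p))).name,
          conj(p, neg(p)).name, conj(p, neg(p), defined(p)).name, sep="  ")
```

The U row shows the point of the text: P ∨ ¬P and P ∧ ¬P both yield U there, while the versions with ⌊P⌉ yield T and F, respectively.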

An interesting exception is that P ∧ (¬P ∨ Q) and P ∨ (¬P ∧ Q) are no longer equivalent to P ∧ Q and P ∨ Q. If P yields F, U or T, then P ∧ (¬P ∨ Q) yields F, U or the same as Q, respectively. This is exactly how the “and” operator of many programming languages behaves if U is interpreted as a program crash. Three-valued logic thus naturally represents a phenomenon that is important in programming but ignored by classical two-valued logic.
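The correspondence with programming languages can be demonstrated in Python, whose `and` evaluates its right operand only when the left one is true. In this sketch, which is ours, U is modelled as a raised exception standing for a program crash, following the interpretation in the text, and the three-valued formula P ∧ (¬P ∨ Q) is compared with the short-circuit `and` on all nine combinations of truth values.

```python
from enum import IntEnum

class TV(IntEnum):
    F = 0
    U = 1
    T = 2

def neg(p: TV) -> TV:
    return TV(2 - p)

def conj(p: TV, q: TV) -> TV:
    return min(p, q)

def disj(p: TV, q: TV) -> TV:
    return max(p, q)

class Crash(Exception):
    """A run-time error; our stand-in for the truth value U."""

def run(p: TV) -> bool:
    # Evaluate a program condition whose logical value is p; U crashes.
    if p == TV.U:
        raise Crash()
    return p == TV.T

def short_circuit_and(p: TV, q: TV) -> TV:
    # Python's `and` evaluates run(q) only if run(p) returned True.
    try:
        return TV.T if (run(p) and run(q)) else TV.F
    except Crash:
        return TV.U

for p in TV:
    for q in TV:
        assert conj(p, disj(neg(p), q)) == short_circuit_and(p, q)

# Plain P ∧ Q differs: U ∧ F is F, but a crash in the left operand is U.
print(conj(TV.U, TV.F).name, short_circuit_and(TV.U, TV.F).name)  # F U
```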

There are two main conventions for what → should mean in three-valued logic: one by Jan Łukasiewicz and another by Stephen Cole Kleene. Modus ponens holds for both, but the deduction theorem holds for neither. Neither of them matches ⇒, because U ⇔ F. Łukasiewicz’s convention is not regular but Kleene’s is, since it makes P → Q equivalent to ¬P ∨ Q.
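The two conventions differ in exactly one case. The sketch below uses a common numeric presentation, which we adopt as an assumption: F = 0, U = 1/2 and T = 1, with Kleene's P → Q computed as max(1 − p, q) (that is, ¬P ∨ Q) and Łukasiewicz's as min(1, 1 − p + q). It also checks modus ponens for both, and shows that U → F does not yield T in either, although U ⇒ F is a correct reasoning step because U is not T.

```python
from fractions import Fraction

# Numeric encoding (our assumption): F = 0, U = 1/2, T = 1.
F, U, T = Fraction(0), Fraction(1, 2), Fraction(1)
VALUES = (F, U, T)
NAME = {F: "F", U: "U", T: "T"}

def kleene_imp(p: Fraction, q: Fraction) -> Fraction:
    # Kleene: P → Q is ¬P ∨ Q, i.e. max(1 − p, q).
    return max(1 - p, q)

def lukasiewicz_imp(p: Fraction, q: Fraction) -> Fraction:
    # Łukasiewicz: min(1, 1 − p + q).
    return min(T, 1 - p + q)

for p in VALUES:
    for q in VALUES:
        k, luk = kleene_imp(p, q), lukasiewicz_imp(p, q)
        if k != luk:
            print(f"{NAME[p]} → {NAME[q]}: Kleene {NAME[k]}, Łukasiewicz {NAME[luk]}")

for imp in (kleene_imp, lukasiewicz_imp):
    # Modus ponens: if P and P → Q both yield T, then Q yields T.
    assert all(q == T for p in VALUES for q in VALUES if p == T and imp(p, q) == T)
    # U ⇒ F is a correct reasoning step, but U → F does not yield T.
    assert imp(U, F) != T
```

The only line printed is `U → U: Kleene U, Łukasiewicz T`.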

Let f be free for x in P(x). The law ∀x : P(x) ⇒ P(f) must be replaced by $\lfloor f \rceil \wedge \forall x : P(x) \Rightarrow P(f)$. If the logic is not regular, then P(f) ⇒ ∃x : P(x) must be replaced by $\lfloor f \rceil \wedge P(f) \Rightarrow \exists x : P(x)$.

There is strong evidence that the phenomena we discussed above cover all deviations from familiar reasoning rules. Namely, so-called first-order logic covers much of the use of predicate logic. Unlike higher-order logics, it is complete in the sense that all logical consequences can be proven. (Valmari and Hella, 2021) proved that our three-valued first-order logic is also complete. Furthermore, only five proof rules of our complete proof system differ from the corresponding system for classical two-valued logic: the two above-mentioned quantifier rules, $\emptyset \vdash P \vee \neg P \vee \neg\lfloor P \rceil$, $\{P\} \vdash \lfloor P \rceil$, and $\{\lfloor f \rceil\} \vdash f = f$.


6 CONCLUSIONS

The success of teaching formal logic as a practical tool has been mediocre. We pointed out issues that may be problematic for students.

We suggested showing students examples where intuition has led people astray, such as Wason’s selection task or the debate on Carroll’s paradox. This may help them reject incorrect intuitive rules of reasoning in favour of the rules of formal logic.

We suggested teaching students the following: Trust the principle that “if . . . then . . . ” holds if and only if it has no counter-examples. The principle of explosion follows from this, so accept it, although it may seem counter-intuitive at first. “If . . . then . . . ” may mean material implication or a reasoning rule. Use ∀ appropriately to capture the intended meaning. Denote material implication with →, and the similar reasoning rule with ⇒. Use the phrases “mathematical consequence” and so on instead of “logical consequence” and so on, because the latter do not assume that 0, +, ≤ and so on have their standard meaning.

We presented conventions for reasoning operators and the treatment of undefined operations. They have proven well-defined and rigorous enough to be used in educational software written by us (Valmari, 2021).

REFERENCES

Association for Computing Machinery (ACM) and IEEE Computer Society (2013). Computer Science Curricula 2013: Curriculum Guidelines for Undergraduate Degree Programs in Computer Science. ACM, New York, NY, USA.

Bronkhorst, H., Roorda, G., Suhre, C., and Goedhart, M. (2020). Logical reasoning in formal and everyday reasoning tasks. International Journal of Science and Mathematics Education, 18:1673–1694.

Carroll, L. (1894). A logical paradox. Mind, 3(11):436–438.

Chalin, P. (2005). Logical foundations of program assertions: What do practitioners want? In Aichernig, B. K. and Beckert, B., editors, Third IEEE International Conference on Software Engineering and Formal Methods (SEFM 2005), 7-9 September 2005, Koblenz, Germany, pages 383–393. IEEE Computer Society.

Egré, P. and Rott, H. (2021). The Logic of Conditionals. In Zalta, E. N., editor, The Stanford Encyclopedia of Philosophy. Metaphysics Research Lab, Stanford University, Winter 2021 edition.

Gries, D. and Schneider, F. B. (1995). Avoiding the undefined by underspecification. In Computer science today, volume 1000 of Lecture Notes in Comput. Sci., pages 366–373. Springer, Berlin.

Hammack, R. (2018). Book Of Proof. Self-published, 3rd edition. Approved by the American Institute of Mathematics’ Open Textbook Initiative.

Jones, E. E. C. (1905). Lewis Carroll’s logical paradox. Mind, 14(56):576–578.

Kahneman, D. (2011). Thinking, fast and slow. Farrar, Straus and Giroux, New York.

Lethbridge, T. (2000). What knowledge is important to a software professional? IEEE Computer, 33(5):44–50.

Mathieu-Soucy, S. (2016). Should university students know about formal logic: an example of nonroutine problem. In INDRUM 2016: First conference of the International Network for Didactic Research in University Mathematics. hal-01337943.

Moktefi, A. (2007). Lewis Carroll and the British nineteenth-century logicians on the barber shop problem. In Cupillari, A., editor, Proceedings of The Canadian Society for the History and Philosophy of Mathematics’ Annual Meeting, pages 189–199.

Niemelä, P., Valmari, A., and Ali-Löytty, S. (2018). Algorithms and logic as programming primers. In McLaren, B. M., Reilly, R., Zvacek, S., and Uhomoibhi, J., editors, Computer Supported Education - 10th International Conference, CSEDU 2018, Funchal, Madeira, Portugal, March 15-17, 2018, Revised Selected Papers, volume 1022 of Communications in Computer and Information Science, pages 357–383. Springer.

Ragni, M., Kola, I., and Johnson-Laird, P. (2017). The Wason selection task: A meta-analysis. In Proceedings of the 39th Annual Meeting of the Cognitive Science Society, pages 980–985.

Reeves, S. and Clarke, M. (1990). Logic for Computer Science. Addison-Wesley.

Stefanowicz, A. (2014). Proofs and Mathematical Reasoning. University of Birmingham, Mathematics Support Centre.

Valmari, A. (2021). Automated checking of flexible mathematical reasoning in the case of systems of (in)equations and the absolute value operator. In Csapó, B. and Uhomoibhi, J., editors, Proceedings of the 13th International Conference on Computer Supported Education, CSEDU 2021, Online Streaming, April 23-25, 2021, Volume 2, pages 324–331. SCITEPRESS.

Valmari, A. and Hella, L. (2017). The logics taught and used at high schools are not the same. In Karhumäki, J., Matiyasevich, Y., and Saarela, A., editors, Proc. of the Fourth Russian Finnish Symposium on Discrete Mathematics, number 26 in TUCS Lecture Notes, pages 172–186. Turku Centre for Computer Science.

Valmari, A. and Hella, L. (2021). A completeness proof for a regular predicate logic with undefined truth value. arXiv:2112.04436.

van Benthem, J. (2008). Logic and reasoning: Do the facts matter? Studia Logica, 88(1):67–84.

Wason, P. C. (1968). Reasoning about a rule. Quarterly Journal of Experimental Psychology, 20(3):273–281.
