IMITATING THE KNOWLEDGE MANAGEMENT OF COMMUNITIES OF PRACTICE

Juan Pablo Soto¹, Aurora Vizcaíno¹, Javier Portillo¹, Oscar M. Rodríguez-Elias² and Mario Piattini¹

¹ Alarcos Research Group, Information Systems and Technologies Department, UCLM-Soluziona Research and Development Institute, University of Castilla – La Mancha, Spain

² UABC, Facultad de Ciencias, Ensenada México

Keywords: Reputation, multi-agent architecture, communities of practice, knowledge management.

Abstract: Advances in technology have led to the development of knowledge management systems intended to improve organizational performance. Nevertheless, implementing these kinds of mechanisms is not an easy task, because social aspects (such as reputation) that improve the exchange of information between groups of people must be taken into account. Considering the advantages of working in groups with similar interests, we have modelled communities of agents which represent communities of people interested in similar topics. To implement this model we propose a multi-agent architecture in charge of evaluating the relevance of the knowledge in a knowledge base and the degree of reputation that a person has as a contributor of information. We pay particular attention to showing how the agents work, using a prototype system that searches for knowledge related to a particular domain of a community of practice. Several communities of agents integrated into an organization are able to follow the interaction process of employees as they carry out their daily activities.

1 INTRODUCTION

For several decades human behaviour has been studied with the objective of imitating certain aspects of it in computational systems (Schaeffer et al, 1996). Based on this idea, we have studied how people obtain and increase their knowledge in their daily work. From this study we realised that employees frequently exchange knowledge with people who work on similar topics and that, consequently, communities are created, either formally or informally. These can be called “communities of practice”, by which we mean groups of people with a common interest where each member contributes knowledge about a common domain (Wenger, 1998).

Communities of practice enable their members to benefit from each other’s knowledge. This knowledge resides not only in people’s minds but also in the interaction between people and documents. Interestingly, individuals are frequently more likely to use knowledge built by members of their own community than knowledge created by people outside their group (Desouza et al, 2006). This happens because people place more trust in information offered by a member of their community than in information supplied by a person who does not belong to that community. Thus, another concept comes into play in the process of obtaining information: “trust”, which can be defined as “confidence in the ability and intention of an information source to deliver correct information” (Barber & Kim, 2004). Therefore, people, in real life in general and in companies in particular, prefer to exchange knowledge with “trustworthy people”, by which we mean people they trust. Of course, belonging to the same community of practice already implies that members have similar interests and perhaps the same level of knowledge about a topic. Consequently, the level of trust within a community is often higher than that which exists outside it. Because of this, as is claimed in (Desouza et al, 2006), knowledge reuse tends to be restricted within groups.

Bearing in mind that people exchange information with “trustworthy knowledge sources”, we have designed a multi-agent architecture in which agents try to emulate the way humans evaluate knowledge sources. The goal is to foster the use of knowledge bases in companies, with agents providing “trustworthy knowledge” to employees.

Pablo Soto J., Vizcaíno A., Portillo J., M. Rodríguez-Elias O. and Piattini M. (2007). IMITATING THE KNOWLEDGE MANAGEMENT OF COMMUNITIES OF PRACTICE. In Proceedings of the Fourth International Conference on Informatics in Control, Automation and Robotics, pages 173-178. Copyright © SciTePress

The rest of the paper is organized as follows. Section 2 describes the multi-agent architecture. Section 3 illustrates how the architecture has been used to implement a prototype which detects and suggests trustworthy documents for members of a community of practice. Section 4 outlines related work and, finally, Section 5 presents our conclusions.

2 A MULTI-AGENT ARCHITECTURE TO DEVELOP TRUSTWORTHY KNOWLEDGE BASES

Many organizations concerned about their competitive advantage use knowledge bases to store their knowledge. However, the knowledge that is put into such a system is sometimes not very valuable. This decreases the trust that employees have in their organizational knowledge and reduces the probability that they will use it. To avoid this situation we have developed a multi-agent architecture in charge of monitoring and evaluating the knowledge stored in a knowledge base.

To design this architecture we have taken into

account how people obtain information in their daily

lives. Bearing in mind the advantages of working

with groups of similar interests we have organized

the agents into communities of people who are

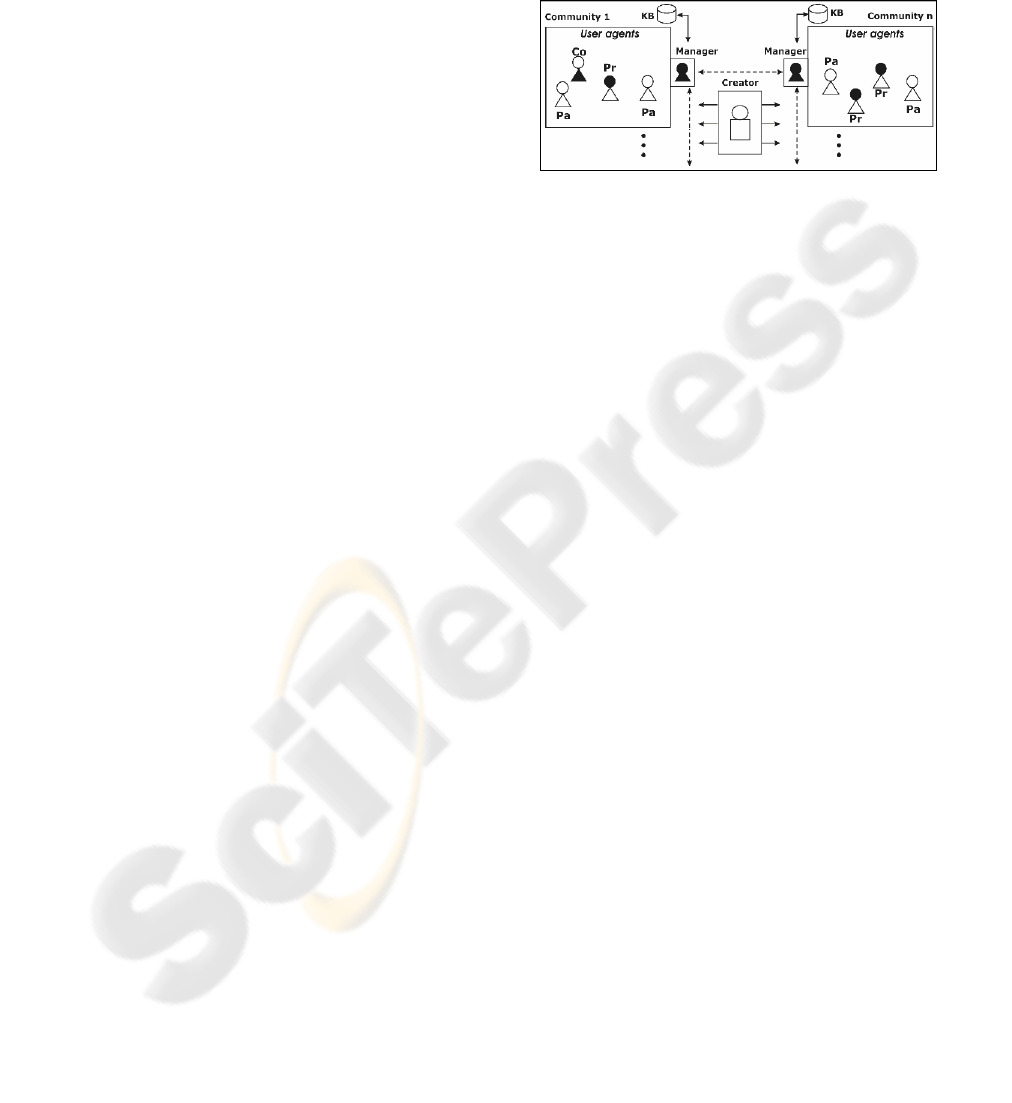

interested in similar topics. Thus, Figure 1 shows

different communities where there are two types of

agents: the User Agent and the Manager Agent. The

former is used to represent each person that may

consult or introduce knowledge in a knowledge

base. The User Agent can assume three types of

behaviour or roles similar to the tasks that a person

can carry out in a knowledge base. Therefore, the

User Agent plays one role or another depending

upon whether the person that it represents carries out

one of the following actions:

The person contributes new knowledge to

the communities in which s/he is registered.

In this case the User Agent plays the role of

Provider (Pr).

The person uses knowledge previously

stored in the community. Then, the User

Agent will be considered as a Consumer

(Co).

The person helps other users to achieve their

goals, for instance by giving an evaluation

of certain knowledge. In this case the role is

of a Partner (Pa). So, Figure 1 shows that

in community 1 there are two User Agents

playing the role of Partner, one User Agent

playing the role of Consumer and another

being a Provider.

Figure 1: Multi-agent architecture.
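As a minimal illustration (not the authors' implementation), the role switching described above can be sketched in Python. Only the three roles and their abbreviations come from the text; the action names `contribute`, `consult` and `evaluate`, and the layout of the `UserAgent` class, are hypothetical.

```python
from enum import Enum
from typing import Optional


class Role(Enum):
    """Roles a User Agent can play; values are the abbreviations used in Figure 1."""
    PROVIDER = "Pr"  # the person contributes new knowledge
    CONSUMER = "Co"  # the person uses knowledge already stored
    PARTNER = "Pa"   # the person helps others, e.g. by evaluating knowledge


# Hypothetical action names: the paper describes these actions only in prose.
ACTION_TO_ROLE = {
    "contribute": Role.PROVIDER,
    "consult": Role.CONSUMER,
    "evaluate": Role.PARTNER,
}


class UserAgent:
    """Represents one person; its role changes with what the person is doing."""

    def __init__(self, person: str) -> None:
        self.person = person
        self.role: Optional[Role] = None

    def act(self, action: str) -> Role:
        if action not in ACTION_TO_ROLE:
            raise ValueError(f"unknown action: {action!r}")
        self.role = ACTION_TO_ROLE[action]
        return self.role


# Community 1 from Figure 1: two Partners, one Consumer and one Provider.
community_1 = [UserAgent(p) for p in ("p1", "p2", "p3", "p4")]
actions = ["evaluate", "evaluate", "consult", "contribute"]
roles = [agent.act(a) for agent, a in zip(community_1, actions)]
```

Because the role is recomputed on every action, the same agent can be a Consumer in one interaction and a Provider in the next.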

The fact that this agent can act both as a consumer and as a provider of knowledge may lead to better results, because this dual role encourages the active participation of the individual in the learning process, which often results in the development of creativity and critical thinking (Kan, 1999).

The conceptual model of this agent, whose goals are to detect trustworthy agents and sources, is based on two closely related concepts: trust and reputation. The former can be defined as confidence in the ability and intention of an information source to deliver correct information (Barber & Kim, 2004), and the latter as the amount of trust an agent has in an information source, built up through interactions with information sources. This definition of reputation is the most appropriate for our research, since the level of confidence in a source is based on previous experience with it. For this reason the remainder of the paper deals solely with reputation.

However, if we attempt to imitate the behaviour of employees in a company when they exchange and obtain information, we observe that, apart from reputation, other factors also have an influence. These are:

- Position: employees often consider information that comes from a boss to be more reliable than information that comes from another employee in the same (or a lower) position (Wasserman & Glaskiewics, 1994). However, this is not a universal truth and depends on the situation. For instance, in a collaborative learning setting collaboration is more likely to occur between people of a similar status than between a boss and his/her employee or between a teacher and pupils (Dillenbourg, 1999). Because of this, as will be explained later, in our research this factor is calculated by taking into account a weight that can strengthen it to a greater or lesser degree.
- Expertise: this term can be briefly defined as the skill or knowledge of a person who knows a great deal about a specific thing. This is an important factor since people often trust experts more than novice employees. Moreover, tools for expertise location (Crowder et al, 2002) are being developed with the goal of promoting the sharing of expert knowledge (Rodríguez-Elias et al, 2004).
- Previous experience: people have greater trust in those sources from which they have previously obtained more “valuable information”. Therefore, a factor that influences the increase or decrease of a source’s reputation is “previous experience”, and this factor can help us to detect valueless sources or knowledge. One problem occurring in organizations is that some employees introduce information which is not particularly useful into a knowledge base with the sole objective of appearing to contribute information, in order to earn points or benefits such as incentives or rewards (Huysman & Wit, 2000). When this happens, the information stored is generally not very valuable and will probably never be used.

ICINCO 2007 - International Conference on Informatics in Control, Automation and Robotics

Taking all these factors into account, we have defined our own “concept of reputation” (see Figure 2).

Figure 2: Reputation module.

That is, the reputation of agent $s$ about agent $i$ is a collective measure defined by the previously described factors and computed as follows:

$$R_{si} = w_e E_i + w_p P_i + \frac{\sum_{i=1}^{n} QC_i}{n}$$

where $R_{si}$ denotes the reputation value that agent $s$ has in agent $i$ (each agent in the community has an opinion about each of the other members of the community).

$w_e$ and $w_p$ are weights with which the reputation value can be adjusted to the needs of the organization.

$E_i$ is the value of expertise, which is calculated according to the degree of experience that a person has in a domain.

$P_i$ is the value assigned to the position of a person. This position is defined by the organizational chart of the enterprise, so a value that determines the hierarchical level within the organization can be assigned to each level of the chart.

In addition, previous experience must also be calculated. To accomplish this, it is assumed that when an agent A consults information from another agent B, agent A should evaluate how useful this information was. This value is called $QC_i$ (Quality of i’s Contribution). To obtain the average value of an agent’s contributions, we calculate the sum of all the values assigned to its contributions and divide it by their total. In the formula, $n$ represents the total number of evaluated contributions.

In this way, an agent can obtain a value related to

the reputation of another agent and decide to what

degree it is going to consider the information

obtained from this agent.
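The reputation formula can be sketched as follows. This is a hedged illustration under stated assumptions: the `Opinion` record, the assumption that $E_i$, $P_i$ and the QC values lie in [0, 1], the weight values, and the choice to treat the previous-experience term as 0 when no contribution has yet been evaluated ($n = 0$, where the formula itself is undefined) are ours, not the paper's.

```python
from dataclasses import dataclass, field
from statistics import mean
from typing import List


@dataclass
class Opinion:
    """What agent s knows about agent i (values assumed to lie in [0, 1])."""
    expertise: float                               # E_i
    position: float                                # P_i, from the organizational chart
    qc: List[float] = field(default_factory=list)  # QC values s gave to i's contributions


def reputation(op: Opinion, w_e: float, w_p: float) -> float:
    """R_si = w_e * E_i + w_p * P_i + (sum of QC) / n."""
    # Assumption: with no evaluated contributions (n = 0) the last term is 0.
    previous_experience = mean(op.qc) if op.qc else 0.0
    return w_e * op.expertise + w_p * op.position + previous_experience


luis = Opinion(expertise=0.8, position=0.5, qc=[0.6, 0.9, 0.3])
hierarchical = reputation(luis, w_e=0.5, w_p=0.2)   # position counts
collaborative = reputation(luis, w_e=0.5, w_p=0.0)  # position ignored
```

Setting `w_p` to 0 mimics a setting such as collaborative learning, where status matters less. As in the formula, the result is not normalised, so with these inputs `hierarchical` is about 1.1.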

The second type of agent within a community is the Manager Agent (represented in black in Figure 1), which is in charge of managing and controlling its community. To do so, this type of agent carries out the following tasks:

- Registering an agent in its community. The Manager Agent thus controls how many agents there are and how long each agent has stayed in that community.
- Registering the frequency of contribution of each agent. This value is updated every time an agent makes a contribution to the community.
- Registering the number of times that an agent gives feedback about other agents’ knowledge. For instance, when an agent “A” uses information from another agent “B”, agent A should evaluate this information. Monitoring how often an agent gives feedback about other agents’ information helps to detect whether agents contribute to the creation of knowledge flows in the community, since it is as important for an agent to contribute new information as it is for another agent to contribute by evaluating the relevance or importance of this information.
- Registering the interactions between agents. Every time an agent evaluates the contributions of another agent, the Manager Agent registers this interaction. This interaction is only in one direction: if agent A consults information from agent B and evaluates it, the Manager records that A knows B, but that does not mean that B knows A, because B does not obtain any information about A.
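The four Manager Agent tasks amount to simple bookkeeping, sketched below in Python. The data layout, the method names and the explicit `now` timestamps (in arbitrary time units) are hypothetical. The paper does not say how contribution frequency is measured, so here it is taken as contributions per unit of time since joining. The `knows` table is directed, matching the one-way interactions described above.

```python
from collections import defaultdict
from typing import Dict, Set


class ManagerAgent:
    """Bookkeeping for the four Manager Agent tasks (hypothetical data layout)."""

    def __init__(self) -> None:
        self.joined_at: Dict[str, float] = {}                   # task 1: membership
        self.contributions: Dict[str, int] = defaultdict(int)   # task 2: contributions
        self.feedback_given: Dict[str, int] = defaultdict(int)  # task 3: evaluations given
        self.knows: Dict[str, Set[str]] = defaultdict(set)      # task 4: evaluator -> authors

    def register(self, agent: str, now: float) -> None:
        self.joined_at[agent] = now

    def member_count(self) -> int:
        return len(self.joined_at)

    def stay(self, agent: str, now: float) -> float:
        return now - self.joined_at[agent]

    def contribution_rate(self, agent: str, now: float) -> float:
        """Contributions per unit of time since the agent joined."""
        elapsed = self.stay(agent, now)
        return self.contributions[agent] / elapsed if elapsed > 0 else 0.0

    def record_contribution(self, agent: str) -> None:
        self.contributions[agent] += 1

    def record_evaluation(self, evaluator: str, author: str) -> None:
        self.feedback_given[evaluator] += 1
        # One direction only: the evaluator now knows the author, not vice versa.
        self.knows[evaluator].add(author)

    def who_knows(self, author: str) -> Set[str]:
        return {a for a, known in self.knows.items() if author in known}


m = ManagerAgent()
m.register("A", now=0.0)
m.register("B", now=0.0)
m.record_contribution("B")
m.record_evaluation("A", "B")
```

After A evaluates B's contribution, `m.who_knows("B")` contains A, while nobody is yet recorded as knowing A.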

Besides these agents there is another agent in charge of initiating new agents and creating new communities. This agent has two main roles: the “creator” role, assumed when a User Agent requests the creation of a new community, and the “initiator” role, assumed when the system is first launched. This agent, which is not included in any of the communities, is called the Creator Agent and is located in the centre of Figure 1.

3 USING THE ARCHITECTURE

In order to evaluate the architecture and to gradually improve it, we have developed a prototype system into which people can introduce documents and where these documents can be consulted by other people. The goal of this prototype is for software agents to help employees discover information that may be useful to them, thus reducing the information overload that employees often face and strengthening the use of knowledge bases in companies. In addition, we try to prevent employees from storing valueless information in the knowledge base.

The main feature of this system is that when a person has searched for knowledge in a community and used the knowledge obtained, that person then has to evaluate it in order to indicate:

- whether the knowledge was useful;
- how closely it was related to the topic of the search (for instance, a lot, not too much, not at all).

User Agents use this information to construct a “trust net”. Thus, these agents can know how reliable the contributions of each person are and also what each contribution was. This information is very important to companies since, by consulting it, it is possible to know which employees are the best contributors. Further information can be derived from it, for instance who should be consulted when there is a problem in a specific domain. This matters because we agree with Ackoff (1989) that knowledge management systems should encourage dialogue between individuals rather than simply directing them to repositories.
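A trust net built from this feedback could look like the following sketch. The paper gives only the qualitative answers (useful or not; related a lot, not too much, not at all), so the numeric `RELATEDNESS` scale and the way `qc_from_feedback` combines the two answers into a QC value are hypothetical. The net is a directed graph from evaluators to authors, and `best_contributors` ranks authors by the mean QC they have received.

```python
from collections import defaultdict
from statistics import mean
from typing import Dict, List

# Hypothetical numeric scale; the paper only gives the three labels.
RELATEDNESS = {"a lot": 1.0, "not too much": 0.5, "not at all": 0.0}


def qc_from_feedback(useful: bool, related: str) -> float:
    """Hypothetical QC: the mean of usefulness (0 or 1) and relatedness."""
    return (float(useful) + RELATEDNESS[related]) / 2


class TrustNet:
    """Directed, weighted graph: evaluator -> author -> QC values."""

    def __init__(self) -> None:
        self.edges: Dict[str, Dict[str, List[float]]] = defaultdict(lambda: defaultdict(list))

    def add_feedback(self, evaluator: str, author: str, useful: bool, related: str) -> None:
        self.edges[evaluator][author].append(qc_from_feedback(useful, related))

    def best_contributors(self, k: int = 3) -> List[str]:
        """Authors ranked by the mean QC they received from all evaluators."""
        received: Dict[str, List[float]] = defaultdict(list)
        for by_author in self.edges.values():
            for author, qcs in by_author.items():
                received[author].extend(qcs)
        return sorted(received, key=lambda a: mean(received[a]), reverse=True)[:k]


net = TrustNet()
net.add_feedback("ana", "luis", useful=True, related="a lot")
net.add_feedback("ana", "eva", useful=False, related="not at all")
net.add_feedback("carlos", "eva", useful=True, related="not too much")
```

Here `net.best_contributors(2)` ranks luis (mean QC 1.0) ahead of eva (mean QC 0.375), which is the kind of answer a company could use to decide whom to consult.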

In the next sub-sections, we describe different

situations or scenarios to show how the agents work

in this prototype. These situations will represent

some general community rules and will show the

main interactions between agents in a community.

3.1 A New User Arrives in a Community

This situation arises when a user wants to join a new community. To do this, the person chooses a community from all the available communities. The Manager Agent of that community then asks whether any agent knows the new user, in order to set a trust value for this person. This process is similar to the socialization stage of the SECI model (Nonaka & Takeuchi, 1995), in which each participant shares its experience about a topic, in this case about another person.

When a user wants to join a community in which no member knows anything about him/her, the reputation value assigned to the user in the new community is calculated on the basis of the reputation assigned in other communities where the user is or was a member. To do this, the user’s User Agent (called j, for instance) asks the manager of each community of which the user was previously a member to consult each agent that knows him/her, with the goal of calculating the average value of his/her reputation ($R_j$). This is calculated as:

$$R_j = \frac{\sum_{i=1}^{n} R_{ij}}{n}$$

where $n$ is the number of agents who know j and $R_{ij}$ is the value of j’s reputation in the eyes of i. If j is known in several communities, the average of the resulting $R_j$ values is calculated. The User Agent then presents this reputation value to the manager of the community to which it is “applying” (much as a person presents his/her curriculum vitae when applying to join a company). If the user is new to the system (s/he has never been in a community), this user is assigned a “new” label so that the situation can be identified.

Once the Community Manager has obtained a reputation value for j, j is added to the community member list.
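The admission procedure of this subsection can be sketched as below. It follows the order given in the text: opinions of members of the target community who already know j, then the per-community $R_j$ averaged over previous communities, then the “new” label. Two details are assumptions: the plain average used in the first step (the text only says that a trust value is set), and treating a previous community in which nobody knows j as contributing no value.

```python
from statistics import mean
from typing import Dict, List, Union

NEW = "new"  # label for a user who has never been in any community


def average_opinion(opinions: Dict[str, float]) -> float:
    """R_j = (sum over i of R_ij) / n, where the n agents i know j."""
    return sum(opinions.values()) / len(opinions)


def admission_reputation(
    known_in_target: Dict[str, float],
    previous_communities: List[Dict[str, float]],
) -> Union[float, str]:
    # Step 1 (assumption: plain average): members of the target community who know j.
    if known_in_target:
        return average_opinion(known_in_target)
    # Step 2: R_j in every previous community where someone knows j, then averaged.
    per_community = [average_opinion(o) for o in previous_communities if o]
    if per_community:
        return mean(per_community)
    # Step 3: no usable history, so j is labelled as new.
    return NEW
```

For example, with opinions {0.8, 0.6} in one previous community and {0.4} in another, the per-community values are 0.7 and 0.4 and the value presented to the new community is 0.55.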

3.2 Using Community Documents and Updating Reputation Values

People can search for documents in every community in which they are registered. When a person searches for a document relating to a topic, his/her User Agent asks the Manager Agent which documents are related to the search. The Manager Agent answers with a list of documents, which the User Agent sorts according to the reputation values of the authors; that is, the contributions whose authors have the best reputations in this agent’s eyes are listed first. When the user does not know a contributor, the User Agent asks the Manager Agent which members of the community know that contributor, so that it can consult the opinions those agents have about the contributor and thus take advantage of their experience. To do this, the Manager consults its interaction table and responds with a list of the members who know the contributor; the User Agent then contacts each of them. If nobody knows the contributors, the documents are listed taking the contributors’ expertise and positions into account. In this way the User Agent can detect how valuable a document is, thus saving employees’ time, since they do not need to review all documents related to a topic but only those considered most relevant by the members of the community, or by the person him/herself according to previous experience with the document or its authors.
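The ordering logic described above can be sketched as a single ranking function. The parameter names, the `(title, author)` document tuples, and the fallback that combines expertise and position with equal weights of 0.5 are hypothetical. The paper also does not say how scores from the three cases (own experience, other members' opinions, expertise and position) are put on a common scale; this sketch simply compares them directly.

```python
from statistics import mean
from typing import Callable, Dict, List, Set, Tuple

Document = Tuple[str, str]  # (title, author)


def rank_documents(
    docs: List[Document],
    own: Dict[str, float],                   # this agent's reputation of authors it knows
    known_by: Callable[[str], Set[str]],     # Manager's interaction-table lookup
    opinions: Dict[Tuple[str, str], float],  # (member, author) -> member's reputation of author
    fallback: Callable[[str], float],        # score from expertise and position only
) -> List[Document]:
    def score(author: str) -> float:
        if author in own:                    # 1. own previous experience
            return own[author]
        others = [opinions[(m, author)] for m in known_by(author) if (m, author) in opinions]
        if others:                           # 2. opinions of members who know the author
            return mean(others)
        return fallback(author)              # 3. nobody knows the author

    return sorted(docs, key=lambda d: score(d[1]), reverse=True)


table = {"eva": {"carlos", "ana"}}
expertise = {"marta": 0.2}
position = {"marta": 0.4}
ranked = rank_documents(
    docs=[("d1", "luis"), ("d2", "eva"), ("d3", "marta")],
    own={"luis": 0.4},
    known_by=lambda a: table.get(a, set()),
    opinions={("carlos", "eva"): 0.9, ("ana", "eva"): 0.7},
    fallback=lambda a: 0.5 * expertise[a] + 0.5 * position[a],
)
```

With these inputs eva's document comes first (other members' opinions average about 0.8), followed by luis (own experience, 0.4) and marta (expertise and position only, about 0.3).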

Once the person has chosen a document, his/her

User Agent adds this document to its own document

list (list of consulted documents), and if the author

of the document is not known by the person because

it is the first time that s/he has worked with him/her,

then the Community Manager adds this relation to

the interaction table explained in section 2. This step

is very important since when the person evaluates

the document consulted, his/her User Agent will be

able to assign a QC for this document.
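The bookkeeping that happens when a document is chosen and later evaluated can be sketched as follows. The class and method names are hypothetical, and grouping QC values by author (so that they can feed the previous-experience term of the reputation formula) is our assumption about how the values are stored.

```python
from typing import Dict, List, Set


class CommunityManager:
    """Keeps the interaction table: consumer -> authors it has worked with."""

    def __init__(self) -> None:
        self.interactions: Dict[str, Set[str]] = {}

    def knows(self, consumer: str, author: str) -> bool:
        return author in self.interactions.get(consumer, set())

    def add_relation(self, consumer: str, author: str) -> None:
        self.interactions.setdefault(consumer, set()).add(author)


class UserAgent:
    def __init__(self, name: str, manager: CommunityManager) -> None:
        self.name = name
        self.manager = manager
        self.consulted: Dict[str, str] = {}             # consulted document -> author
        self.qc_by_author: Dict[str, List[float]] = {}  # QC values, grouped by author

    def choose(self, doc: str, author: str) -> None:
        self.consulted[doc] = author
        if not self.manager.knows(self.name, author):  # first time with this author
            self.manager.add_relation(self.name, author)

    def evaluate(self, doc: str, qc: float) -> None:
        if doc not in self.consulted:
            raise ValueError(f"{doc!r} was never consulted")
        self.qc_by_author.setdefault(self.consulted[doc], []).append(qc)


manager = CommunityManager()
ana = UserAgent("ana", manager)
ana.choose("guide.pdf", "luis")
ana.evaluate("guide.pdf", 0.9)
```

Recording the relation when the document is chosen means the QC assigned later always has a known author to attach to.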

4 RELATED WORK

This research can be compared with other proposals that use agents and trust communities in knowledge exchange. In the literature we found several trust and reputation mechanisms that have been proposed for large open environments, for instance e-commerce (Zachaira et al, 1999) and peer-to-peer computing (Wang & Vassileva, 2003).

There are other works on trust and reputation (Griffiths, 2005; Yu & Singh, 2000). Here we mention only those most closely related to our approach.

In (Schulz et al, 2003), the authors propose a framework for exchanging knowledge in a mobile environment. They use delegate agents that are spread out into the network of a mobile community and use trust information, acting as a virtual presence of a mobile user. Another interesting work is (Wang & Vassileva, 2003), in which the authors describe a trust and reputation mechanism that allows peers to discover partners who meet their individual requirements, through their own experience and by sharing experiences with other peers with similar preferences. This work is focused on peer-to-peer environments.

Barber and Kim present a multi-agent belief revision algorithm based on belief networks (Barber & Kim, 2004). In their model the agent is able to evaluate incoming information, to generate a consistent knowledge base, and to avoid fraudulent information from unreliable or deceptive information sources or agents. This work has a goal similar to ours; however, the means of attaining it are different. Barber and Kim define reputation as a probability measure, since each information source is assigned a reputation value between 0 and 1. Moreover, every time a source sends knowledge, it should indicate the certainty factor it has in that knowledge. Our focus is very different, since it is the receiver who evaluates the relevance of a piece of knowledge, rather than the provider as in Barber and Kim’s proposal.

Therefore, the main difference between our work

and previous works is that we take into account

factors that might influence the level of trust that a

person has in a piece of knowledge and in a

knowledge source. Moreover, we present a general

formula to define the reputation concept. This

formula can be adapted, by modifying the value of

the weights, to different settings. This is an

important difference from other works which are

focused on particular domains.

5 CONCLUSIONS

Communities of practice have the potential to improve organizational performance and facilitate community work. For this reason we consider it important to model people’s behaviour within communities, with the purpose of imitating the exchange of information that takes place in those communities within companies. We therefore attempt to encourage the sharing of information in organizations through knowledge bases. To do this we have designed a multi-agent architecture in which the artificial agents use parameters similar to those used by humans to evaluate knowledge and knowledge sources. These factors are reputation, expertise and, of course, previous experience.

This approach offers several advantages to organizations, as it allows them to identify the expertise of their employees and to measure the quality of their contributions. We therefore expect a greater flow of communication between employees, which should in turn increase their knowledge.

In addition, this work has illustrated how the architecture can be used to implement a prototype. The main functionalities of the prototype are:

- Controlling whether employees try to introduce valueless knowledge with the goal of obtaining some benefit such as points, incentives, rewards, etc.
- Providing the most suitable knowledge for employees’ queries, by using the reputation and relevance values that the agents have obtained from previous experiences.
- Detecting the expertise of the employees within an organization.

All these advantages give organizations better control over their knowledge base, which will contain more trustworthy knowledge; consequently, employees are expected to be more willing to use it.

ACKNOWLEDGEMENTS

This work is partially supported by the ENIGMAS (PIB-05-058) and MECENAS (PBI06-0024) projects, Junta de Comunidades de Castilla-La Mancha, Consejería de Educación y Ciencia, both in Spain. It is also supported by the ESFINGE project (TIN2006-15175-C05-05), Ministerio de Educación y Ciencia (Dirección General de Investigación)/Fondos Europeos de Desarrollo Regional (FEDER), in Spain.

REFERENCES

Ackoff, R., 1989, From Data to Wisdom. Journal of Applied Systems Analysis, Vol. 16, pp: 3-9.

Barber, K., Kim, J., 2004, Belief Revision Process Based on Trust: Simulation Experiments. In 4th Workshop on Deception, Fraud and Trust in Agent Societies, Montreal, Canada.

Crowder, R., Hughes, G., Hall, W., 2002, Approaches to Locating Expertise Using Corporate Knowledge. International Journal of Intelligent Systems in Accounting, Finance & Management, Vol. 11, pp: 185-200.

Desouza, K., Awazu, Y., Baloh, P., 2006, Managing Knowledge in Global Software Development Efforts: Issues and Practices. IEEE Software, pp: 30-37.

Dillenbourg, P., 1999, Introduction: What Do You Mean By "Collaborative Learning"? In Dillenbourg (Ed.), Collaborative Learning: Cognitive and Computational Approaches. Elsevier Science.

Griffiths, N., 2005, Task Delegation Using Experience-Based Multi-Dimensional Trust. AAMAS'05, pp: 489-496.

Huysman, M., Wit, D., 2000, Knowledge Sharing in Practice. Kluwer Academic Publishers, Dordrecht.

Kan, G., 1999, Gnutella. Peer-to-Peer: Harnessing the Power of Disruptive Technologies. O'Reilly, pp: 94-122.

Nonaka, I., Takeuchi, H., 1995, The Knowledge-Creating Company: How Japanese Companies Create the Dynamics of Innovation. Oxford University Press.

Rodríguez-Elias, O., Martínez-García, A., Favela, J., Vizcaíno, A., Piattini, M., 2004, Understanding and Supporting Knowledge Flows in a Community of Software Developers. LNCS 3198, Springer, pp: 52-66.

Schaeffer, J., Lake, R., Lu, P., Bryant, M., 1996, CHINOOK: The World Man-Machine Checkers Champion. AI Magazine, Vol. 17, pp: 21-29.

Schulz, S., Herrmann, K., Kalcklosch, R., Schowotzer, T., 2003, Trust-Based Agent-Mediated Knowledge Exchange for Ubiquitous Peer Networks. AMKM, LNAI 2926, pp: 89-106.

Wang, Y., Vassileva, J., 2003, Trust and Reputation Model in Peer-to-Peer Networks. Proceedings of IEEE Conference on P2P Computing.

Wasserman, S., Glaskiewics, J., 1994, Advances in Social Network Analysis. Sage Publications.

Wenger, E., 1998, Communities of Practice: Learning, Meaning, and Identity. Cambridge, U.K.: Cambridge University Press.

Yu, B., Singh, M., 2000, A Social Mechanism of Reputation Management in Electronic Communities. Cooperative Information Agents, CIA-2000, pp: 154-165.

Zachaira, G., Moukas, A., Maes, P., 1999, Collaborative Reputation Mechanisms in Electronic Marketplaces. 32nd Annual Hawaii International Conference on System Science (HICSS-32).
