LEARNING AND RETENTION OF LEARNING IN AN ONLINE POSTGRADUATE MODULE ON COPYRIGHT LAW AND INTELLECTUAL PROPERTY

Carmel McNaught¹, Paul Lam¹, Shirley Leung² and Kin-Fai Cheng¹

¹ Centre for Learning Enhancement And Research

² University Library System

The Chinese University of Hong Kong, Hong Kong

Keywords: Specific facts, schematic knowledge, schema theory, knowledge retention, e-learning, copyright laws,

intellectual property.

Abstract: Various forms of knowledge can be distinguished. Low-level learning focuses on recognition and

remembering facts. Higher level learning of conceptual knowledge requires the development of some form

of mental structural map. Further, application of knowledge requires learners to put theories and concepts

into use in authentic and novel situations. This study concerns learning at a number of levels. The context is

a fully online module on copyright laws and intellectual property, designed as an introductory course for all

postgraduates at a university in Hong Kong. The paper also explores whether the knowledge learnt through

the web-based medium was retained after three to six months. Findings ascertained the effectiveness of the

new medium, not only in delivering facts but also for assisting the learning of higher level knowledge. As

expected, the performance of students declined in the delayed post-tests but not to any alarming degree.

Retention of factual knowledge, however, was much lower than retention of other forms of knowledge. This

perhaps suggests that the role of e-learning, just as in face-to-face classes, should focus on concepts and the

applied knowledge, rather than on memorization of facts alone.

1 LEVELS OF COGNITIVE REASONING

Learning involves different levels of cognitive

activities. Levels of cognitive reasoning are often

described by Bloom’s taxonomy (Bloom, 1956),

namely: knowledge, comprehension, application,

analysis, synthesis and evaluation. The knowledge

level of the original taxonomy is concerned with the

retention of information. Comprehension refers to

the understanding of this retained knowledge. At the

application level, learners apply the theories and

concepts to practical situations. At the analysis

cognitive level, learners are able to break down the

knowledge and concepts in a scenario into their sub-

components. The last two levels of cognitive

reasoning are synthesis and evaluation. Synthesis

focuses on the assembly and putting together of the

learned knowledge in new ways. Evaluation is

concerned with learners making value judgments

about what they have learnt and produced.

There has been a great deal of debate over

the ‘knowledge’ level which is somewhat

problematic because the word knowledge, in

common usage, has a broad range of meanings. The

revised Bloom’s taxonomy (Anderson & Krathwohl,

2001; Krathwohl, 2002) tackles this challenge and

contains two dimensions instead of one – a

knowledge dimension and a cognitive process

dimension. The knowledge dimension now clearly

classifies and distinguishes between forms of

knowledge: factual knowledge, conceptual

knowledge, procedural knowledge and

metacognitive knowledge (Table 1). Anderson and

Krathwohl (2001) described factual knowledge as

“knowledge of discrete, isolated content elements”;

conceptual knowledge as involving “more complex,

organized knowledge forms”; procedural knowledge

as “knowledge of how to do something”; and

metacognitive knowledge as involving “knowledge

about cognition in general as well as awareness of

one’s own cognition” (p. 27).


McNaught C., Lam P., Leung S. and Cheng K. (2007).

LEARNING AND RETENTION OF LEARNING IN AN ONLINE POSTGRADUATE MODULE ON COPYRIGHT LAW AND INTELLECTUAL PROPERTY.

In Proceedings of the Third International Conference on Web Information Systems and Technologies - Society, e-Business and e-Government /

e-Learning, pages 273-280

DOI: 10.5220/0001265802730280

Copyright © SciTePress

As educators we are interested in students

acquiring conceptual, procedural and metacognitive

knowledge, as well as factual knowledge. It is

somewhat paradoxical that formal education has

often overemphasized factual knowledge in

beginning classes, calling such knowledge

‘foundation knowledge’, and then expected students

to make the transition to other forms of knowledge

with little overt support. For example, Conway,

Gardiner, Perfect, Anderson and Cohen (1997)

remarked that students who achieve higher grades on

essay-based examinations show conceptual

organization of knowledge while simple listings of

facts and concepts are correlated with low grades.

The development of mental structural maps of

knowledge (Novak & Gowin, 1984) and

“accompanying schematization of knowledge is

what educators surely hope to occur in their

students” (Herbert & Burt, 2001, p. 633).

2 LEARNING AND KNOWLEDGE RETENTION IN E-SETTINGS

The use of the web as a strategy to deliver learning

activities has been of growing importance as

technology advances. Research studies have been

carried out to evaluate the effectiveness of e-learning

in achieving learning outcomes. While many studies

claimed that students learn well in the new media,

most of these studies did not differentiate or

compare the forms of knowledge being investigated.

This paper compares and contrasts students’

learning on four levels of knowledge in an online

course. The first objective is to investigate whether

e-learning can support the acquisition of higher

order knowledge. For e-learning to be an effective

learning tool, it has to be able to facilitate

acquisition of knowledge at the higher levels.

The second objective of the study is to explore

how well the knowledge acquired at these various

levels is retained.

The study of knowledge retention in non-web

settings in general tends to show that the retention

rate for specific facts falls behind that for a broader

base of more general facts and concepts (Semb &

Ellis, 1994). For example, Conway, Cohen and

Stanhope (1991) studied very long-term knowledge

retention by monitoring the performance of 373

students over ten years on tasks related to a

cognitive psychology course. They found that “the

decline in retention of concepts is less rapid than the

decline in the retention of names” (p. 401).

This finding supports Neisser’s (1984)

schema theory that describes how conceptual

knowledge is developed when students construct

linkages between specific facts in their minds. Such

linkages or webs or maps are called knowledge

schema. They are more resistant to forgetting than

isolated pieces of detailed knowledge. There might

be exceptional cases, though, if the specific facts are

involved in very personal contexts. Herbert and Burt

(2004) suggested that context-rich learning

environments (such as problem-based tasks or tasks

with connections to learners’ own lives) allow the

building of a rich episodic memory of specific facts

and this improves the motivation of learners to pay

attention to learning. Learners are “more likely to

then know the material and schematize their

knowledge of the domain” (p. 87).

Relatively little is known, however, about

learning and knowledge retention patterns in e-

settings. Yildirim, Ozden and Aksu (2001)

compared the learning of 15 students in a

hypermedia learning environment with that of 12

students in a traditional situation. They found that

students learnt and retained knowledge better in the

computer-based environment, not only in the lower-

level domains that were about memorization of

declarative knowledge, but also in the higher

domains of conceptual and procedural knowledge.

Bell, Fonarow, Hays and Mangione (2000),

however, in their study with 162 medical students,

found that “the multimedia textbook system did not

significantly improve the amount learned or learning

efficiency compared with printed materials …

knowledge scores decreased significantly after 11 to

22 months” (p. 942). The problem with many of

these studies is that the design of the online module

does not provide any advantage over the printed

version from the students’ perspective (Reeves &

Hedberg, 2003). We were conscious of the need to

design for a learning advantage when deciding to

use a fully online module.

The present paper aims to provide further

information about knowledge retention in an online

course through analysing student performance levels

on a fully online introductory course for

postgraduate students on copyright law and

intellectual property. The course was structured to

include learning activities on four levels: (1) specific

facts, (2) more general facts and rules, (3) concepts,

and (4) applied knowledge. These are related to the

revised Bloom’s taxonomy in Table 1. For the fourth

category, we will use the term ‘applied knowledge’

but, as shown in Table 1, the tasks in this category

WEBIST 2007 - International Conference on Web Information Systems and Technologies


require some analytic skills. These categorizations

are only indicative.

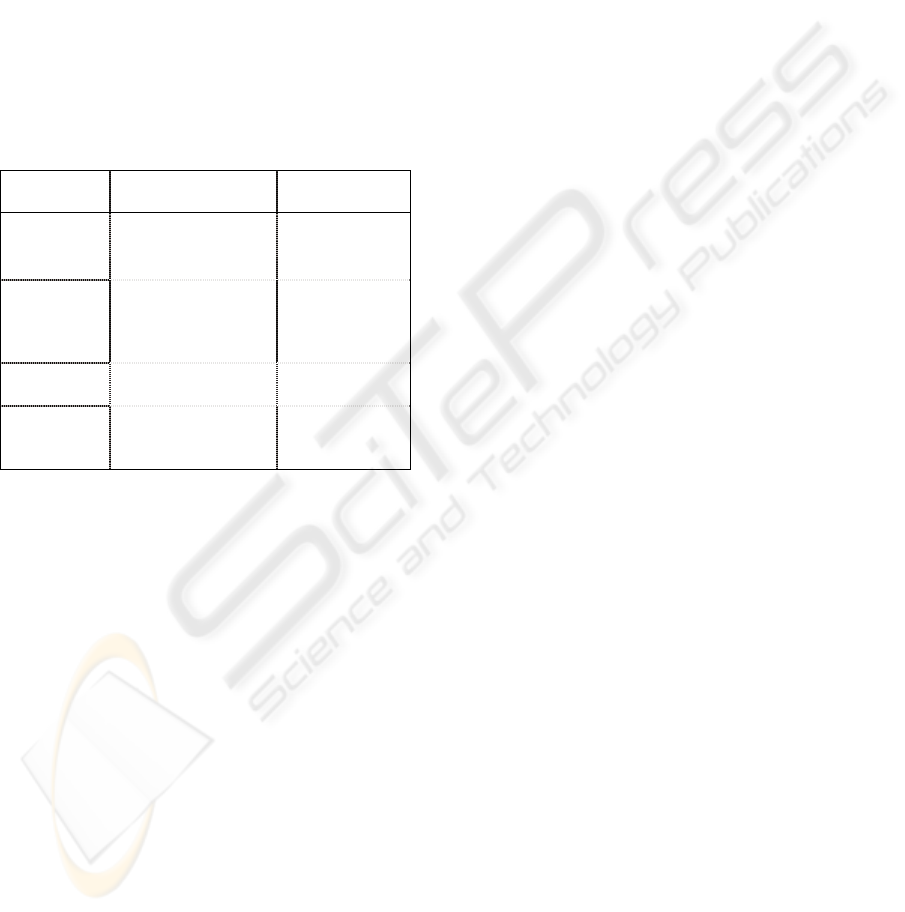

Table 1: Knowledge levels in the online module and the revised Bloom’s taxonomy.

Cognitive process (columns): Rem, Und, App, Anal, Eval, Cre

Knowledge (rows), with the module levels placed in each row:
Factual: (1), (2)
Conceptual: (3)
Procedural: (4)
Metacognitive: none

Rem=Remember Und=Understand App=Apply Anal=Analyze Eval=Evaluate Cre=Create

3 THE CONTEXT OF THE STUDY

The topic of avoiding infringement of copyright law

is central to research ethics and includes issues of

honesty, credit-sharing and plagiarism. As the

computer is used increasingly to disseminate

information, teaching professionals also must have

knowledge of the applications of the law to this

developing technology (Van-Draska, 2003). There is

a growing need to introduce copyright policies into

university libraries (Gould, Lipinski & Buchanan,

2005). It is vital to equip students with knowledge

about copyright and intellectual property, and to

warn them against plagiarism. This is

particularly true in Hong Kong as the issue of

intellectual property and copyright law in research

and study-related environments is currently

receiving a great deal of attention in the academic

community. At present the Government is revising

the ordinances and laws governing copyright in

Hong Kong. These laws are being interpreted and re-

interpreted by many different people and interest

groups. The need to educate students properly on

these issues is thus particularly important.

The University Library of The Chinese

University of Hong Kong (CUHK) teaches all

postgraduate students a module titled ‘Observing

intellectual property and copyright law during

research’. This course is a compulsory module for

research postgraduate students; students need to

complete this online module before their graduation.

As the situation is very fluid in Hong Kong, the

course has been designed to tackle the issue in as

many practical ways as possible. Whatever the laws

in Hong Kong are, it must be clearly stated that

many of the issues surrounding intellectual property

in academic circles are universal, and not just

applicable in Hong Kong. There are two major

components of the online module: the learning

resources and the test.

The module was originally conducted solely

through face-to-face workshops organized by the

Library. The problem with this method was that

teaching was restricted to designated times and

places. In recent years, the Library has been

investigating the potential benefits of putting the

course online, similar to the University of Illinois at

Chicago (Rockman, 2004). An online version of this

particular topic was deemed to be an appropriate

strategy for the following reasons:

The course is an introductory course: Most of

the study materials are easy to understand. This

type of content is good for self-learning through

students’ individual reading and consideration

of the online materials.

Students are from various disciplines:

Gathering students physically for a lesson has

always been difficult, as they have conflicting

timetables. With e-learning methods, learning

can take place on-demand, and students can be

given greater control over their learning than

before (DeRouin, Fritzsche & Salas, 2004).

Online learning might be effective: Our reading

provided sufficient examples of studies where

higher level learning seemed to be supported by

an online environment. For example, Iverson,

Colky and Cyboran (2005) compared

introductory courses held in the online format

and traditional format. Their findings suggested

that online learners can gain significantly

higher levels of enjoyment and significantly

stronger intent to transfer their learning to other

contexts.

With effect from 2004–05, the format of this

compulsory module was changed from lecture-based

to online-based. The online version of the course

was run for the second time in the academic year 2005–06.

The online module is offered four times a year

from September to April each year and it is offered

under the ‘Research’ section of CUHK’s Improving

Postgraduate Learning programme (http://www.cuhk.edu.hk/clear/library/booklet/29.htm). At the

time of writing, the module had been run eight

times.


4 ONLINE MODULE

4.1 Procedure

All postgraduate students are entitled to enrol in the

course. In fact, they are required to take (and pass)

the course before their graduation. There are four

cohorts each year, and each cohort lasts for about two

months. Eligible students may enrol themselves into

any one of the cohorts; they then have to complete

the course and the course-end test within the two-

month duration.

Figure 1: Flow of learning activities of the online course.

The flow of the course is illustrated in Figure 1. In

order to complete the course, students are required to

complete the following four tasks in sequence within

the course period:

1) read ALL the course materials;

2) take the online exam;

3) fill in the online survey (not viewable until

the exam is submitted); and

4) view their own exam results (not viewable

until the survey is submitted).

Students can attempt the summative test only after

they have completed reading all the course

materials.

4.2 Course Content

The learning resources consist of 28 pages of course

content that focuses on five areas:

copyright around the world and copyright in Hong

Kong cover specific facts such as history and the

enactment bodies of copyright laws in Hong Kong

and around the world; permitted act for research

and private study in Hong Kong introduces the more

general facts and rules governing the accepted

academic practices; avoiding plagiarism is a

conceptual section as it defines plagiarism and

explains various related concepts; lastly, intellectual

property & copyright focuses on the applied

knowledge by showcasing various real-life

situations and commenting on appropriate practices.

Each page contains easy-to-read materials; some are

linked to PowerPoint slides and/or further readings.

In all, the course gives students a clearer

understanding of the core issues of intellectual

property, copyright law and plagiarism in academic

research. The course provides advice about

compliance in ‘dealing with’ intellectual property

‘fairly’.

4.3 Course-end Test

The summative test consists of 20 questions

randomly selected from a pool of 29 questions. The

pass mark of the test was 10. Students fail the test if

they score 9 or below. If they do so, they need to

retake the course. The test questions followed the

course structure and tested students’ knowledge of

the five themes described above. The questions were

set at different levels of knowledge.
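The test assembly and pass rule described above can be sketched as follows. This is a minimal illustration, not the module's actual implementation: the function names, the numbering of the pool from 1 to 29 and the use of sampling without replacement are assumptions, since the text only states that 20 questions are randomly selected from a pool of 29 and that the pass mark is 10.

```python
import random

POOL_SIZE = 29    # questions in the pool (from the text)
TEST_LENGTH = 20  # questions drawn for each test (from the text)
PASS_MARK = 10    # a score of 9 or below fails (from the text)


def draw_test(rng):
    """Draw TEST_LENGTH distinct question ids from a pool numbered 1..POOL_SIZE.

    Hypothetical helper: the numbering and the without-replacement
    sampling are assumptions made for illustration.
    """
    return sorted(rng.sample(range(1, POOL_SIZE + 1), TEST_LENGTH))


def has_passed(score):
    """Return True when the score reaches the pass mark."""
    return score >= PASS_MARK


# A fixed seed is used only so that the example is repeatable.
paper = draw_test(random.Random(2007))
```

Because each student sees a random subset, two students (or one student at test and retest) will generally answer different sets of questions, which is why results are reported as percentages of attempted questions.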

Specific facts are “facts that referred to details of

specific theories and findings highlighted in the

course” (Conway, Cohen & Stanhope, 1991, p. 398).

They are related to a restricted setting. Example test

questions include “Where was the Convention

signed in the 19th century which protects literary

and artistic works?” and “The Hong Kong

Ordinance on Copyright was substantially revised in

which year?”

General facts and rules are the “more global

aspects of theory” (Conway, Cohen & Stanhope,

1991, p. 398). Rules are general facts in this sense as

they are set procedures that are true in a wider

context. Questions that fall into this category

include: “Printing out any records or articles from

the electronic resources subscribed by the Library

will infringe the copyright; True/ False?” and

“Copying by a person for research is fair dealing if

the copying will result in copies of the same material

being provided to more than one person at the same

time and for the same purpose; True/ False”.

Concepts are explanations and definitions of

theories and ideas, and clarifications of the linkages

between these theories and ideas. They are “highly

familiar, generalized knowledge which students tend

to simply know” (Herbert & Burt, 2004, p. 78). Test

questions in the course concerning concepts include

“Which of the following actions is regarded as

plagiarism?” and “Leaving out some words in a

quoted passage without any indication is plagiarism;

True/ False?”


Lastly, there are questions that required

application of knowledge, and students were asked

to make decisions based on theories and concepts

learnt in highly-specific situations. For example,

the questions include “You want to set up a factory in

Shenzhen to make a black and gold-coloured pen,

and you want to call the pen a ‘MAN BLANK’.

Which of the following would you need to check?”

and “You want to use a photograph of a painting by

Leonardo Da Vinci (1452–1519) in your

dissertation. Who owns the copyright?”

Table 2 illustrates the relationships between the

content themes of the questions and their respective

knowledge levels.

Table 2: Categorization of the exam questions.

Knowledge levels        Content themes                                    Questions
Specific facts          Copyright around the world                        6, 7, 8, 9, 11
                        Copyright in HK                                   5
General facts & rules   Permitted act for research & private study in HK  13, 14, 15, 16, 17, 18, 19, 20, 25, 27, 28, 29, 30
Concepts                Avoiding plagiarism                               12, 21, 22
Applied knowledge       Intellectual property & copyright                 1, 2, 3, 4, 10, 23, 24, 26
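The categorization in Table 2 lends itself to a simple lookup from question number to knowledge level, which is one way per-level percentage scores could be computed. The sketch below is illustrative only: the question numbers are transcribed from Table 2, while the function name and the sample answers are hypothetical and are not data from the study.

```python
# Question ids per knowledge level, transcribed from Table 2.
QUESTIONS_BY_LEVEL = {
    "specific facts": [5, 6, 7, 8, 9, 11],
    "general facts and rules": [13, 14, 15, 16, 17, 18, 19, 20,
                                25, 27, 28, 29, 30],
    "concepts": [12, 21, 22],
    "applied knowledge": [1, 2, 3, 4, 10, 23, 24, 26],
}

# Reverse lookup: question id -> knowledge level.
LEVEL_OF = {q: level for level, qs in QUESTIONS_BY_LEVEL.items() for q in qs}


def percent_correct_by_level(answers):
    """Return the percentage correct per knowledge level.

    answers maps question id -> True/False for the questions a student
    attempted; levels with no attempted question are omitted.
    """
    attempted = {}
    correct = {}
    for question, is_correct in answers.items():
        level = LEVEL_OF[question]
        attempted[level] = attempted.get(level, 0) + 1
        correct[level] = correct.get(level, 0) + (1 if is_correct else 0)
    return {lvl: 100.0 * correct[lvl] / attempted[lvl] for lvl in attempted}


# Hypothetical answers for one student (not data from the study).
example = percent_correct_by_level(
    {5: True, 6: False, 12: True, 1: True, 2: True, 13: False}
)
```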

4.4 Evaluation Strategies

CUHK is a strongly face-to-face university in its

teaching style and e-learning is not used extensively

(McNaught, Lam, Keing & Cheng, 2006). It is

therefore especially important to evaluate

innovations, especially in courses that are conducted

totally online. We devised an evaluation plan which

is composed of multiple evaluation instruments. The

evaluation questions that interested the course

organizers include: accessibility – whether students

can readily access the course; learning – whether

students can learn the concepts of the course

effectively through online means; and retention of

learning – whether the learning is retained.

Concerning accessibility, the research team kept

detailed records on the access and activity logs of

the students’ visits to the various pages on the site

and their attempts at the tests. We will illustrate this

aspect by quoting the logs kept in the eight cohorts

across two academic years (2004–05 and 2005–06).

Regarding students’ learning, the data came from

students’ test scores and their opinions elicited

through surveys conducted in the same eight cohorts

in the academic years 2004–05, and 2005–06. The

surveys collected students’ feedback on how much

they valued the course, and how much they thought

they learnt from the course.

Lastly, regarding retention of knowledge, two

attempts to invite students to take retests were

carried out. During the 2004–05 academic year, the

first trial of this study was carried out. The retest

was launched in June 2005 for students in both

Groups 1 and 2 in the 2004–05 cohort. Group 1

students originally took the online course test in

October 2004 and the original test period of the

Group 2 students was December 2004. Therefore,

there was a time gap of six to eight months between

the first time the students did the test and the retest.

The content of the retest was the same as the original

examination, and consisted of 20 multiple choice

questions randomly selected from a pool of 29

questions.

The retest received a relatively low completion

rate in the first trial: 16.5% (52 did the retest out of

the 315 students who were in either Group 1 or 2

and had taken the original test). Thus, in order to

boost the response rate, a lucky draw prize ($HK500

– ~Euro51 – book coupon) was offered in the second

trial in the 2005–2006 academic year.

The second study was launched in March–April

2006. This time we invited students in Groups 1 and

2 of the 2005–06 cohort to take the retest. The

original test period of the 2005–06 Group 1 was 3–28

October 2005 and that of the Group 2 students was

21 November–16 December 2005. Thus, the time

gap between the exam and the retest ranged from

three to six months. The retest invitation was sent to

those Group 1 and Group 2 students who had taken

the course test. No retest invitation was sent to those

who did not take part in the examination. The

number of students who received the retest invitation

was 387. Reminders were sent twice. In the

end, there were a total of 148 retest participants. The

completion rate for the second trial was 38.2%

(148/387).
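The two completion rates quoted for the retests follow directly from the counts given in the text. The short check below reproduces them; the function name is a hypothetical helper introduced only for this illustration.

```python
def completion_rate(completed, invited):
    """Percentage of invited students who completed the retest, to one decimal."""
    return round(100.0 * completed / invited, 1)


# Counts taken from the text for the two trials.
first_trial = completion_rate(52, 315)    # 2004-05 trial
second_trial = completion_rate(148, 387)  # 2005-06 trial
```

With these counts the first trial comes out at 16.5% and the second at 38.2%, matching the reported figures.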

5 FINDINGS

The online course was readily accessed by students.

For example, in the academic year 2005–06, the 571

students who took the course and finished the online

test had visited the site (recorded by the counter on

the first page of the site) a total of 5,786 times,

meaning that each student on average accessed the

site 10.1 times. The counters on the 28 course


content pages, on the other hand, recorded a total of

119,034 visits. Thus, on average, each student

accessed these pages 208.5 times to prepare for the

course-end test. Most students who registered for the

course actually finished it. A total of 1,278 students

registered for the course in all the eight cohorts, and

among them 1,134 successfully completed the

course-end test. The completion rate was 88.7%.

Overall, students answered 17.8 questions correctly

out of the 20 attempted questions, a percentage score

of 88.9%.

A total of 1,120 students answered the opinion

survey attached to the course-end test (out of the

1,134 students who completed the course; response

rate being 98.8%). The students were assured that

their feedback on the survey would not in any

manner affect their scores on the test. The survey in

general affirmed that the course was

overwhelmingly welcomed by students. For

example, the average score on the question “The

modules achieved the stated objectives” was 4.0 on a

5-point Likert scale in which 1 stands for strongly

disagree and 5 means strongly agree. This is very

high for a compulsory module.

The following sections explore the performance

of a subset of the students (the 200 students who

completed both the test and retest in our two study

trials) in their learning and retention of the

knowledge acquired in the course.

5.1 Learning of Knowledge

The learning outcomes of the students can be gauged

by the performance of the students in their original

course-end tests. The 200 students performed very

well in the original test, achieving a percentage score

of 93.4% among the questions they attempted.

Their scores on each of the knowledge levels

were slightly different, though still very high in

general. They scored, on average, 91.3% correct in

questions that were about specific facts, 93.7% in

the questions about general facts and rules, 97.4% in

questions on concepts, and 93.0% in questions about

applied knowledge. The distribution of the marks is

illustrated in Figure 2. It is also noted that the

performance of the 52 students in the first 2004–05

study trial in general showed the same pattern as that

of the 148 students in the second 2005–06 trial.

One-way ANOVA found that the between-group

differences were statistically significant at the 0.01

level. Post-hoc Scheffe tests were then carried out

which established that the main difference was from

the exceedingly high marks on the concepts

category. The differences between students’

performances on questions related to concepts and

those in questions related to other knowledge

domains were all statistically significant at the 0.05

level.

5.2 Retention of Knowledge

Retention of knowledge was investigated by

comparing the 200 students’ performances in their

original tests and re-tests. Paired-sample t-tests were

used to test for any differences between the mean

scores of the examination and the retest.
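For readers unfamiliar with the procedure, a paired-sample t-test works on the per-student differences between the two scores. The sketch below computes the test statistic with the Python standard library only. The five pairs of scores are hypothetical and are not data from the study, and the function name is an assumption; a statistics package would normally be used to obtain the p-value as well.

```python
import math
import statistics


def paired_t(first, second):
    """Return (t, degrees of freedom) for a paired-sample t-test.

    t = mean(d) / (stdev(d) / sqrt(n)), where d holds the per-student
    differences first[i] - second[i]. The differences must not all be equal.
    """
    if len(first) != len(second) or len(first) < 2:
        raise ValueError("need two equal-length samples with at least two pairs")
    diffs = [a - b for a, b in zip(first, second)]
    n = len(diffs)
    standard_error = statistics.stdev(diffs) / math.sqrt(n)
    return statistics.mean(diffs) / standard_error, n - 1


# Hypothetical percentage scores for five students (not data from the study).
exam = [95.0, 90.0, 100.0, 85.0, 95.0]
retest = [80.0, 75.0, 90.0, 70.0, 75.0]
t_stat, dof = paired_t(exam, retest)
```

The statistic is then compared with the critical value of the t distribution for the given degrees of freedom at the chosen significance level.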

Although the first trial of the study in 2004–05

had a much lower response rate than the second test–

retest study in 2005–06, the two sets of results were

actually very similar.

In 2004–05, students scored on average 94.4% in

their original test while they scored 78.0% in their

postponed retest. In 2005–06, the scores were 93.0%

and 77.9% respectively. Overall, the 200 students

scored 93.4% and 77.9% in their original tests and

retests. The result from the paired-sample t-test

revealed that the differences between these original

test scores and retest scores are statistically

significant at the 0.01 level.
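The overall figures for the 200 students are consistent with a student-weighted average of the two trials, using the group sizes reported earlier (52 and 148). The check below is a sketch under that assumption; because the per-trial means are themselves rounded, it is a consistency check rather than a recomputation from raw data, and the function name is hypothetical.

```python
def pooled_mean(groups):
    """Return the mean across groups, weighted by the number of students.

    groups is a list of (n_students, mean_percent) pairs.
    """
    total = sum(n for n, _ in groups)
    return sum(n * mean for n, mean in groups) / total


# (students, mean %) for the 2004-05 and 2005-06 trials, from the text.
exam_overall = round(pooled_mean([(52, 94.4), (148, 93.0)]), 1)
retest_overall = round(pooled_mean([(52, 78.0), (148, 77.9)]), 1)
```

The pooled values round to 93.4% for the original test and 77.9% for the retest, in line with the overall scores reported.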

It is worth noting that, although the

students’ performance in the retest declined

significantly, it remained

quite reasonable, with an average percentage score

of 77.9%.

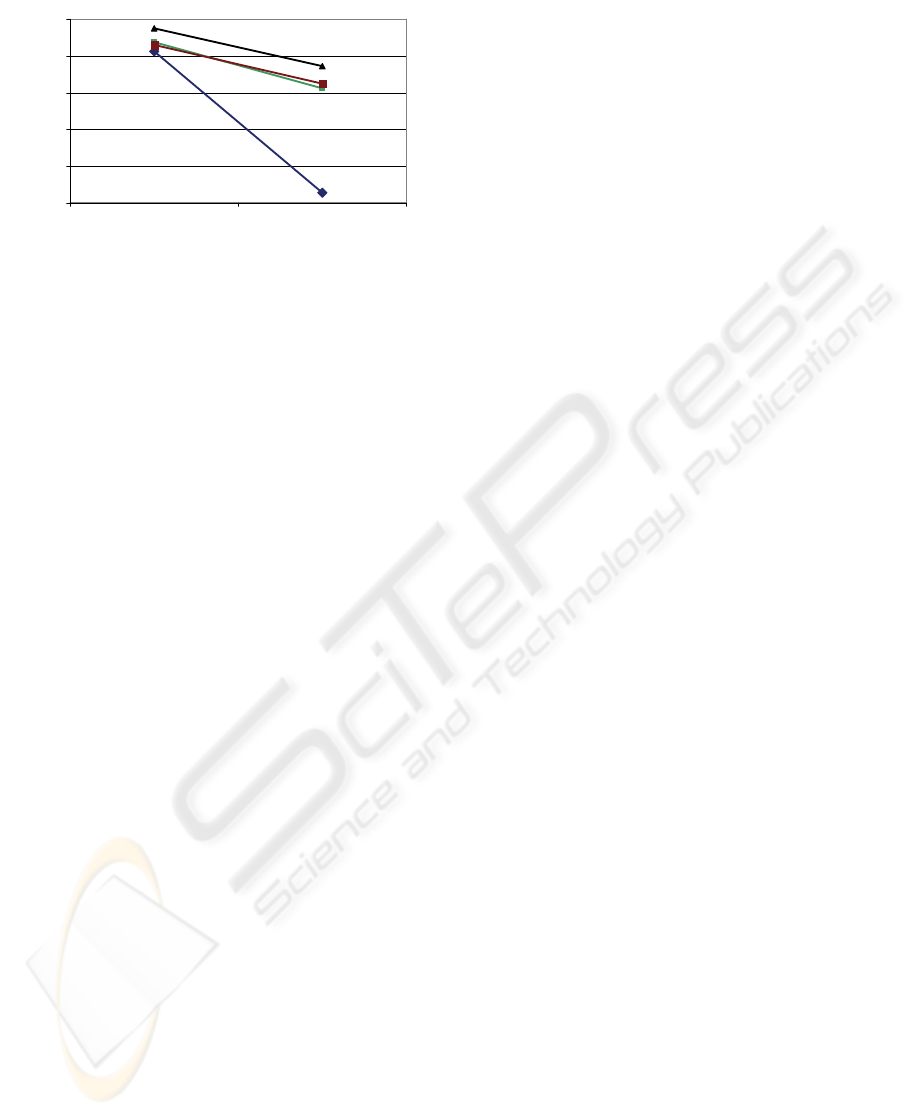

A closer look at the data on the various

knowledge levels revealed the patterns portrayed in

Table 3 and Figure 3.

Table 3: Retention by knowledge domains.

Knowledge domains         Exam    Retest   Diff.
Specific facts            91.3%   52.7%    38.6%
General facts and rules   93.7%   81.2%    12.5%
Concepts                  97.4%   87.1%    10.3%
Applied knowledge         93.0%   82.5%    10.5%

Figure 2: Performance in different knowledge levels. [Chart not reproduced: scores on an axis from 80% to 100% for specific facts, general facts/rules, concepts, application of concepts and overall, with one series each for 04-05 and 05-06.]


Figure 3: Decline in performance by knowledge domains. [Chart not reproduced: percentage correct on an axis from 50% to 100%, exam versus retest, for specific facts, principles/rules, concepts and application of concepts.]

The data show the sharpest decline in performance

on question items that relate to specific facts when

compared with the other knowledge domains. This

was a drop of 38.6 percentage points (91.3% to 52.7%), while the

declines in performance at the other three question

levels were only 12.5, 10.3 and 10.5 percentage points,

respectively. The decline for specific facts was thus more than three times

any of the other declines.
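The comparison of declines can be reproduced from the exam and retest percentages in Table 3. The sketch below recomputes the differences in percentage points and the ratio between the decline for specific facts and the largest of the other declines; the variable names are illustrative.

```python
# (exam %, retest %) per knowledge domain, transcribed from Table 3.
TABLE_3 = {
    "Specific facts": (91.3, 52.7),
    "General facts and rules": (93.7, 81.2),
    "Concepts": (97.4, 87.1),
    "Applied knowledge": (93.0, 82.5),
}

# Decline in percentage points, rounded to one decimal as in the table.
declines = {
    domain: round(exam - retest, 1)
    for domain, (exam, retest) in TABLE_3.items()
}

# Compare the specific-facts decline with the largest of the other declines.
largest_other = max(v for k, v in declines.items() if k != "Specific facts")
ratio = declines["Specific facts"] / largest_other
```

Even against the largest of the other declines (12.5 points for general facts and rules), the ratio is slightly above three.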

6 DISCUSSION

The online module appears to be an effective

learning tool. The scores on the original tests were

all very high, showing that e-learning is good not

only in delivery of facts, but also in explaining

concepts (in fact, students’ scores on the questions

related to concepts were the best), and teaching

applied knowledge.

Students performed worse in the retests

than in the first tests. Compared with the very high

scores in the original test, students’ scores in the

retests were clearly poorer. This drop in scores,

however, is quite expected as time is always

regarded as affecting retention of knowledge. In fact,

students still managed to achieve relatively good

performance in the retests and this shows that e-

learning can have extended effects on students’

learning, contrary perhaps, to the observations of

Bell, Fonarow, Hays and Mangione (2000), who

found material learnt on computer is not retained;

but more or less in line with the position of Yildirim,

Ozden and Aksu (2001) that e-learning can produce

long-term learning. While this small study in no way

solves the ambiguity in the research literature, it

does contribute to our understanding.

While students generally found all categories

easy (above 85% of the answers in all categories

were correct), they found one category increasingly

difficult as time passed. This is the category of

specific facts. Students differed in their retention of

different forms of knowledge. Knowledge of

specific facts tended to drop to a far greater extent

than learnt knowledge in the other domains. The

decrease in scores in this category substantially

exceeded those in the other categories: it dropped

more than 35 percentage points, while the other declines were

between 10 and 13 percentage points. Unrelated facts are difficult to remember

in traditional classroom teaching (Conway, Cohen &

Stanhope, 1991) and we now have evidence that,

although e-learning can be used to disseminate facts,

facts learnt in ‘e-classrooms’ are not retained over

time. There is thus a resemblance between

knowledge retention in the two learning

environments.

Education is concerned with the development of

the higher cognitive reasoning skills rather than

memorization of facts and unrelated concepts. The

findings of this study seem to support the role of

web-assisted teaching as not being limited to

delivery of isolated facts and information. The web

can be effective in facilitating learning at higher

levels. Knowledge of isolated specific facts is not

retained while acquired knowledge concerning more

general rules and concepts, and their applications,

appears to be more worthwhile as the focus of online

materials.

The findings of the study provided timely

feedback to the development team about which

questions in the module to consider for revision and

how we might refocus some of the information in

the module. There have thus been tangible benefits

from the study.

The present study has clear limitations. First,

the delayed retests took place after a relatively short

period of time (three to six months) and so the

retention pattern of these various forms of

knowledge in a more extended period of time is

largely unknown. Nevertheless, many previous

studies have shown that the period immediately after

the learning activity is actually the most critical as

this is when the decline in knowledge retained is

most serious (Bahrick, 1984; Bahrick & Hall, 1991;

Conway, Cohen & Stanhope, 1991). Second, we are

aware of the fact that many factors, such as

individual differences, prior knowledge of learners,

content organization and structure, etc., affect

learning and memory retention (Semb & Ellis, 1994;

Semb, Ellis & Araujo, 1993). The present study on a

single online module utilizing one specific way of

content design is far from being able to make any


general claims about retention of knowledge forms

in e-medium learning environments.

7 CONCLUSION

The study confirms that e-learning can be an

effective tool not only for disseminating facts

but also for explaining concepts and assisting

students in applying knowledge. Specific factual

knowledge is hard to retain. The findings of this

study suggest that the role of e-learning, just as the

role of traditional teaching, should focus on concepts

and applied knowledge rather than on memorization

of facts alone.

The data from this study come from one course

alone and so must be treated as indicative. Further

studies in a range of discipline areas are warranted.

REFERENCES

Anderson, L. W., & Krathwohl, D. R. (2001). A taxonomy

for learning, teaching, and assessing: A revision of

Bloom’s taxonomy of educational objectives. Boston:

Allyn & Bacon.

Bahrick, H. P. (1984). Semantic memory content in

permastore: Fifty years of memory for Spanish learned

in school. Journal of Experimental Psychology:

General, 113(1), 1–29.

Bahrick, H. P., & Hall, L. K. (1991). Lifetime

maintenance of high school mathematics content.

Journal of Experimental Psychology: General, 120(1),

20–33.

Bell, D. S., Fonarow, G. C., Hays, R. D., & Mangione, C.

M. (2000). Self-study from web-based and printed

guideline materials: A randomized, controlled trial

among resident physicians. Annals of Internal

Medicine, 132(12), 938–946.

Bloom, B. S. (Ed.). (1956). Taxonomy of educational

objectives: The classification of educational goals:

Handbook I, Cognitive domain. New York: Longman.

Conway, M. A., Cohen, G., & Stanhope, N. (1991). On the

very long-term retention of knowledge acquired

through formal education: Twelve years of cognitive

psychology. Journal of Experimental Psychology:

General, 120(4), 395–409.

Conway, M. A., Gardiner, J. M., Perfect, T. J., Anderson,

S. J., & Cohen, G. M. (1997). Changes in memory

awareness during learning: The acquisition of

knowledge by psychology undergraduates. Journal of

Experimental Psychology: General, 126, 393–413.

DeRouin, R. E., Fritzsche, B. A., & Salas, E. (2004).

Optimizing e-learning: Research-based guidelines for

learner-controlled training. Human Resource

Management, 43(2–3), 147–162.

Gould, T. H. P., Lipinski, T. A., & Buchanan, E. A. (2005).

Copyright policies and the deciphering of fair use in

the creation of reserves at university libraries. The

Journal of Academic Librarianship, 31(3), 182–197.

Herbert, D. M. B., & Burt, J. S. (2001). Memory

awareness and schematization: Learning in the

university context. Applied Cognitive Psychology,

15(6), 613–637.

Herbert, D. M. B., & Burt, J. S. (2004). What do students

remember? Episodic memory and the development of

schematization. Applied Cognitive Psychology, 18(1),

77–88.

Iverson, K. M., Colky, D. L., & Cyboran, V. (2005). E-

learning takes the lead: An empirical investigation of

learner differences in online and classroom delivery.

Performance Improvement Quarterly, 18(4), 5–18.

Krathwohl, D. R. (2002). A revision of Bloom’s

taxonomy: An overview. Theory into Practice, 41(4),

212–218.

McNaught, C., Lam, P., Keing, C., & Cheng, K. F. (2006).

Improving eLearning support and infrastructure: An

evidence-based approach. In J. O’Donoghue (Ed.),

Technology supported learning and teaching: A staff

perspective (pp. 70–89). Hershey, PA: Information

Science Publishing.

Neisser, U. (1984). Interpreting Harry Bahrick’s

discovery: What confers immunity against forgetting?

Journal of Experimental Psychology: General, 113,

32–35.

Novak, J. D., & Gowin, D. B. (1984). Learning how to

learn. Cambridge, UK: Cambridge University Press.

Reeves, T. C., & Hedberg, J. G. (2003). Interactive

learning systems evaluation. Englewood Cliffs, NJ:

Educational Technology Publications.

Rockman, H. B. (2004). An Internet delivered course:

Intellectual property law for engineers and scientists.

Frontiers in Education, 34, S1B/22–S1B/27.

Semb, G. B., & Ellis, J. A. (1994). Knowledge taught in

school: What is remembered? Review of Educational

Research, 64(2), 253–286.

Semb, G. B., Ellis, J. A., & Araujo, J. (1993). Long-term

memory for knowledge learned in school. Journal of

Educational Psychology, 85(2), 305–316.

Van-Draska, M. S. (2003). Copyright in the digital

classroom. Journal of Allied Health, 32(3), 185–188.

Yildirim, Z., Ozden, M. Y., & Aksu, M. (2001).

Comparison of hypermedia learning and traditional

instruction on knowledge acquisition and retention.

The Journal of Educational Research, 94(4), 207–214.

WEBIST 2007 - International Conference on Web Information Systems and Technologies
