Impact of First Person Avatar Representation in Assembly Simulations on Perceived Presence and Acceptance

Jennifer Bradeᵃ, Alexander Kögelᵇ, Christian Fuchsᶜ and Philipp Klimantᵈ

Professorship for Machine Tool Design and Forming Technology, Chemnitz University of Technology,

Reichenhainer Str. 70, Chemnitz, Germany

Keywords: Presence, User Studies, Acceptance, Virtual Reality, Avatars, Immersion.

Abstract: This article reports the impact of three different avatar representations on perceived presence and acceptance

during an assembly task. The conducted experiment focuses not on the perceived virtual body ownership, but

on the limited visibility of the virtual body during a task at a workbench – meaning the view on hands and

forearms. The initial question is whether a detailed avatar, which is time-consuming to develop, is needed during a
virtual assembly task or whether the impact on presence and acceptance caused by the kind of avatar visualisation
is negligible. Therefore, three different kinds of avatar representations were used to examine the influence of

the avatar on the perceived presence and acceptance. The results of the experiment show that there are no

significant differences between the three kinds of avatar representations. All three avatars reach high values

for presence and acceptance. Therefore, a partial-body representation is sufficient to obtain a high presence

and acceptance level in scenarios that focus on manual tasks on or above a workbench.

1 INTRODUCTION

Advances in the area of virtual reality (VR)
extend the field of potential applications, including
mechanical engineering. Because of these advances and the
benefits of virtual environments, such as resource
savings, non-destructive testing of machine failures
and improved safety for the user, VR scenarios
have become a popular tool for training and education. To

ensure the transferability of the virtually learned skills

to the real tasks, it is necessary to simulate the task as
realistically as possible and to reach a high level of

presence in these environments. Presence is described

as the “sense of being in the virtual environment” and

is seen as a cognitive state that results from

information processing of stimuli in the environment

from various senses (Slater and Wilbur, 1997). The

sense of presence is affected by several factors, and
many studies have been devoted to evaluating the degree of
influence these factors exert on it. Schuemie et al.

(2001) listed the results of several researchers in this

field. Weiss et al. (2006) divided the factors that
influence the sensation of presence into three
categories: characteristics of the user, the VR system
and the VR task (see Figure 1).

ᵃ https://orcid.org/0000-0002-6219-6729

ᵇ https://orcid.org/0000-0002-3358-3316

ᶜ https://orcid.org/0000-0003-2835-0638

ᵈ https://orcid.org/0000-0001-7819-8473

Figure 1: Factors that influence the sensation of presence in

the virtual environment by Weiss et al. (2006).

Brade, J., Kögel, A., Fuchs, C. and Klimant, P.

Impact of First Person Avatar Representation in Assembly Simulations on Perceived Presence and Acceptance.

DOI: 10.5220/0008878700170024

In Proceedings of the 15th International Joint Conference on Computer Vision, Imaging and Computer Graphics Theory and Applications (VISIGRAPP 2020) - Volume 1: GRAPP, pages 17-24

ISBN: 978-989-758-402-2; ISSN: 2184-4321

Copyright © 2022 by SCITEPRESS – Science and Technology Publications, Lda. All rights reserved

The VR system characteristics also include the
way in which the user is represented within the virtual
environment (Nash et al., 2000). These
representations are called avatars and are the user’s
embodied interface in VR. Such avatars convey the
feeling of direct interaction with the virtual
environment and are the direct extension of the user
in VR (Waltemate et al., 2018). The design of the

artificial body affects the so-called “sense of
embodiment”. Kilteni et al. (2012) define the
sense of embodiment (SoE) toward a body B as the
sense that emerges when B’s properties are processed
as if they were the properties of one’s own biological
body. Embodiment can be achieved at different
levels, which were described by Kilteni et al. (2012)
and affect the level of presence (Jung and Hughes,
2016; Slater and Steed, 2000; Tanaka et al., 2015). To
reach a strong sense of presence, it is necessary to
consider the influence of a virtual body on the user’s
perception. Because training and education scenarios
in VR focus on processes and tasks, the aspect of the
sense of embodiment is mostly neglected. This

also stems from the fact that the creation of a virtual

avatar leads to more effort during the creation of the

VR scenarios and that the view on the virtual body

during assembly or maintenance tasks is mostly

limited to the hands and the arms of the user.

Nevertheless, to accomplish a high level of presence,

a virtual body should be implemented in training and

education scenarios as well. The question is how
detailed and anatomically realistic this body should
look and behave in order to reach a high level of
presence while keeping the modelling effort as low as
possible.

This paper presents a study that compared

different avatars in a virtual assembly task to evaluate

the influence of the representation on perceived

presence and acceptance of the scenarios.

2 RELATED WORK

Multiple studies on the topic of presence have shown

that presence is greatly affected by general

embodiment (Jung and Hughes, 2016; Slater and

Steed, 2000; Tanaka et al., 2015) and general

immersion (Slater, 1999). Moreover, using a self-

avatar in immersive virtual reality environments can

positively influence the perceived sense of presence

and help with perceptual judgements and interaction

tasks in these virtual worlds (Slater et al., 1995).

Even the user’s ability to perform purely

cognitive tasks can be improved by providing tracked

self-avatars, as it has been shown that their existence

in a scene appears to reduce cognitive load of the user

during certain tasks (Steed et al., 2016).

It is noteworthy that such virtual self-avatars have

been shown to have a bigger impact on presence

during tasks resembling the real world – like

locomotion – than while using non-realistic

interaction methods, like flying through the virtual

world (Slater et al., 1995).

The majority of studies regarding presence and

virtual embodiment has been performed outside of the

first person perspective, using virtual mirror scenarios

(Waltemate et al., 2018), mannequins (Petkova and

Ehrsson, 2008) or out-of-body experiences

(Lenggenhager et al., 2007), often to overcome the

problem of poor visibility of the avatar representation

(Waltemate et al., 2018). This stands in contrast to the
findings of subsequent studies showing that using a

first person perspective is “essential for experiencing

the sense of ownership over the virtual body” (Maselli

and Slater, 2013) and to the idea that the increased

immersion of a head mounted display (HMD)

compared to a CAVE system increases both virtual

embodiment and agency, as well as presence itself

(Waltemate et al., 2018).

There are several top-down factors affecting the
conceptual interpretation of the virtual body parts the
user sees and controls (Waltemate et al., 2018). One

of the most important aspects of creating a strong

sense of ownership over the virtual limbs is the

synchrony between visual and proprioceptive

information perceived by the user of the VR system

(Sanchez-Vives et al., 2010). This effect is so strong

that some research showed that colocation alone

could create a basic illusion of embodiment even

without full-body motion tracking (Maselli and

Slater, 2013).

Further research has shown, though, that motion
fidelity plays a vital role in strengthening the
believability of self-avatars, even more so than visual
fidelity (Lok et al., 2003). This is also reflected in
studies regarding avatars representing other people in
the user’s virtual environment. There, even cartoonish-looking
animated avatars showed benefits over non-animated
avatars consisting of basic shapes (Gerhard
et al., 2001), and an avatar consisting of only a
tracked head and hands was rated better in terms of
copresence and behavioural interdependence
than a full body avatar with predefined
animations (Heidicker et al., 2017). It has been noted

that not being able to represent the user’s movements,

due to miscalibration or other factors, might lead to a

break in perceived presence (Slater and Steed, 2000)

as it has been shown that violating anatomical

constraints – like limbs shown in impossible

postures – breaks the full body ownership illusion

(Ehrsson et al., 2004; Tsakiris and Haggard, 2005).

This is thought to be one of the reasons why
commercially available software for current home-user
HMD systems usually does not include a full self-avatar
and only presents virtual hands or even just the
objects in the user’s hands (Steed et al., 2016).

GRAPP 2020 - 15th International Conference on Computer Graphics Theory and Applications

Argelaguet et al. (2016) showed that simplified virtual hands
can offer benefits as well. Their study used
hazardous-looking virtual objects that had to be
avoided during simple tasks, such as picking and
placing objects or placing the virtual hands in a
certain spot. Simplified hands led to faster and more
accurate interactions as well as to a greater sense of
agency than realistic hands with realistic arms, which
in turn provided the strongest sense of ownership
compared to the simpler representations, at least
until a certain degree of familiarisation with the
virtual environment had occurred. Blockage of
vision when using the more realistic self-avatar and
tracking issues were stated as the most probable
reasons for these findings (Argelaguet et al., 2016).

The uncanny valley effect (Mori et al.,
2012) is also hypothesized as one reason for a finding
by Lugrin et al. (2015a) that virtual body ownership
was slightly lower for avatars with higher human
resemblance than for robot or cartoon-like avatars.

3 EXPERIMENTAL METHOD

As described in the related work section, previous

work compared the influence of avatar

representations on different factors affecting the

sensation of presence. But the question remains how

much avatar representations are contributing to these

factors, when the VR scenarios used are not focused

primarily on experiencing the virtual avatar alone, but

more on the view participants have during tasks

related to training scenarios in the field of mechanical

engineering. Therefore, we decided to compare three
different avatar representations to answer the research
question of whether different avatar representations
differ in their presence and acceptance scores in VR
scenarios focussed on manual tasks at workstation tables
(where participants primarily see the hands and parts
of the arms of the virtual avatar).

3.1 Participants

Thirty-four participants (22 female and 12 male) with a mean
age of 24.38 years (SD = 3.61) completed the experiment.
All participants were students or employees of the
university and received financial compensation for
their participation. All participants had normal or
corrected-to-normal vision. Eleven participants reported
no prior experience with VR, 13 had experienced VR
once or twice and 10 had used VR three or more
times before. The test took 40 minutes in total, of
which the participants spent 18 minutes within the
virtual environment. The order in which the
different avatars were presented was randomized. All participants
took part in the study voluntarily and were allowed
to abort the experiment at any time.

3.2 Virtual Reality Setup and Avatars

The experiment took place in a room measuring
4.10 x 4.35 meters with a tracking space of approximately
16 square meters. The participants wore an HTC
Vive HMD, which used a “Lighthouse” tracking
system (version 1) to track the participant’s position.

To study the influence of the avatar representations,

three different forms of avatars were developed. The

first consists only of two floating human hands,

terminating at the wrist (hereinafter called

“hands_only”). These follow the movements of the

Vive controllers according to their tracking data.

Button inputs are visualized by animations displaying

gripping or pointing motions of the hands.

The other two avatars consist of different full-body
manikins, shown in Figure 2.

Figure 2: Full-body manikins – on the left the lowpoly

version and on the right the highpoly version.

Both are anatomically correct and rigged to a

human-inspired animation skeleton to allow realistic

movements. They differ in their visual design: one
shows very flat shading with little detail in textures
(hereinafter called “full_body_lowpoly”), while the other is
designed more realistically with highly detailed skin
and cloth fabric (hereinafter called
“full_body_highpoly”). Both male and female

versions of the models were provided.

Besides the aforementioned hand animations, the
full body avatars feature an inverse kinematics system
enabling runtime animation of the whole body based
on the transformations of the tracked VR hardware.
Using the Unity plugin Final IK, targets for the
transformations of the body’s end effectors (the
hands, feet and head) can be set, forcing
appropriate movements of the untracked body parts.

Head tracking was established by accessing the

transformation of the HMD itself. Likewise, the

hands were tracked using the Vive controllers and the

feet by standalone Vive trackers fixed to the user’s

actual feet by Velcro straps. The local
coordinate systems of the individual hardware devices were aligned with
the user through offsets in both position and
rotation.
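The alignment step can be illustrated with a small sketch. This is not the authors' Unity implementation: it assumes, for simplicity, that the rotational offset is a rotation about the vertical axis only, and the function name `apply_offset` as well as all numeric values are hypothetical.

```python
import math


def apply_offset(position, yaw_deg, pos_offset, yaw_offset_deg):
    """Return a tracked pose corrected by a positional and rotational offset.

    position       -- (x, y, z) reported by the device, with y pointing up
    yaw_deg        -- device heading about the vertical axis, in degrees
    pos_offset     -- (x, y, z) offset expressed in the device's local frame
    yaw_offset_deg -- rotational offset about the vertical axis, in degrees
    """
    yaw = math.radians(yaw_deg)
    ox, oy, oz = pos_offset
    # Rotate the local offset into world space (rotation about the y axis).
    wx = ox * math.cos(yaw) + oz * math.sin(yaw)
    wz = -ox * math.sin(yaw) + oz * math.cos(yaw)
    x, y, z = position
    corrected_position = (x + wx, y + oy, z + wz)
    corrected_yaw = (yaw_deg + yaw_offset_deg) % 360.0
    return corrected_position, corrected_yaw


# Made-up example: shift a foot tracker down and forward, and turn it by 15 degrees.
foot_position, foot_yaw = apply_offset((0.0, 0.2, 0.0), 0.0, (0.0, -0.1, 0.05), 15.0)
print(foot_position, foot_yaw)
```

A full implementation would use complete 3D rotations (for example quaternions) rather than a single heading angle; the sketch only shows how a fixed offset is expressed in the device frame and then applied to every tracked pose.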

To study the influence of the avatar representation

during assembly tasks, we focused on the

representation of the hands and arms during the task.

The participants were not explicitly instructed to take

a closer look at the full body of the avatar but could

do so at any time.

Figures 3 to 5 show the participants’ views
of the three avatars during the tasks.

In the experiment, the participants had to
assemble a toy truck using equipment on two
workstations that were placed in a workshop-style

basement area. One workstation was an assembly

table with a height-adjustable table top and swivel

arms. The second workstation was a table with a drill

press mounted to it. Both workstations were equipped

with a virtual monitor on which the assembly steps

were explained.

Figure 3: Participant’s view of the “hands_only” avatar
during the assembly task.

Figure 4: Participant’s view of the “full_body_lowpoly”
avatar during the assembly task.

Figure 5: Participant’s view of the “full_body_highpoly”
avatar during the assembly task.

For each kind of avatar representation, the
participants had to complete the same tasks. First, they
had to grab a car chassis and a wheel out of the
containers in the swivel arms. Then they had to move
to the drill press to drill a hole in the wheel: after they
had put the wheel under the drill press, they
switched on the machine and moved the feed lever to
the drill position. This procedure was repeated for all
four wheels. Afterwards, the participants pre-assembled
the wheels on the chassis by snapping them
onto the axles and moved back to the assembly table.
Here they grabbed four screws out of the small load
carriers and put them in the right position on the
wheels. Then they took the screwdriver and screwed
all four screws into the axles, securing the wheels.
The last step was to clip the truck bed onto the chassis.

For moving in the virtual room, the participants

used real walking in the tracking space.

3.3 Methods

A within-subject study with the kind of avatar
representation as the independent variable was
conducted. The study started with the participation
information and the data protection declaration as
well as a short general questionnaire about previous
experience with VR. Afterwards, the instructor briefly
explained the Vive trackers and attached them to the
participant’s feet. Before starting the test scenario, the
participants completed a quick tutorial, which showed
them how to move in the virtual environment and how
to pick things up. Then they performed the assembly task
with the avatar representations in randomized order.
After finishing the assembly task with one avatar,
they took off the HMD and filled out the
questionnaires. They then performed the assembly task
with the next avatar representation and repeated
this procedure until all three representations had been used.
During the task, the participants read
the instructions for the assembly on two virtual
screens behind the tables, which displayed the necessary
information regarding the order of the assembly steps.
All participants completed the tasks for all three
avatar representations successfully. The completion
time was not a main focus of the study.
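The paper states only that the order of the avatar representations was randomized. As an illustration, the following sketch shows one common way to do this in a within-subject design: block randomization over all six possible orders. This scheme, the function name `assign_orders` and the seed are assumptions, not the authors' documented procedure.

```python
import itertools
import random

AVATARS = ("hands_only", "full_body_lowpoly", "full_body_highpoly")


def assign_orders(n_participants, seed=0):
    """Assign each participant one of the 3! = 6 possible condition orders."""
    rng = random.Random(seed)
    all_orders = list(itertools.permutations(AVATARS))
    orders = []
    while len(orders) < n_participants:
        block = all_orders[:]
        rng.shuffle(block)  # use every order once before reusing any
        orders.extend(block)
    return orders[:n_participants]


orders = assign_orders(34)
for participant, order in enumerate(orders[:3], start=1):
    print(participant, order)
```

Cycling through all six orders before repeating keeps the orders roughly balanced across participants, which limits carry-over effects between conditions.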

To answer the research question, the presence and
acceptance factors were evaluated with post-test
questionnaires. The perceived presence was assessed
with a shortened version of the ITC-SOPI (Lessiter
et al., 2001), which included only 12 instead of 44
items, each rated on a five-point Likert scale. The
ITC-SOPI contains four factors, each of which was
measured by its three top-loading items.

Sense of physical space: indicates “a sense of
physical placement in the mediated environment, and
interaction with and control over parts of the
mediated environment” (Lessiter et al., 2001).

Engagement: includes the “user’s involvement
and interest in the content of the displayed
environment, and their general enjoyment of the
media experience” (Lessiter et al., 2001).

Ecological validity: describes the believability and
the realism of the content as well as the naturalness of
the environment (Lessiter et al., 2001).

Negative effects: summarizes “adverse
physiological reactions” (Mania and Chalmers, 2004)
to virtual environments, e.g. motion sickness or
dizziness.
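To make the scoring of the shortened questionnaire concrete, the sketch below averages the three item ratings per factor. It assumes that factor scores are item means. The item-to-factor mapping is hypothetical, because the paper does not list which items were used, and the example ratings are made up.

```python
from statistics import mean

# Hypothetical item-to-factor mapping: the source does not list the
# selected items, so they are simply numbered 1..12 here.
FACTOR_ITEMS = {
    "sense_of_physical_space": (1, 2, 3),
    "engagement": (4, 5, 6),
    "ecological_validity": (7, 8, 9),
    "negative_effects": (10, 11, 12),
}


def score_factors(responses):
    """Average the three item ratings (1..5) belonging to each factor."""
    if len(responses) != 12:
        raise ValueError("expected 12 item ratings")
    if any(not 1 <= r <= 5 for r in responses):
        raise ValueError("ratings must lie on the five-point scale 1..5")
    return {
        factor: mean(responses[i - 1] for i in items)
        for factor, items in FACTOR_ITEMS.items()
    }


example = [5, 4, 5, 4, 4, 5, 3, 4, 4, 1, 2, 1]  # made-up ratings
print(score_factors(example))
```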

For the assessment of the acceptance of the avatar

representation, we used the acceptance scale of Van

Der Laan et al. (1997). This scale contains nine Likert

items, which refer to the two dimensions usefulness

and satisfaction.

Based on previous work described in Section 2,

we defined the following hypotheses:

H1: A partial-body avatar reaches better presence
values for the factors sense of physical space,
ecological validity and negative effects. Previous
studies show that simplified virtual hands lead to
better controllability (Argelaguet et al., 2016), and it
has also been shown that failures in the representation of
the user’s movements lead to a reduced sense of
presence and embodiment (Ehrsson et al., 2004;
Slater and Steed, 2000; Tsakiris and Haggard, 2005).
It is expected that the “hands_only” avatar reaches
significantly higher ecological validity values than
the more error-prone full body manikins.
Additionally, the occasional inability of
the full body avatars to perfectly represent the user’s
movements due to tracking and animation constraints
could lead to additional negative effects, because the
failures in the representation of the limbs could be
experienced as unpleasant.

H2: A partial-body avatar reaches higher acceptance
values due to the possible uncanny valley effect (Mori
et al., 2012) and the finding by Lugrin et al. (2015a)
that a less realistic avatar is supposedly more
acceptable.

H3: A higher-fidelity full body manikin reaches a
higher ecological validity than a full body
representation with less detail in shading,
geometry and textures.

4 RESULTS

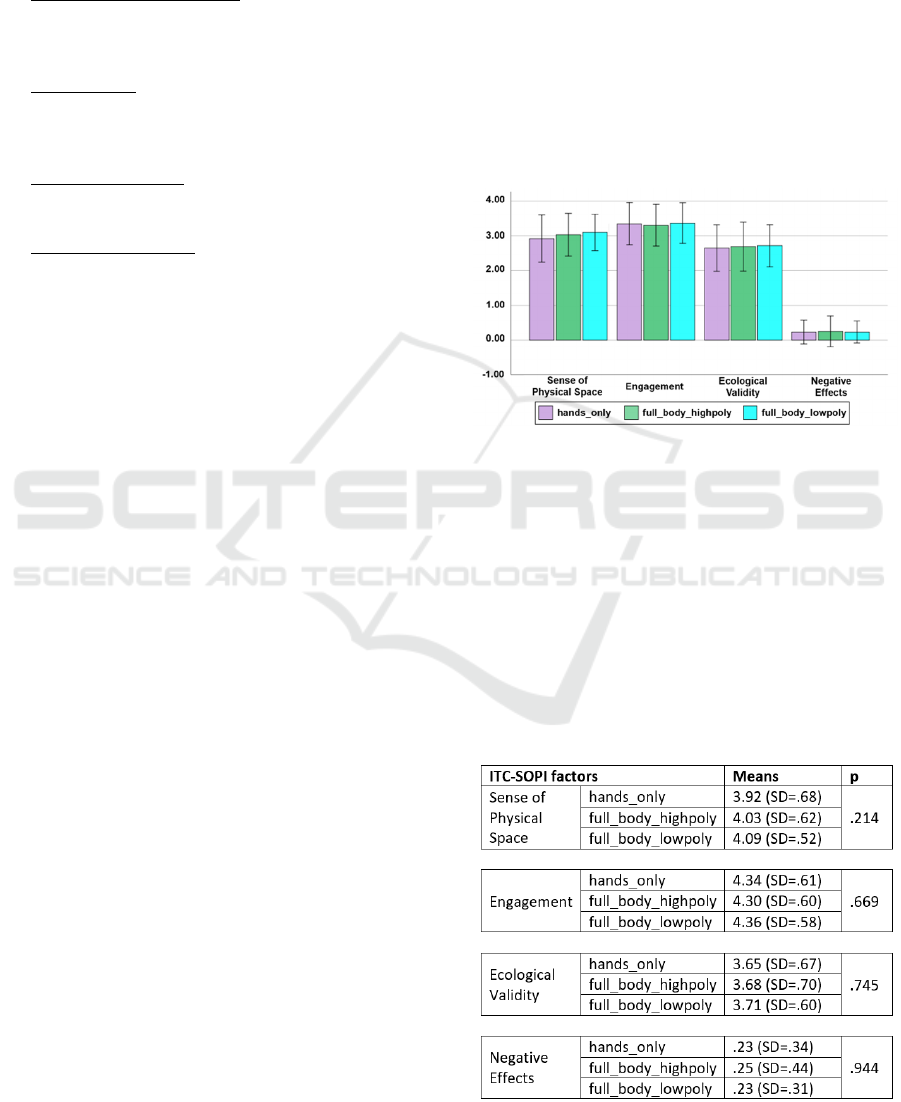

To evaluate the influence of different avatar
representations during an assembly task, we
compared the results of the presence factors of the
ITC-SOPI between the different representations.
Figure 6 shows bar charts of the means and
standard deviations of the presence factors for all
three kinds of avatars.

Figure 6: Bar charts of the means of the presence factors for

the three avatar representations.

Table 1 shows the means and standard deviations of the
presence factors. A Shapiro-Wilk test
showed that the presence factors are not normally
distributed. Therefore, we analysed the data with a
Friedman test for paired samples at the 5%
significance level. The results are also presented in
Table 1. There are no significant effects of the kind
of avatar representation on the ITC-SOPI scores.
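For readers unfamiliar with the Friedman test, the following sketch computes the textbook statistic for three repeated conditions. The paper does not state which software was used, so this is an illustration rather than the authors' analysis. It applies no tie correction, whereas statistics packages typically do, so values can differ when ratings are tied. The example scores are made up.

```python
import math


def average_ranks(values):
    """Rank values from 1..k, giving tied values their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1  # mean of the 1-based positions i+1 .. j+1
        for pos in range(i, j + 1):
            ranks[order[pos]] = avg
        i = j + 1
    return ranks


def friedman_test(rows):
    """Friedman chi-square for n participants x 3 conditions.

    Returns (statistic, p_value). The closed-form p-value exp(-Q/2) is the
    chi-square tail for 2 degrees of freedom, i.e. exactly three conditions.
    """
    n, k = len(rows), 3
    if any(len(row) != k for row in rows):
        raise ValueError("each row needs exactly three scores")
    rank_sums = [0.0] * k
    for row in rows:
        for j, r in enumerate(average_ranks(row)):
            rank_sums[j] += r
    q = 12.0 / (n * k * (k + 1)) * sum(s * s for s in rank_sums) - 3.0 * n * (k + 1)
    return q, math.exp(-q / 2.0)


# Made-up factor scores: one row per participant, one column per avatar.
scores = [(4, 4, 5), (3, 4, 4), (5, 5, 5), (4, 3, 4), (4, 5, 4)]
statistic, p_value = friedman_test(scores)
print(statistic, p_value, p_value < 0.05)
```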

Table 1: Means, (standard deviations) and p-values of the

ITC-SOPI factors for the three avatar representations.


To test whether there were significant differences
between each pair of avatar
representations in the ITC-SOPI factors, we
conducted a Wilcoxon signed-rank test for paired
samples at the 5% significance level. No significant
differences between the kinds of avatar representation
were found for the ITC-SOPI factors. The results of
the significance tests are shown in Table 2.
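The pairwise comparison can be sketched as follows. This is a simplified illustration, not the authors' analysis: it uses the normal approximation without tie or continuity correction, which is rough for small samples, and all ratings shown are made up.

```python
import itertools
import math


def wilcoxon_signed_rank(x, y):
    """Two-sided Wilcoxon signed-rank test using the normal approximation.

    Zero differences are dropped. Returns (W, p_value), where W is the
    smaller of the positive and negative rank sums.
    """
    diffs = [a - b for a, b in zip(x, y) if a != b]
    n = len(diffs)
    if n == 0:
        return 0.0, 1.0
    # Rank the absolute differences, averaging ranks over ties.
    order = sorted(range(n), key=lambda i: abs(diffs[i]))
    ranks = [0.0] * n
    i = 0
    while i < n:
        j = i
        while j + 1 < n and abs(diffs[order[j + 1]]) == abs(diffs[order[i]]):
            j += 1
        for pos in range(i, j + 1):
            ranks[order[pos]] = (i + j) / 2 + 1
        i = j + 1
    w_plus = sum(r for r, d in zip(ranks, diffs) if d > 0)
    w_minus = sum(r for r, d in zip(ranks, diffs) if d < 0)
    w = min(w_plus, w_minus)
    mean_w = n * (n + 1) / 4
    sd_w = math.sqrt(n * (n + 1) * (2 * n + 1) / 24)
    z = (w - mean_w) / sd_w
    p = math.erfc(abs(z) / math.sqrt(2))  # two-sided normal tail
    return w, min(p, 1.0)


# Made-up ratings per avatar, one entry per participant.
ratings = {
    "hands_only": [5, 4, 3, 5, 4, 4],
    "full_body_lowpoly": [4, 4, 1, 3, 5, 4],
    "full_body_highpoly": [5, 3, 2, 4, 4, 5],
}
for a, b in itertools.combinations(ratings, 2):
    print(a, b, wilcoxon_signed_rank(ratings[a], ratings[b]))
```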

Table 2: Results of the Wilcoxon signed-rank test for the

ITC-SOPI factors.

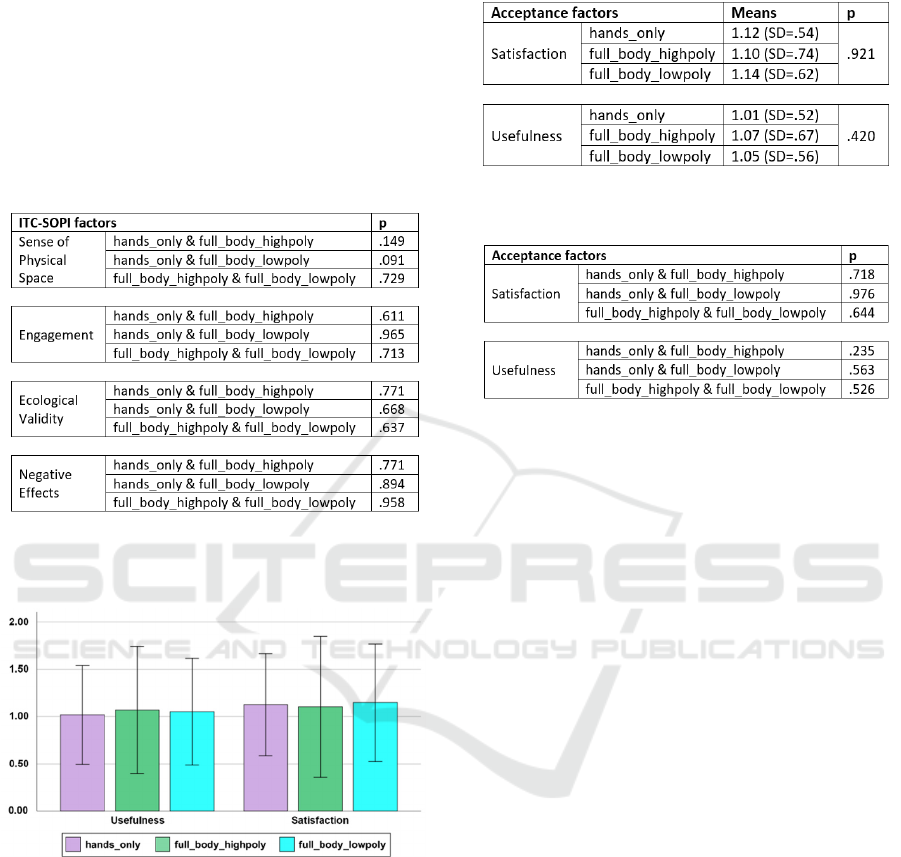

For the evaluation of the acceptance of the avatar
representations, we also calculated the means and
standard deviations (see Figure 7 and Table 3).

Figure 7: Bar charts of the means of the acceptance scale

factors for the three avatar representations.

A Shapiro-Wilk test indicated that the
assumption of normality had been violated. Thus, a
Friedman test for paired samples at the 5%
significance level was calculated (see Table 3).
No significant effect of the avatar
representation on the acceptance scale was detected.

A Wilcoxon signed-rank test for paired samples
at the 5% significance level was conducted to check
for significant differences between each pair of
avatar representations on the acceptance scale, but
resulted in no significant differences (see Table 4).

Table 3: Means, (standard deviations) and p-values of the

acceptance scale factors for the three avatar representations.

Table 4: Results of the Wilcoxon signed-rank test for the

acceptance scale.

5 DISCUSSION

Overall, all three avatars reached high values for
presence and a good acceptance score. The
factor “Sense of Physical Space” obtained a very
high score for all avatars, which indicates that the
participants had the feeling of being placed in the
virtual room and that they felt positively about the
available interactions and the controllability of the
task. Additionally, the possibility of real walking
supports the feeling of being placed there. The
“Engagement” factor also reached very high values,
which strengthens the assessment that the participants
enjoyed the tasks and the way they could interact with
and within the virtual world. These findings were
supported by the feedback the participants gave to the
instructor.

The results of the significance tests revealed no
significant differences between the avatar
representations in the scores of the ITC-SOPI and the
acceptance scale. Therefore, H1, H2 and H3 have to
be rejected. These findings can be explained
by several factors. First, all the representations of the
avatars showed too many similarities, especially in
the visualisation and animation of the hands, so that
the perceived differences were too small to be
reflected in the questionnaires.

The second factor is that most of the participants had little
to no contact with VR scenarios before, making it
possible that this lack of experience biased their
assessment of the visualisations due to the small
number of VR experiences they could compare them to. The low
experience levels regarding VR could also affect the
enjoyment of the virtual task, considering the novelty
of the sensation of exploring virtual
environments (Brade et al., 2017). This is
supported by the exceedingly positive feedback
the participants gave to the instructor. More
experienced users could be expected to take a
more critical view of the presented avatars, but the
sample of the study did not allow such an
evaluation.

The third and most important factor is that the
participants noticed no significant differences in the
avatar representations because they were primarily
focused on the tasks and had a limited view of the
parts of the avatars apart from the hands themselves.
Even though the focus of the study was deliberately
placed on such a first person view during a table-based
assembly task for exactly these reasons, the
differences between the floating hands and the full
body avatars were expected to be greater. As the attention
of the participants lay mainly on the hands and not
on the other body parts, because all tasks were mainly
manual, the findings of this study should be
verified for other actions, such as climbing stairs or
sitting down on chairs.

These factors are corroborated by the findings of
Lugrin et al. (2015b). They showed that there is no
significant difference between non-realistic and
realistic self-avatars, though limited to the
representation of the user’s arm, when the tasks that
need to be completed draw the user’s attention away
from viewing the avatar. This is in contrast
with situations where the user views the avatar not
only from the first person view but also in a virtual
mirror. In that case, “realistic avatars also evoked a
significantly higher acceptance of the virtual body to
be one’s own body concerning the illusion of virtual
body ownership”, as shown by Latoschik et
al. (2017). Therefore, it can be expected that the time
the user has to actively view the avatar is an
influencing factor for presence and acceptance.

Precisely because no significant
differences were measured, the outcome of the study
lessens the effort needed to create VR-based training
and education scenarios: it indicates that
there is no necessity for a highly detailed avatar to
strengthen the perceived presence and acceptance
in scenarios consisting mainly of manual
assembly tasks. Therefore, difficult and time-consuming
creation processes for anatomically
correct, tracking-based animated
avatars can be reduced to focus on the body parts
directly needed to complete the given training tasks.

6 CONCLUSIONS

The current study evaluated the effect of different

avatars on perceived presence and acceptance during

a manual assembly task. The results show that the

tested avatars did not differ significantly concerning

presence and acceptance measures. Both full body
manikins as well as the “floating hands” avatar
reached high presence and acceptance values.

Therefore, it can be concluded that, if the focus of the

simulated task lies on manual activities during which

the view on the avatar is mainly limited to the hands

and arms, no full body manikin is necessary.

Because the conducted study considered mainly
manual, table-based assembly activities, the
transferability of the results to other tasks is limited.
To verify the findings for other tasks, a second study
should address assembly and maintenance tasks that
involve different postures, like crouching or climbing,
or that in general involve more body-related activities.

ACKNOWLEDGEMENTS

This project is co-financed with tax money based on

the state budget, passed by the representatives of the

Saxon Landtag.

REFERENCES

Argelaguet, F., Hoyet, L., Trico, M., & Lécuyer, A. 2016.

The role of interaction in virtual embodiment: Effects

of the virtual hand representation. Paper presented at

the 2016 IEEE Virtual Reality (VR).

Brade, J., Lorenz, M., Busch, M., Hammer, N., Tscheligi,

M., & Klimant, P. 2017. Being there again–presence in

real and virtual environments and its relation to

usability and user experience using a mobile navigation

task. International Journal of Human-Computer

Studies, 101, 76-87.

Ehrsson, H. H., Spence, C., & Passingham, R. E. 2004.

That's my hand! Activity in premotor cortex reflects

feeling of ownership of a limb. Science, 305(5685),

875-877.

Gerhard, M., Moore, D. J., & Hobbs, D. J. 2001.

Continuous presence in collaborative virtual

environments: Towards a hybrid avatar-agent model

for user representation. Paper presented at the

International Workshop on Intelligent Virtual Agents.

Heidicker, P., Langbehn, E., & Steinicke, F. 2017.
Influence of avatar appearance on presence in social
VR. Paper presented at the 2017 IEEE Symposium on
3D User Interfaces (3DUI).

Jung, S., & Hughes, C. E. 2016. The effects of indirect real

body cues of irrelevant parts on virtual body ownership

and presence. Paper presented at the Proceedings of the

26th International Conference on Artificial Reality and

Telexistence and the 21st Eurographics Symposium on

Virtual Environments.

Kilteni, K., Groten, R., & Slater, M. 2012. The sense of

embodiment in virtual reality. Presence: Teleoperators

and Virtual Environments, 21(4), 373-387.

Latoschik, M. E., Roth, D., Gall, D., Achenbach, J.,

Waltemate, T., & Botsch, M. 2017. The effect of avatar

realism in immersive social virtual realities. Paper

presented at the Proceedings of the 23rd ACM

Symposium on Virtual Reality Software and

Technology.

Lenggenhager, B., Tadi, T., Metzinger, T., & Blanke, O.

2007. Video ergo sum: manipulating bodily self-

consciousness. Science, 317(5841), 1096-1099.

Lessiter, J., Freeman, J., Keogh, E., & Davidoff, J. 2001.

A Cross-Media Presence Questionnaire: The ITC-

Sense of Presence Inventory. Presence: Teleoperators

and Virtual Environments, 10 (3), 282-297.

Lok, B., Naik, S., Whitton, M., & Brooks, F. P. 2003.

Effects of handling real objects and self-avatar fidelity

on cognitive task performance and sense of presence in

virtual environments. Presence: Teleoperators &

Virtual Environments, 12(6), 615-628.

Lugrin, J.-L., Latt, J., & Latoschik, M. E. 2015a. Avatar

anthropomorphism and illusion of body ownership in

VR. Paper presented at the 2015 IEEE Virtual Reality

(VR).

Lugrin, J.-L., Wiedemann, M., Bieberstein, D., &

Latoschik, M. E. 2015b. Influence of avatar realism on

stressful situation in VR. Paper presented at the 2015

IEEE Virtual Reality (VR).

Mania, K., & Chalmers, A. 2004, July. The Effects of

Levels of Immersion on Memory and Presence in

Virtual Environments: A Reality Centered Approach.

CyberPsychology & Behavior 4 (2), 247-364.

Maselli, A., & Slater, M. 2013. The building blocks of the

full body ownership illusion. Frontiers in human

neuroscience, 7, 83.

Mori, M., MacDorman, K. F., & Kageki, N. 2012. The

uncanny valley [from the field]. IEEE Robotics &

Automation Magazine, 19(2), 98-100.

Nash, E. B., Edwards, G. W., Thompson, J. A., & Barfield,

W. 2000. A review of presence and performance in

virtual environments. International Journal of human-

computer Interaction, 12(1), 1-41.

Petkova, V. I., & Ehrsson, H. H. 2008. If I were you:

perceptual illusion of body swapping. PloS one, 3(12),

e3832.

Sanchez-Vives, M. V., Spanlang, B., Frisoli, A.,

Bergamasco, M., & Slater, M. 2010. Virtual hand

illusion induced by visuomotor correlations. PloS one,

5(4), e10381.

Schuemie, M. J., van der Straaten, P., Krijn, M., & van der

Mast, C. A. P. G. 2001. Research on Presence in Virtual

Reality: A Survey. CyberPsychology & Behavior 4 (2),

183-201.

Slater, M. 1999. Measuring presence: A response to the

Witmer and Singer presence questionnaire. Presence,

8(5), 560-565.

Slater, M., & Steed, A. 2000. A virtual presence counter.

Presence: Teleoperators & Virtual Environments, 9(5),

413-434.

Slater, M., Usoh, M., & Steed, A. 1995. Taking steps: the

influence of a walking technique on presence in virtual

reality. ACM Transactions on Computer-Human

Interaction (TOCHI), 2(3), 201-219.

Slater, M., & Wilbur, S. 1997. A Framework for Immersive

Virtual Environments (FIVE): Speculations on the Role

of Presence in Virtual Environments. Presence:

Teleoperators and Virtual Environments, 6 (6), 603-616.

Steed, A., Pan, Y., Zisch, F., & Steptoe, W. 2016. The

impact of a self-avatar on cognitive load in immersive

virtual reality. Paper presented at the 2016 IEEE

Virtual Reality (VR).

Tanaka, K., Nakanishi, H., & Ishiguro, H. 2015. Physical

embodiment can produce robot operator’s pseudo

presence. Frontiers in ICT, 2, 8.

Tsakiris, M., & Haggard, P. 2005. The rubber hand illusion

revisited: visuotactile integration and self-attribution.

Journal of Experimental Psychology: Human

Perception and Performance, 31(1), 80.

Van Der Laan, J. D., Heino, A., & De Waard, D. 1997. A

simple procedure for the assessment of acceptance of

advanced transport telematics. Transportation

Research Part C: Emerging Technologies, 5(1), 1-10.

Waltemate, T., Gall, D., Roth, D., Botsch, M., & Latoschik,

M. E. 2018. The impact of avatar personalization and

immersion on virtual body ownership, presence, and

emotional response. IEEE transactions on visualization

and computer graphics, 24(4), 1643-1652.

Weiss, P. L., Kizony, R., Feintuch, U., & Katz, N. 2006.

Virtual reality in neurorehabilitation. Textbook of

neural repair and rehabilitation, 51(8), 182-197.
