Virtual Reality Techniques for 3D Data-Warehouse Exploration

Hamza Hamdi, Eulalie Verhulst and Paul Richard

Laboratoire Angevin de Recherche en Ingénierie des Systèmes (LARIS - EA 7315), Université d’Angers, Angers, France

Keywords:

Virtual Environments, Interaction Techniques, Navigation, Data Warehouse, Human Performance.

Abstract:

This paper focuses on the evaluation of virtual reality (VR) interaction techniques for the exploration of a data warehouse (DW). The experimental DW involves hierarchical levels and contains information about customer profiles and related purchased items. A user study was carried out to compare two navigation and selection techniques. Sixteen volunteers were instructed to explore the DW and look for information using interaction techniques involving either a single Wiimote™ (monomanual) or both a Wiimote™ and a Nunchuck™ (bimanual). Results indicated that the bimanual interaction technique is more efficient in terms of speed; the average number of errors was also lower, although this difference was not statistically significant. Moreover, most of the participants preferred the bimanual interaction technique and found it more appropriate for the exploration task. We also observed that males were faster and made fewer errors than females with both interaction techniques.

1 INTRODUCTION

Corporations worldwide are mining their data to learn about client purchasing patterns, fraud, credit applications and health care outcomes. The worldwide business intelligence and data warehousing market has reportedly grown by about 60% year over year. As one would expect, there has been a surge in the number of applications that provide DW creation, management and mining. A DW is a subject-oriented, integrated, time-variant and non-volatile collection of data in support of management’s decision-making process (Han and Kamber, 2000).

During the last decade, significant research efforts have been devoted to facilitating access to information. In this context, typical relational database systems were optimized for query processing and online transaction processing (OLTP), which minimizes the time needed for the systematic daily operations of an organization (Sawant et al., 2000; Ammoura et al., 2001). VR techniques were also proposed to immerse experts in 3D DWs. This approach relies heavily on the human ability to explore, perceive, and process 3D information. In this context, different VR setups and applications have been developed (Valdés, 2005; Ogi et al., 2009; Nagel et al., 2008). Most of these involved complex systems and user interfaces such as the CAVE™ (Cruz-Neira et al., 1992). With the continuous improvements in computer technology and video games, it is now possible to explore 3D data sets with almost standard PCs and low-cost interaction devices such as the Nintendo Wiimote™. However, usability studies and human performance evaluations still need to be carried out in order to arrive at intuitive and efficient interaction techniques.

In this paper, we report on a user study that compares two interaction techniques for the exploration of a data warehouse with a specific graphic encoding. Sixteen volunteers were instructed to look for information using an interaction technique involving either a Wiimote™ (monomanual technique) or both a Wiimote™ and a Nunchuck™ (bimanual technique).

In the next section, we give a short survey of VR techniques used in the context of Visual Data Mining (VDM) and then describe some work on 3D interaction techniques in Virtual Environments (VEs). In Section 3, we describe the experimental data set and the proposed graphic encoding of these data. Section 4 presents the proposed interaction techniques based on the Nintendo Wiimote™ and Nunchuck™. Section 5 is dedicated to the experiment and the analysis of the results. The paper ends with a conclusion and some directions for future work.

Hamdi H., Verhulst E. and Richard P.

Virtual Reality Techniques for 3D Data-Warehouse Exploration.

DOI: 10.5220/0006130400750083

In Proceedings of the 12th International Joint Conference on Computer Vision, Imaging and Computer Graphics Theory and Applications (VISIGRAPP 2017), pages 75-83

ISBN: 978-989-758-229-5

Copyright © 2017 by SCITEPRESS – Science and Technology Publications, Lda. All rights reserved

2 RELATED WORKS

2.1 Visual Data Mining

Visual data mining (VDM) aims to integrate the human into the data exploration process, applying human perceptual abilities to the large data sets available in today’s computer systems. The main idea of VDM is to present the data set in some visual form, allowing the human to gain insight into the data, draw conclusions, and directly interact with the data. VDM techniques have proven to be of high value in exploratory data analysis and also have a high potential for exploring large databases. According to (Wong and Bergeron, 1997), exploratory data analysis and VDM are not only a set of tools but also a philosophy for approaching the problem of knowledge discovery.

VDM methods, as well as traditional data exploration methods, have been implemented in VR on several occasions (Symanzik et al., 1997; Wegman and Symanzik, 2002). In 1999, a research project called 3D Visual Data Mining (3DVDM) was initiated at the VR Media Lab at Aalborg University to study how VR may be used in VDM (Nagel et al., 2001; Granum and Musaeus, 2002). Among the facilities of the VR Media Lab are a 3D Power Wall, a 160-degree Panorama, a 6-sided CAVE, and a 16-processor SGI Onyx2. Another project, called DIVE-ON (Data mining in an Immersed Virtual Environment Over a Network), uses advances in VR, databases, and distributed computing to experiment with a new approach to VDM. For example, the DWs generated by DIVE-ON were N-dimensional data cubes (Ammoura et al., 2001).

2.2 3D Interaction Techniques

3D interaction techniques are generally classified as follows (Mine, 1995; Hand, 1997; Zeleznik et al., 1997): selection, manipulation, navigation and application control. Navigation is composed of two tasks: travelling and way-finding (Bowman et al., 2001). Travelling is the main component of navigation and refers to the physical displacement from one place to another. Way-finding is the cognitive component of navigation: it allows users to locate themselves in the VE and to choose a trajectory for displacement. Both aspects of navigation are crucial for the efficient exploration of a 3D DW.

Several studies aimed to develop techniques for specific tasks and applications. (Bowman et al., 1997) suggested a framework for evaluating the quality of interaction techniques for specific tasks in VEs. Their results indicated that pointing techniques are advantageous relative to gaze-directed steering techniques for a relative motion task. Moreover, they observed that motion techniques which instantly teleport users to new locations are correlated with increased user disorientation. Some hand-directed motion techniques have also been proposed for navigation, in which the position and orientation of the hand determine the direction of motion through the VE. Wii devices have been adopted by a number of researchers for a wide variety of applications (Schlomer et al., 2008). Generally, when the Wiimote™ is used for navigation, rotation angles (pitch, yaw, and roll) are used. For example, (Duran et al., 2009) used the Wiimote™ to control a wheelchair using pitch and yaw movements. (Fikkert et al., 2009; Fikkert et al., 2010) proposed interaction techniques using the Wiimote™ and the Wii Balance Board™. Both input devices were used to navigate a maze application. (Yamaguchi et al., 2011) developed a 3D interaction technique to explore Google Earth using the Nintendo Wii devices. The Wiimote™ was used for zooming and steering and the Balance Board™ was used for walking. The authors tested the operation workload for 9 different threshold angle combinations. They found that the lowest workload was obtained with threshold angles of 45 degrees (zooming out) / -15 degrees (zooming in) and 30 degrees (steering right) / -30 degrees (steering left). Some more recent approaches have also been proposed for navigation in VEs (Fajnerova et al., 2015; Gaona et al., 2016; Christou et al., 2016).

3 DATABASE AND GRAPHIC ENCODING

The aim of the graphic encoding is to rewrite the data in the form of graphic objects by associating each variable in the data with a graphic variable (position, length, surface, color, luminosity, saturation, form, texture, etc.). Graphic objects can have from zero to three dimensions, i.e. a point, a line, a surface, or a volume. The evolution of the objects over time can introduce an additional dimension. Several authors have worked on the classification of graphic encodings in order to determine which are most effective for the data to be represented. For statistical graphs (scatter plots, diagrams, etc.), see the work of (Cleveland and McGill, 1984) and (Wilkinson, 1999).

3.1 Description of the Database

HUCAPP 2017 - International Conference on Human Computer Interaction Theory and Applications

For the experiment, we modelled a simple customer relationship management (CRM) database that gathers information for describing and characterizing customer purchases. This database is primarily characterized by:

• the Customer table, which is characterized by the identifier, first name, family name, age, sex, marital status and number of credits;

• the Product table, where each product is identified by a unique code and described by its label, product category (fruits, vegetables, drinks), unit price and stock.

This database is described under the Microsoft

Access basic management system, and include 200

customers. The Figure 1 illustrates the selected

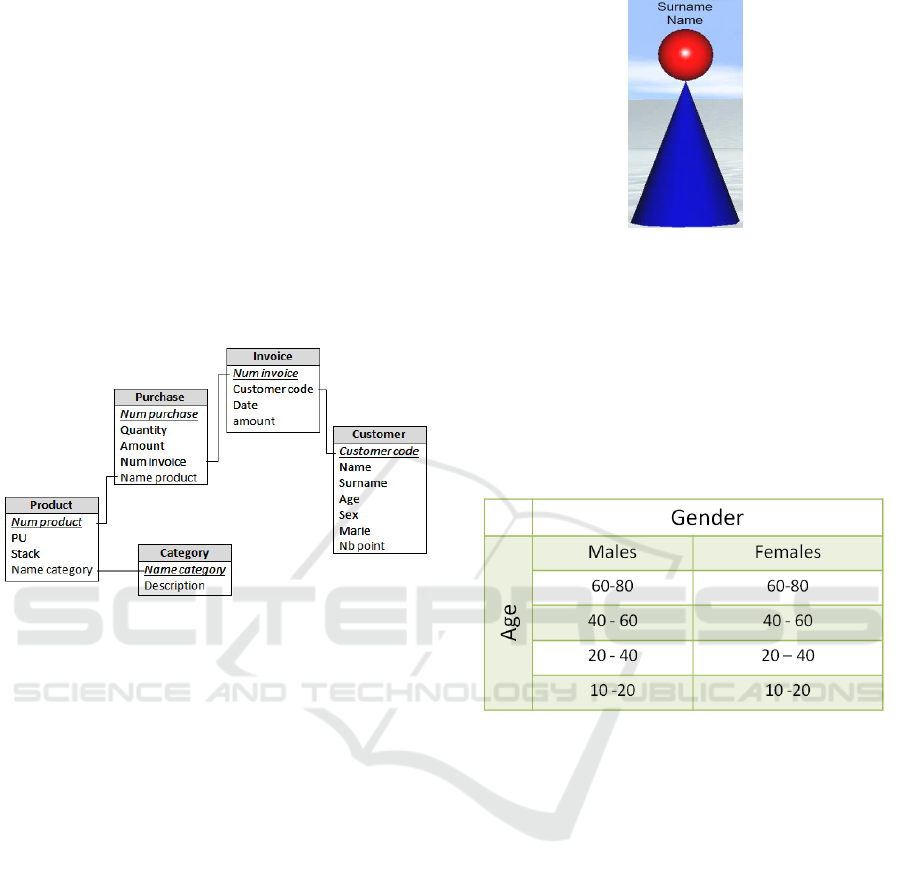

database using the relational model.

Figure 1: Model of data design.
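As an illustration only, the two tables described above can be sketched with Python's built-in sqlite3 module. The table and column names are hypothetical, and the purchase link table is an assumption: the text implies that purchases are stored but does not describe their structure. The sample rows are made up.

```python
import sqlite3

# Hypothetical names; only the attribute lists come from the text above.
SCHEMA = """
CREATE TABLE customer (
    customer_id    INTEGER PRIMARY KEY,
    first_name     TEXT NOT NULL,
    family_name    TEXT NOT NULL,
    age            INTEGER,
    sex            TEXT CHECK (sex IN ('F', 'M')),
    marital_status TEXT,
    credits        INTEGER
);
CREATE TABLE product (
    product_code TEXT PRIMARY KEY,
    label        TEXT NOT NULL,
    category     TEXT,
    unit_price   REAL,
    stock        INTEGER
);
-- Assumed link table (not described in the text).
CREATE TABLE purchase (
    customer_id  INTEGER REFERENCES customer(customer_id),
    product_code TEXT REFERENCES product(product_code),
    month        INTEGER CHECK (month BETWEEN 1 AND 12),
    quantity     INTEGER NOT NULL
);
"""


def build_demo_db():
    """Create an in-memory database with a few made-up rows."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(SCHEMA)
    conn.execute(
        "INSERT INTO customer VALUES (1, 'Ada', 'Martin', 31, 'F', 'single', 2)"
    )
    conn.executemany(
        "INSERT INTO product VALUES (?, ?, ?, ?, ?)",
        [("P1", "Apple", "fruits", 0.5, 100), ("P2", "Water", "drinks", 0.8, 50)],
    )
    conn.executemany(
        "INSERT INTO purchase VALUES (?, ?, ?, ?)",
        [(1, "P1", 1, 4), (1, "P2", 1, 2), (1, "P1", 2, 3)],
    )
    return conn


def items_purchased(conn, customer_id):
    """Total number of items bought by one customer (0 if none)."""
    row = conn.execute(
        "SELECT COALESCE(SUM(quantity), 0) FROM purchase WHERE customer_id = ?",
        (customer_id,),
    ).fetchone()
    return row[0]
```

A per-customer total such as the one returned by items_purchased is the kind of quantity that the graphic encoding of Section 3.2 maps to a visual attribute.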

3.2 Graphic Encoding

Each element of the database was translated into a graphic representation (position and size). The proposed graphic encoding of each customer is illustrated in Figure 2. The height of the cone represents the number of purchased items, while the radius of the sphere represents the customer’s share of expenditure relative to the other customers. The colors of the spheres and cones were randomly selected so that each customer can be easily distinguished. More precisely, a large cone attached to the lower part of a sphere represents a customer whose expenditure is high, while a small cone attached to the lower part of a sphere represents a low-expenditure customer. Moreover, complementary text labels are displayed above each object to give the first name and family name of the customer.
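The mapping from customer data to glyph size can be sketched as follows. This is a minimal illustration, not the authors' implementation: the Glyph type, the function name and the scaling constants (max_height, max_radius) are assumptions, and the input values are made up.

```python
from dataclasses import dataclass


@dataclass
class Glyph:
    label: str            # text shown above the object
    cone_height: float    # encodes the number of purchased items
    sphere_radius: float  # encodes the share of total expenditure


def encode_customers(customers, max_height=2.0, max_radius=0.5):
    """customers: list of (name, items_purchased, expenditure) tuples.

    Heights and radii are scaled so that the most active customer
    reaches max_height and the biggest spender reaches max_radius
    (both scaling choices are assumptions).
    """
    most_items = max(items for _, items, _ in customers)
    total_spent = sum(spent for _, _, spent in customers)
    if most_items <= 0 or total_spent <= 0:
        raise ValueError("at least one customer must have purchases")
    shares = [spent / total_spent for _, _, spent in customers]
    largest_share = max(shares)
    return [
        Glyph(name, max_height * items / most_items,
              max_radius * share / largest_share)
        for (name, items, _), share in zip(customers, shares)
    ]
```

With this scaling, the tallest cone and the largest sphere always have the same size regardless of the database, which keeps different VEs visually comparable.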

Figure 2: Graphic encoding of each customer.

Each customer has been placed on the right (females) or left (males) side of the VE according to his/her gender (Fig. 3 (a)). As can be seen, this graphic encoding strongly highlights the most active customers. Similarly, the customers have been placed at different depths according to their age, as shown in Table 1. The database contains the customers’ purchase history (last twelve months only), classified by category (clothes, fruits, meat, vegetables, yoghurts, fish, cheese, drinks). These data are visualized using three-dimensional histograms representing the amount of each product versus the month of purchase. The histograms are positioned similarly to the shelves of a supermarket (Fig. 3 (b)).

Table 1: Customer segmentation according to age.
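The placement rule (side by gender, depth by age segment) can be illustrated with the short sketch below. The age boundaries, spacing values and function name are hypothetical, since the contents of Table 1 are not reproduced here.

```python
from bisect import bisect_right

# Hypothetical values: the actual age segments of Table 1 are not available.
AGE_BOUNDS = [25, 35, 50, 65]  # boundaries between successive depth rows
ROW_SPACING = 5.0              # distance between two depth rows
SIDE_OFFSET = 3.0              # distance from the central aisle


def place_customer(sex, age, slot):
    """Return (x, z) for a customer.

    x > 0 is the right side (females), x < 0 the left side (males);
    z grows with the age segment; slot is the index within the row.
    """
    side = 1.0 if sex == "F" else -1.0
    row = bisect_right(AGE_BOUNDS, age)
    return side * (SIDE_OFFSET + slot), row * ROW_SPACING
```

For instance, place_customer("F", 30, 0) places a 30-year-old female customer on the right side, in the second depth row under these assumed boundaries.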

4 INTERACTION TECHNIQUES

4.1 Interaction Modelling

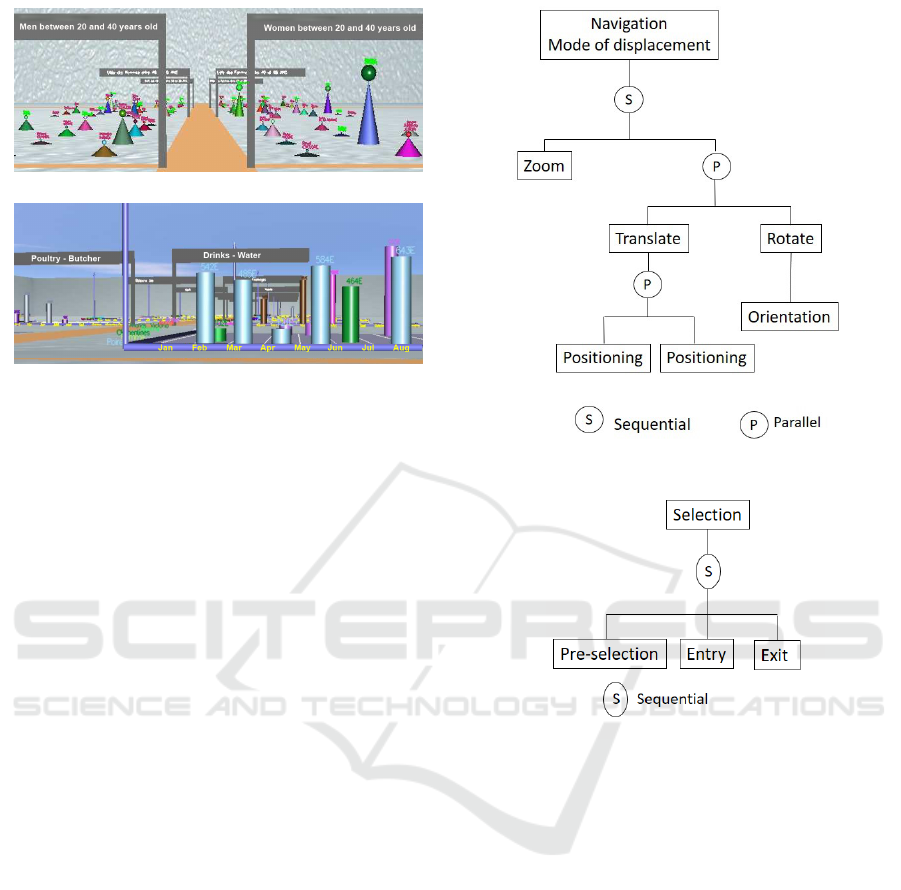

We propose two interaction modes for the exploration of the data warehouse: (1) the navigation mode and (2) the selection mode. The navigation mode allows the user to navigate and explore both the VE containing the customer representations and each VE containing a customer’s data. This approach is illustrated by the hierarchical model presented in Figure 4.

• The Translate function allows the user to move the

camera (viewpoint) along the lateral axis and/or

the depth axis;

• The Zoom function allows the user to zoom on a

selected customer, whatever his/her distance from

the user;

• The Rotate function allows the user to rotate the

camera relative to the vertical axis (steering).
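The three navigation functions listed above can be sketched as operations on a simple camera model. This is an illustrative sketch under stated assumptions: the Camera class, the axis convention (heading 0 looks along the depth axis) and the standoff distance are not taken from the paper.

```python
import math
from dataclasses import dataclass


@dataclass
class Camera:
    x: float = 0.0        # position on the lateral axis
    z: float = 0.0        # position on the depth axis
    heading: float = 0.0  # rotation about the vertical axis, in radians

    def translate(self, lateral, depth):
        """Translate: move along the camera's own lateral and depth axes."""
        self.x += lateral * math.cos(self.heading) + depth * math.sin(self.heading)
        self.z += -lateral * math.sin(self.heading) + depth * math.cos(self.heading)

    def rotate(self, angle):
        """Rotate: steer about the vertical axis."""
        self.heading = (self.heading + angle) % (2 * math.pi)

    def zoom_on(self, target_x, target_z, standoff=2.0):
        """Zoom: jump in front of a target, whatever its distance."""
        dx, dz = target_x - self.x, target_z - self.z
        dist = math.hypot(dx, dz)
        if dist == 0.0:
            return  # already at the target, nothing to do
        self.heading = math.atan2(dx, dz) % (2 * math.pi)
        self.x = target_x - standoff * dx / dist
        self.z = target_z - standoff * dz / dist
```

Because translate works in the camera's own frame, translation and rotation can be applied in the same frame update, which matches the simultaneous control described for both interaction techniques.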


Figure 3: Illustration of the 3D database: (a) customer visualization, (b) purchased items of a given customer.

The selection mode is split into three sub-modes: Pre-selection, Entry, and Exit. It allows the user to select a given customer at any time and at any distance. This mode is illustrated by the hierarchical model presented in Figure 5.

• The Pre-selection function triggers an automatic zoom on a selected customer in order to quickly and easily get his/her personal information;

• The Entry function teleports the user into the VE containing the selected customer’s personal data;

• The Exit function brings the user back to the main VE. This function can be activated at any time and anywhere in the VE containing the customer’s personal data and does not require any selection process.

4.2 Implementation of Interaction

In order to propose a low-cost system, we im-

plemented the interaction technique using the

Wiimote

TM

and the Nunchuck

TM

. The Wiimote

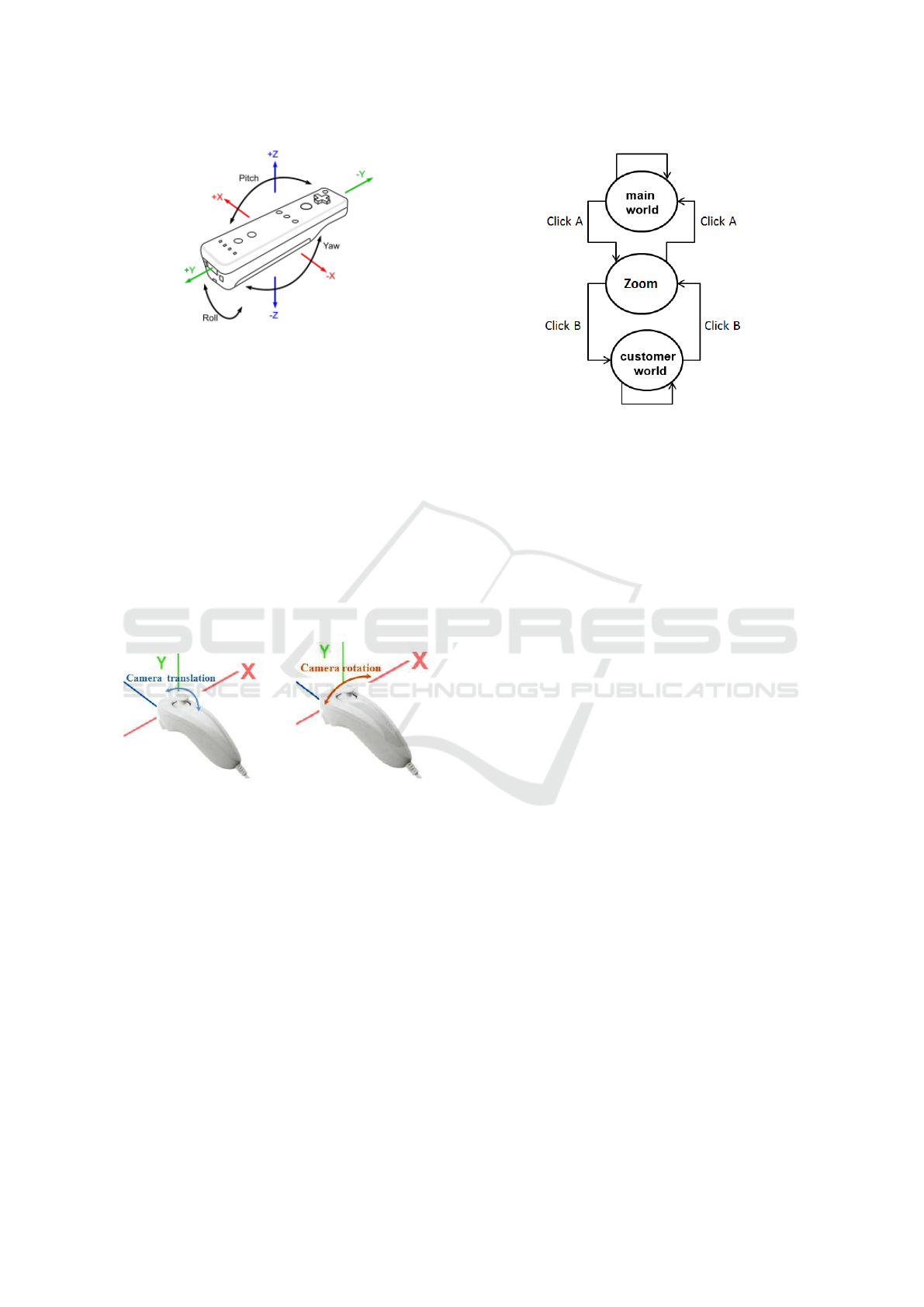

TM

(Fig. 6) has the capability to track the user’s hand ori-

entation along two degrees of freedom (pitch and roll)

using inertial sensors (accelerometers / gravimeters).

In addition, the Wiimote

TM

has 12 buttons which

could be used to trigger events. The Wiimote

TM

is

also equiped with an infrared emitter which could be

used to track yaw movements. The Wiimote

TM

com-

municates with the computer using Bluetooth wire-

less communication an could be connected with the

Nunchuck

TM

in order to add a second set of 3 ac-

celerometers along with 2 trigger-style buttons and

Figure 4: Hierarchical model for the navigation mode.

Figure 5: Hierarchical model of the selection mode.

an analog joystick. therefore, the combination of the

Wiimote

TM

/Nunchuck

TM

may be used as a bimanual

user input.

4.2.1 Mono-manual Interaction Technique

For this approach, the Wiimote™ was used to carry out forward and backward movements (pitch) and steering movements (roll). It was also used to switch between the navigation and selection modes (B button). This approach allows simultaneous control of the translation and rotation of the virtual camera. In the selection mode, the mouse cursor was also moved using the pitch and roll movements, which avoids the need for the infrared emitter/receiver. To select a given customer and trigger the teleportation of the user into the VE containing his/her personal data (customer world), the A button of the Wiimote™ was used.


Figure 6: Rotational degrees of freedom (pitch, roll and yaw) provided by the Wiimote™.
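As a rough illustration of how pitch and roll could drive the camera in the monomanual technique, the sketch below estimates both angles from a static accelerometer reading and maps them to forward and steering commands. The axis convention, sign conventions, dead zone and saturation angle are all assumptions and are not taken from the paper.

```python
import math


def tilt_from_gravity(ax, ay, az):
    """Estimate (pitch, roll) in radians from a static reading in g units.

    Assumed axes: x to the right, y forward, z up when the device lies flat.
    """
    pitch = math.atan2(ay, math.hypot(ax, az))
    roll = math.atan2(ax, az)
    return pitch, roll


def tilt_to_motion(pitch, roll,
                   dead_zone=math.radians(10),
                   max_tilt=math.radians(45),
                   max_speed=1.0, max_turn=1.0):
    """Map tilt angles to (forward_speed, turn_rate).

    Angles inside the dead zone give no motion; beyond max_tilt the
    command saturates. Positive pitch is taken as "forward" (assumption).
    """
    def scale(angle, limit):
        magnitude = abs(angle)
        if magnitude <= dead_zone:
            return 0.0
        fraction = min((magnitude - dead_zone) / (max_tilt - dead_zone), 1.0)
        return math.copysign(fraction * limit, angle)

    return scale(pitch, max_speed), scale(roll, max_turn)
```

A dead zone of this kind is a common way to keep the camera still when the hand is held roughly level; the 10-degree value is arbitrary here.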

4.2.2 Bi-manual Interaction Technique

The proposed bi-manual interaction technique uses both the Wiimote™ and the Nunchuck™. The former device is used only for customer selection and for triggering the teleportation into the customer world and the return of the user to the main VE. The latter device is used exclusively for navigation, i.e. to control the forward/backward (Fig. 7 (a)) and steering (Fig. 7 (b)) movements of the virtual camera. This is done using the joystick of the Nunchuck™. As in the previous navigation technique, this approach allows simultaneous control of the translation and rotation of the virtual camera.

Figure 7: Control of forward/backward (a) and steering (b) movements using the Nunchuck™.

5 USER STUDY

5.1 Task

Each participant was instructed to explore the customers world and find the three best customers (highest cones) among one hundred customers. Then, they had to select each of these customers and explore their personal data (customer world) to discover the three most purchased items. This procedure is illustrated in Figure 8 using a finite state machine. The task ended when the participant felt he/she had collected the requested information and had returned to the main world.

Figure 8: State machine model of the exploration task.

Whatever the participant’s location, a click on a given customer (using the A button of the Wiimote™) teleports the virtual camera in front of that customer. This allows the participant to read the textual information about the customer’s name and gives him/her the possibility to be teleported into the customer world. The participant may then decide to enter the customer world (using the B button of the Wiimote™), or to go back to his/her initial position and continue the exploration of the main world. In the customer world, the participant can navigate using the same interaction technique and find the requested information (three most purchased items). The participant can then press the B button of the Wiimote™ to return to his/her location in the main world (in front of the previously selected customer).
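The procedure described above can be read as a small finite state machine. The sketch below is one possible reading of the text, not a transcription of Figure 8: the state names and the CANCEL event (returning to the initial position without entering the customer world) are hypothetical labels.

```python
from enum import Enum, auto


class State(Enum):
    MAIN_WORLD = auto()         # free exploration of the customers world
    FRONT_OF_CUSTOMER = auto()  # camera teleported in front of a customer
    CUSTOMER_WORLD = auto()     # inside the selected customer's data


# (state, event) -> next state. "A" and "B" are the Wiimote buttons
# named in the text; "CANCEL" is a hypothetical event name.
TRANSITIONS = {
    (State.MAIN_WORLD, "A"): State.FRONT_OF_CUSTOMER,
    (State.FRONT_OF_CUSTOMER, "A"): State.FRONT_OF_CUSTOMER,  # click another customer
    (State.FRONT_OF_CUSTOMER, "B"): State.CUSTOMER_WORLD,
    (State.FRONT_OF_CUSTOMER, "CANCEL"): State.MAIN_WORLD,
    (State.CUSTOMER_WORLD, "B"): State.FRONT_OF_CUSTOMER,
}


def step(state, event):
    """Return the next state; events with no transition are ignored."""
    return TRANSITIONS.get((state, event), state)


def run(events, state=State.MAIN_WORLD):
    """Apply a sequence of events starting from the main world."""
    for event in events:
        state = step(state, event)
    return state
```

For example, the sequence A, B, B selects a customer, enters the customer world, and comes back in front of the same customer.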

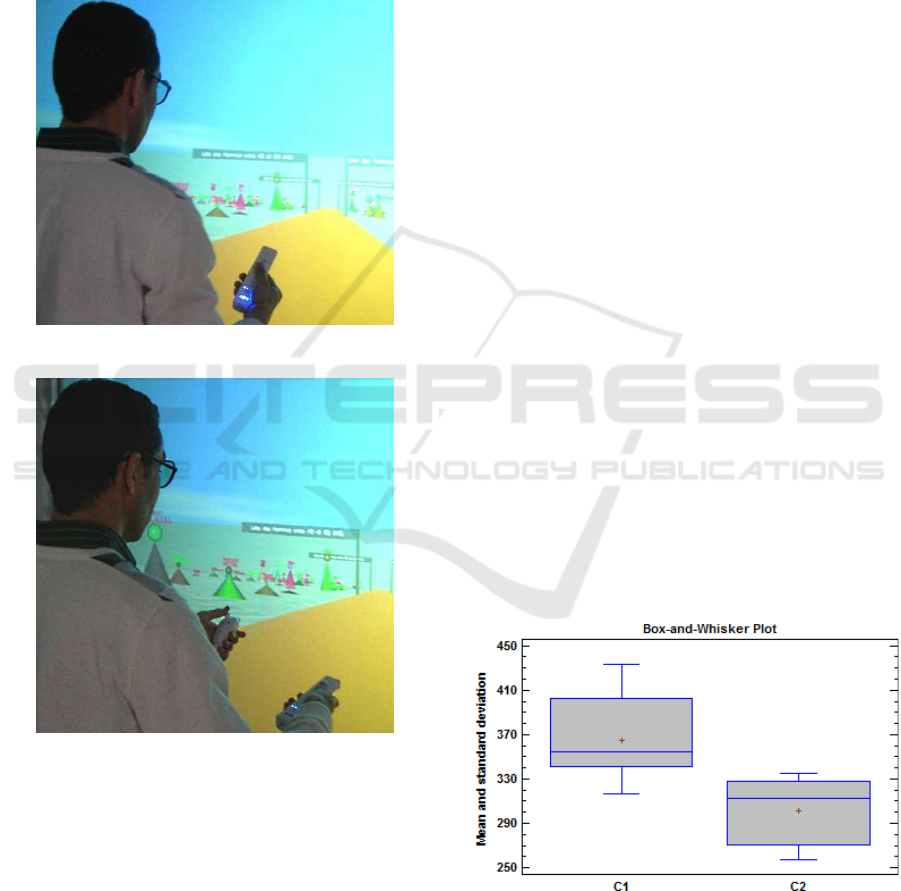

5.2 Design and Procedure

Sixteen participants (8 males and 8 females) were divided into two groups (G1 and G2). They were aged between 22 and 35 years (72.72% right-handed and 27.27% left-handed) and had normal or corrected-to-normal vision. The experiment was conducted under the following two conditions:

• C1: navigation and selection using the Wiimote™ only (Fig. 9 a);

• C2: navigation using the Nunchuck™, selection using the Wiimote™ (Fig. 9 b).

Four different VEs (D1, D2, D3 and D4), each corresponding to a different database, were defined. The participants of group G1 started with the exploration of D1 and D2 using the Wiimote™ only (condition C1). Then, they explored D3 and D4 using the Wiimote™ and Nunchuck™ (condition C2). Conversely, the participants of group G2 started with the exploration of D1 and D2 using the Wiimote™ and Nunchuck™ (condition C2), and then explored D3 and D4 using the Wiimote™ only (condition C1). In order to facilitate the understanding of the experiment, we gave a short description of the task and allowed each participant to get acquainted with the system and practice in both conditions (C1 and C2).
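The counterbalanced design described above can be written down as a small schedule generator. The function names are illustrative, and the alternating assignment of participants to groups is an assumption: the text does not state how participants were split between G1 and G2.

```python
# Order of conditions for each group, as described in the text.
ORDER = {"G1": ["C1", "C2"], "G2": ["C2", "C1"]}


def schedule(group):
    """Return the (database, condition) sequence for one group."""
    first, second = ORDER[group]
    return [("D1", first), ("D2", first), ("D3", second), ("D4", second)]


def assign_groups(participant_ids):
    """Alternate participants between G1 and G2 (assumed assignment rule)."""
    return {
        pid: ("G1" if index % 2 == 0 else "G2")
        for index, pid in enumerate(participant_ids)
    }
```

Each participant therefore experiences both conditions, and the order of conditions is balanced across the two groups.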

Figure 9: A participant exploring the 3D data warehouse using: (a) the Wiimote™ only (condition C1), and (b) both the Wiimote™ and the Nunchuck™ (condition C2).

Each participant was placed in front of a large rear-projected stereoscopic screen (2 × 2.5 m), as illustrated in Figure 9, and equipped with passive (polarized) glasses. A Full HD (1080p) Optoma HD142X projector was used to display the images. The participants were instructed to start the task using either the Wiimote™ (C1) or both the Wiimote™ and Nunchuck™ (C2), according to the group they belonged to. At the end of the experiment, each participant was given a questionnaire in order to collect subjective data about the proposed interaction techniques and the graphic encoding of the data warehouse.

5.3 Collected Data

We recorded the task completion time and the number of errors for each trial and each database (D1, D2, D3, and D4). An error was counted for each wrong selection among the three best customers or the three most purchased items. In order to examine the participants’ behavior during the task, we also recorded the navigation path for each trial.
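One way to score a trial under this definition is to count how many of the true top-three entries are missing from the participant's answer. The helper names, the tie-breaking rule and the example values below are assumptions for illustration.

```python
def top_k(scores, k=3):
    """Names of the k highest-scoring entries (ties broken by name)."""
    ranked = sorted(scores, key=lambda name: (-scores[name], name))
    return set(ranked[:k])


def count_errors(scores, reported, k=3):
    """Number of true top-k entries missing from the reported answer."""
    return len(top_k(scores, k) - set(reported))
```

The same function applies to both levels of the task: scores can hold the number of purchased items per customer (best customers) or the quantity per product (most purchased items).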

5.4 Results

In this section, we present the results of the experimental study. The collected data were analyzed using a one-way repeated-measures ANOVA. The description of the results is based on three criteria: (1) task completion time, (2) average number of errors (number of best customers not selected or number of the three most purchased items not selected), and (3) the participants’ paths, which were recorded in order to analyse their behaviour and strategy and any difficulties with the task.

5.4.1 Effect of Navigation Technique

Task Completion Time. The ANOVA revealed a significant effect of the navigation technique on the task completion time (F(1, 23) = 20.19; p < 0.05). The average completion times for conditions C1 and C2 were about 364.3 s (SD: 36.30) and 300.7 s (SD: 29.75), respectively. This result is illustrated in Figure 10.

Figure 10: Task completion time (s) vs. condition C1 (monomanual) and C2 (bimanual).
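For readers unfamiliar with the test, the F statistic of a one-way repeated-measures ANOVA can be computed as in the sketch below. This is a generic textbook computation, not the authors' analysis script; the function name is illustrative and the data in the usage note are made up, not the study's measurements.

```python
def rm_anova_oneway(data):
    """One-way repeated-measures ANOVA.

    data[i][j] is the measurement of subject i under condition j.
    Returns (F, df_condition, df_error).
    """
    n = len(data)     # number of subjects
    k = len(data[0])  # number of conditions
    grand = sum(map(sum, data)) / (n * k)
    cond_means = [sum(row[j] for row in data) / n for j in range(k)]
    subj_means = [sum(row) / k for row in data]

    ss_cond = n * sum((m - grand) ** 2 for m in cond_means)
    ss_subj = k * sum((m - grand) ** 2 for m in subj_means)
    ss_total = sum((x - grand) ** 2 for row in data for x in row)
    ss_error = ss_total - ss_cond - ss_subj  # condition x subject residual

    df_cond = k - 1
    df_error = (k - 1) * (n - 1)
    f_value = (ss_cond / df_cond) / (ss_error / df_error)
    return f_value, df_cond, df_error
```

As a usage example with hypothetical completion times (in seconds) for four subjects under C1 and C2, rm_anova_oneway([[360, 300], [370, 310], [350, 295], [380, 305]]) returns an F value with 1 and 3 degrees of freedom. With only two conditions, this F equals the square of the paired t statistic.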

Number of Errors. The average numbers of errors for conditions C1 (monomanual) and C2 (bimanual) were 4.27 and 3.73, respectively. A statistical analysis (ANOVA) showed that the interaction technique, either monomanual or bimanual, had no significant effect on the error rate.

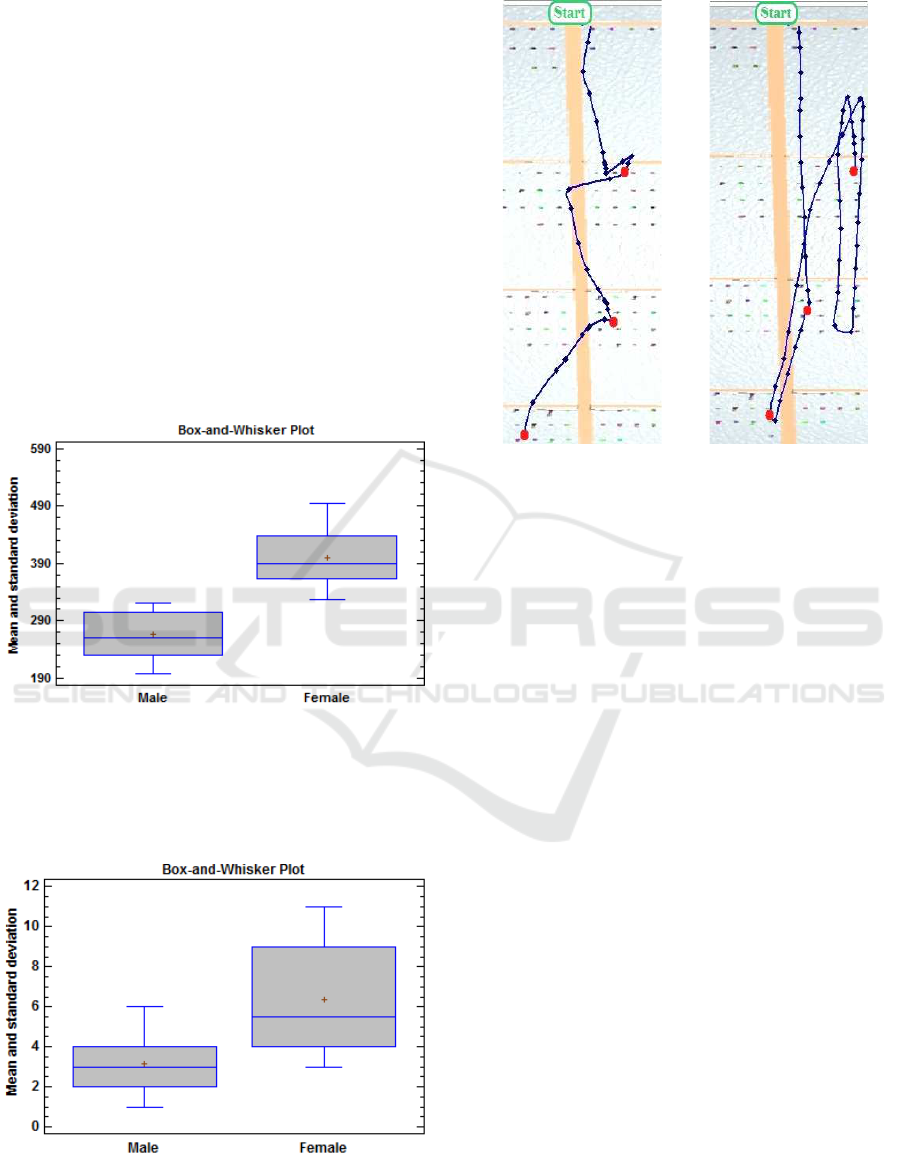

5.4.2 Gender Effect

In the following paragraphs, we look at the effect of gender on the participants’ performance, i.e. task completion time and number of errors.

Task Completion Time. We observed a significant gender effect on task completion time (F(1, 23) = 42.73; p < 0.05). The average completion time was about 266 s (SD: 40.5) for males and 399 s (SD: 53.9) for females (Fig. 11). This suggests that, in this study, females had more difficulty than males in exploring the VEs and finding the requested information.

Figure 11: Task completion time (s) vs. gender.

Number of Errors. The number of errors was on average 3.14 for males and 6.33 for females (Fig. 12). Thus, females made about twice as many errors as males.

Figure 12: Number of errors vs. gender.

Navigation Paths. Figures 13 (a) and 13 (b) illustrate typical paths associated with males and females, respectively. We observed that most females used non-optimal and longer routes than males.

Figure 13: Typical paths obtained by males (a) and females (b).

5.5 Subjective Data

Each participant was asked to fill in a questionnaire and answer the following questions.

Question 1: Which interaction technique do you feel is the most relevant for the task?

Question 2: How would you rate the level of difficulty of the task (simple/difficult/very difficult)?

Results showed that a majority of participants found the bimanual interaction technique more relevant than the monomanual one: 63.63% of them preferred to perform the task using the Wiimote™ together with the Nunchuck™, and 36.36% preferred to perform the task with the Wiimote™ only. Concerning the level of difficulty of the task, most participants (72%) found it simple and had no difficulty understanding the meaning of the graphics.

5.6 Discussion

In this paper, we compared monomanual and bimanual interaction techniques for the exploration of a data warehouse. Participants used the Wiimote™ alone or the Wiimote™ and Nunchuck™. The results revealed that the interaction technique has a significant effect on task completion time, while the difference in the number of errors was not statistically significant (Section 5.4.1). We observed that the bimanual interaction technique (Wiimote™ and Nunchuck™) led to better performance. In addition, most participants preferred this technique over the monomanual one (Wiimote™ only).

These results are consistent with previous results concerning the efficiency of bimanual interaction techniques for complex motor activities (Guiard, 1987). Of course, other combinations of these devices would have been possible, such as selection with the Nunchuck™ and navigation with the Wiimote™. However, the use of the Wiimote™ for selection and the Nunchuck™ for navigation is efficient. Indeed, joysticks are easy to use (Vera et al., 2007) and only the Nunchuck™ has a joystick. The Nunchuck™ may therefore have better usability than the Wiimote™ for navigation. The observation of paths in the customers world revealed that the Nunchuck™ is more appropriate for navigation than the Wiimote™ (less jerky trajectories). We also observed a significant gender effect on task performance. Males performed much better than females in terms of task completion time and error rate with both interaction techniques. It seems that this difference comes from the difficulties females had in navigating and exploring the 3D worlds (Vila et al., 2003). These results (Fig. 13) are in line with previously reported gender differences in spatial orientation (Coluccia and Louse, 2004). Furthermore, these difficulties may have been increased by the complexity of the task and the multi-level hierarchy of the data warehouse, which involves back-and-forth transitions between the customers world and the purchased-items worlds.

6 CONCLUSION AND FUTURE WORK

In this paper, we investigated the use of VR techniques for the exploration of a data warehouse. In this context, we proposed two interaction techniques based on the Wiimote™ and Nunchuck™. Volunteer participants were instructed to explore a data warehouse with a specific graphic encoding and collect information, using either the Wiimote™ only for selection and navigation (monomanual technique) or the Nunchuck™ for navigation and the Wiimote™ for selection (bimanual technique). Results indicated that the proposed bimanual interaction technique is more efficient than the monomanual one in terms of completion time; the average number of errors was also lower, although not significantly so. Moreover, most participants preferred the bimanual interaction technique and found it easier and more appropriate for the required task. Finally, we observed that males were much faster and made fewer errors than females with both interaction techniques. This is consistent with previous results concerning gender differences in navigating complex 3D worlds (Vila et al., 2003). In future work, we will develop and investigate interaction and navigation techniques such as stepping in place. In addition, we will introduce haptic and multimodal assistance to help users find information in the data warehouse. We will also pay attention to the usability of the interaction techniques and to users’ habits.

REFERENCES

Agarwal, S., Agrawal, R., Deshpande, P., Gupta, A., Naughton, J. F., Ramakrishnan, R., and Sarawagi, S. (1996). On the computation of multidimensional aggregates. In Proceedings of the 22nd International Conference on Very Large Data Bases, pages 506–521, San Francisco, CA, USA.

Ammoura, A., Zaïane, O. R., and Ji, Y. (2001). Dive-on: From databases to virtual reality. Crossroads, 7(3):4–11.

Bowman, D. A., Johnson, D. B., and Hodges, L. F. (2001). Testbed evaluation of virtual environment interaction techniques. Presence, 10(1):75–95.

Bowman, D. A., Koller, D., and Hodges, L. F. (1997). Travel in immersive virtual environments: An evaluation of viewpoint motion control techniques. Virtual Reality Annual International Symposium, 215:45–52.

Christou, C., Tzanavari, A., Herakleous, K., and Poullis, C. (2016). Navigation in virtual reality: Comparison of gaze-directed and pointing motion control. In 2016 18th Mediterranean Electrotechnical Conference (MELECON), pages 1–6.

Cleveland, W. S. and McGill, R. (1984). Graphical perception: Theory, experimentation, and application to the development of graphical methods. Journal of the American Statistical Association, 79(387):531–554.

Coluccia, E. and Louse, G. (2004). Gender differences in spatial orientation: A review. Journal of Environmental Psychology, 24(3):329–340.

Cruz-Neira, C., Sandin, D., DeFanti, T., Kenyon, R., and Hart, J. (1992). The cave: audio visual experience automatic virtual environment. Communications of the ACM, 35(6):65–72.

Duran, L., Fernandez-Carmona, M., Urdiales, C., Peula, J., and Sandoval, F. (2009). Conventional joystick vs. wiimote for holonomic wheelchair control. 5517:1153–1160.

Fajnerova, I., Rodriguez, M., Spaniel, F., Horacek, J., Vlcek, K., Levcik, D., Stuchlik, A., and Brom, C. (2015). Spatial navigation in virtual reality: from animal models towards schizophrenia: Spatial cognition tests based on animal research. In International Conference on Virtual Rehabilitation (ICVR), pages 44–50.


Fikkert, F., Hoeijmakers, N., van der Vet, P., and Nijholt, A. (2009). Navigating a maze with balance board and wiimote. Intelligent Technologies for Interactive Entertainment, Lecture Notes of the Institute for Computer Sciences, Social Informatics and Telecommunications Engineering, 9:187–192.

Fikkert, F., Hoeijmakers, N., van der Vet, P., and Nijholt, A. (2010). Fun and efficiency of the wii balance interface. International Journal of Arts and Technology (IJART), 3(4):357–373.

Gaona, P. A. G., Moncunill, D. M., Gordillo, K., and Crespo, R. G. (2016). Navigation and visualization of knowledge organization systems using virtual reality glasses. IEEE Latin America Transactions, 14(6):2915–2920.

Granum, E. and Musaeus, P. (2002). Constructing virtual environments for visual explorers, pages 112–138. Springer-Verlag, London, UK.

Gray, J., Chaudhuri, S., Bosworth, A., Layman, A., Reichart, D., Venkatrao, M., Pellow, F., and Pirahesh, H. (1997). A relational aggregation operator generalizing group-by, cross-tab, and sub-totals. pages 152–159. IEEE International Conference on Data Engineering.

Guiard, Y. (1987). Asymmetric division of labor in human skilled bimanual action: The kinematic chain as a model. Journal of Motor Behavior, 19:486–517.

Han, J. and Kamber, M. (2000). Data Mining: Concepts and Techniques (The Morgan Kaufmann Series in Data Management Systems). Morgan Kaufmann.

Hand, C. (1997). A survey of 3d interaction techniques. Computer Graphics Forum, 16:269–281.

Mine, M. R. (1995). Isaac: A virtual environment tool for the interactive construction of virtual worlds. Technical report, University of North Carolina, Chapel Hill, USA.

Nagel, H. R., Granum, E., Bovbjerg, S., and Vittrup, M. (2008). Immersive visual data mining: The 3dvdm approach. In Visual Data Mining, pages 281–311.

Nagel, H. R., Granum, E., and Musaeus, P. (2001). Methods for visual mining of data in virtual reality. PKDD: International Workshop on Visual Data Mining.

Nelson, L., Cook, D., and Cruz-Neira, C. (1999). Xgobi vs the c2: Results of an experiment comparing data visualization in a 3-d immersive virtual reality environment with a 2-d workstation display. In Computational Statistics: Special Issue on Interactive Graphical Data Analysis, pages 39–51. Communications of the ACM.

Ogi, T., Tateyama, Y., and S, S. (2009). Methods for visual mining of data in virtual reality. In 3rd International Conference on Virtual and Mixed Reality, pages 13–27.

Sawant, N., Scharver, C., Leigh, J., Johnson, A., Reinhart, G., Creel, E., Batchu, S., Bailey, S., and Grossman, R. (2000). The tele-immersive data explorer: A distributed architecture for collaborative interactive visualization of large data-sets. In 4th International Immersive Projection Technology Workshop, Ames, Iowa, USA.

Schlomer, T., Poppinga, B., Henze, S., and Boll, S. (2008). Gesture recognition with a wii controller. In Tangible and Embedded Interaction.

Symanzik, J., Cook, D., Kohlmeyer, B., Lechner, U., and Cruz-Neira, C. (1997). Dynamic statistical graphics in the c2 virtual reality environment. Computing Science and Statistics, 29:41–47.

Valdés, J. J. (2005). Visual data mining of astronomic data with virtual reality spaces, understanding the underlying structure of large data sets. In Astronomical Data Analysis Software and Systems XIV ASP Conference Series, page 51.

Vera, L., Campos, R., Herrera, G., and Romero, C. (2007). Computer graphics applications in the education process of people with learning difficulties. Computers & Graphics, 31(4):649–658.

Vila, J., Beccue, B., and Anandikar, S. (2003). The gender factor in virtual reality navigation and wayfinding. In Proceedings of the 36th Annual Hawaii International Conference on System Sciences, page 7.

Wegman, E. J. and Symanzik, J. (2002). Immersive projection technology for visual data mining. Computational and graphical statistics, 11:163–188.

Wilkinson, L. (1999). The grammar of graphics. Springer-Verlag New York, Inc, New York, NY, USA.

Wong, P. C. and Bergeron, R. D. (1997). 30 years of multidimensional multivariate visualization. In Scientific Visualization, Overviews, Methodologies, and Techniques, pages 3–33, Washington, DC, USA. IEEE Computer Society Press.

Yamaguchi, T., Chamaret, D., and Richard, P. (2011). Evaluation of 3d interaction techniques for google earth exploration using nintendo wii devices. In 6th International Conference on Computer Graphics Theory and Applications (GRAPP’11), pages 334–338, Vilamoura, Portugal.

Zeleznik, R. C., Forsberg, S. A., and Strauss, P. S. (1997). Two pointer input for 3d interaction. In symposium on Interactive 3D graphics, pages 115–120, New York, NY, USA. ACM.
