MODELLING AND MANAGING KNOWLEDGE THROUGH

DIALOGUE: A MODEL OF COMMUNICATION-BASED

KNOWLEDGE MANAGEMENT

Violaine Prince

University of Montpellier and LIRMM-CNRS

161 Ada Street 34392 Montpellier cedex 5 France

Keywords:

Knowledge Management, Knowledge sharing, Multi-agent systems, heterogeneous Agents.

Abstract:

In this paper, we describe a model that relies on the following assumption: ontology negotiation and creation

is necessary to make knowledge sharing and KM successful through communication. We mostly focus on the

modifying process, i.e. dialogue, and we show how a dynamic modification of agents' knowledge bases can occur

through message exchanges, messages being knowledge chunks to be mapped onto the agents' KBs. Dialogue

takes account of both success and failure in mapping. We show that the same process helps repair its own

anomalies. We describe an architecture for agents' knowledge exchange through dialogue. Finally, we conclude

on the benefits of introducing dialogue features in knowledge management.

1 INTRODUCTION

Knowledge has mutated from a personal expertise towards a collective lore. Thus, knowledge management (KM) and modelling contain social features and deal with a society of agents. As a society, agents

interact, and the conventional aspects of interaction have attracted the attention of the KM community, from different points of view: (i) the deep relationship between knowledge and knowledge communication (Ravenscroft and Pilkington 2000); (ii) the power of the communicative process as a knowledge modifier (Parsons et al. 1998), (Zhang et al. 2004).

Although communication is an active component in

KM, most studies have dealt with cases where agents

were sufficiently close in type and knowledge in or-

der to share common ontologies. In extensive KM

systems, knowledge sharing is hampered by the lack

of common ontologies between artificial agents. In-

tegrating different designations for the same concepts

has been tackled by (Williams 2004) in a shared en-

vironment named DOGGIE ( Distributed Ontology

Gathering Group Integration Environment) allowing

negotiation of terminology among different ontolo-

gies. The author’s approach is that of a ’peer-to-peer’

situation that allows agents to share knowledge and

learn. His aim was more to demonstrate how heterogeneity in representations could be overcome, than to

focus on the dynamic process that underlies it, that is,

dialogue.

Our approach is in the same main line of thought, but

the added value is that we focus on the properties of

dialogue as a process of incremental knowledge ad-

justment. Human dialogue occurs between interlocu-

tors distinct in state, knowledge and intents. It hap-

pens when a need for knowledge occurs. It is used

when discrepancies in representations appear.

Applying dialogue requirements to KM is an issue

we will address in section 2. Although difference is

a mandatory component for the existence of dialogue, too great a distance between agents might leave no chance for a dialogue to occur. Section 2 states the

likely requirements for dialogue success and conse-

quently those for an adequate KM through interac-

tion. Section 3 presents an architecture implementing

the process between artificial agents, an instantiation

of which, dedicated to agent teaching, has been presented in (Yousfi and Prince, 2005). Teaching is one of the tasks in which knowledge sharing is best tracked (Williams 2000). This architecture has been

evaluated through its applications: the teaching appli-

cation, dedicated to conceptual knowledge revision,

presently runs as a prototype. Another application

about risk management ontologies acquisition (Makki

et al. 2006) is developed according to both model and

architecture described in this paper.


Prince V. (2006).

MODELLING AND MANAGING KNOWLEDGE THROUGH DIALOGUE: A MODEL OF COMMUNICATION-BASED KNOWLEDGE MANAGEMENT.

In Proceedings of the First International Conference on Software and Data Technologies, pages 266-271

DOI: 10.5220/0001314002660271

Copyright © SciTePress

2 DIALOGUE AS A MEANS FOR

SHARING KNOWLEDGE

Agents are considered heterogeneous when they differ in nature or in a major attribute. In this paper we restrict the definition of heterogeneity to cognitive artificial agents with the following properties: (i) different ontologies and knowledge bases (i.e. different world representations); (ii) different tasks within the system; (iii) possibly belonging to different applications that need to share knowledge, Web services or activity. Heterogeneity produces variations in building, sharing and communicating knowledge.

Theoretical Framework in Agents Knowledge

Sharing

Human beings favour dialogue as a major means of knowledge acquisition: each agent considers any fellow agent as a knowledge source 'triggered' through questioning. Information is acquired, from the answer, as a possible external hypothesis. This is the starting point of both an acquisition and a revision-based process, where the external fact, the message, is confronted with the inner knowledge source of the requesting agent. It drives the latter to proceed to derivation by reasoning. The feedback observed in natural dialogue is that the knowledge source can be addressed again to confirm understanding. Along with other researchers (Finin et al. 1997), (Zhang et al. 2004), we consider this process as translatable into the software world and interesting as an economical means to acquire, mediate and share knowledge.

The cognitive agents we are representing can be seen as entities undergoing the following cycle (Prince 1996): (i) Capture, and symmetrically, edit data. Data is every trace on a medium; (ii) Transform the captured data into information, as the output of an interpretative process on data. This result will be either stored as knowledge or discarded; (iii) Keep and increase knowledge, which, in turn, is of various types: (a) Stored information seen as useful; (b) Operating 'manuals' to interpret data; (c) Deriving modes to produce knowledge out of knowledge (reasoning modes); (d) Strategies to organise and optimise data capture, information storage, and knowledge derivation. Knowledge is by essence defined as explicitable knowledge, the only variety that can be implemented; (iv) Acquire bypasses to data interpretation and action on the information environment through time-saving procedures, e.g. developing know-how in the information field.

The four-part model is called DIKH (for Data, Information, Knowledge and know-How). KM relies mostly on the last two components: "know-How" and "Know" are distinguished according to their properties involving explicitness vs implicitness, transferability vs non-transmissibility. Since it is representable and conceptualisable, "Know" or "Knowledge" (K) has been mostly investigated by KM. "Know-how" has been claimed as embedded in expert systems, but since it is implicit it cannot be easily described. Therefore, know-how has to be assumed as an important skill of cognitive agents, but not one to be further investigated, except in very restricted areas. Since KM is at stake, we focus on the K part of the model. DIKH assumes a recursive modelling: part of knowledge derives from data, part of it derives from present knowledge, and part of it is an economical optimisation of

its organisation. The K part summarises several known elements of KM. (i) Lexical knowledge represents ontological contents, relations and organisation. (ii) Produced knowledge is the kept part of information (interpreted data). It might join either lexical knowledge (new concepts to integrate in the ontology), or production knowledge (rules, general laws). (iii) Production knowledge has been mostly investigated by research in AI: rules and reasoning, extending to meta-rules and to strategies for organising KM, are an important issue in cognitive agent modelling. (iv) The "know-how" part of knowledge, an originality of the DIKH model, builds upon profiling, preferences, and presentation, as the agent's signature key.

In single-application architectures, all agents tend to share the same "know-how" since the latter belongs to the architecture designer. In heterogeneous systems, many architectures might be confronted with each other. Web semantics and services have integrated this aspect: languages such as XML have been devoted to emphasising the know-how about knowledge presentation. The DIKH recursive modelling might easily represent the KM part of a rational natural agent (a person), as an extension from artificial agent environments to human-system environments.

Since "information" is a temporary status for knowledge, the model reduces to the representation given in figure 1. A rational cognitive agent might be designed, from the static point of view, as: (i) A set of lexical skills: ontological knowledge, relationships between names and concepts, variables and their domains; (ii) The core of a reasoning engine: local axioms, strong beliefs that help deriving other rules, and rules having a lesser status; (iii) Last, elements of belief and knowledge that help optimise the engine: those are the adaptive modes derived from experience.
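As an illustration only, the static view of a rational cognitive agent could be sketched as a small Python data structure. The class name `KModel`, its field names and the sample values are assumptions made for this sketch; the paper does not prescribe any implementation.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set


@dataclass
class KModel:
    """Hypothetical static K-model of a rational cognitive agent."""

    # (i) Lexical skills: concept name -> value domain.
    lexical_skills: Dict[str, Set[str]] = field(default_factory=dict)
    # (ii) Knowledge production modes: axioms, rules, beliefs.
    production_modes: Dict[str, List[str]] = field(
        default_factory=lambda: {"axioms": [], "rules": [], "beliefs": []}
    )
    # (iii) Adaptive modes ("shortcuts"): formalisms, preferences, strategies.
    adaptive_modes: Dict[str, List[str]] = field(
        default_factory=lambda: {"formalisms": [], "preferences": [], "strategies": []}
    )

    def knows_concept(self, name: str) -> bool:
        """Return True when the concept belongs to the agent's lexicon."""
        return name in self.lexical_skills


# Sample agent (illustrative values only).
agent = KModel()
agent.lexical_skills["risk"] = {"low", "medium", "high"}
agent.production_modes["rules"].append("high risk implies audit")
agent.adaptive_modes["formalisms"].append("XML")
```

Keeping the three parts as separate fields mirrors the three-way split of the static description, so each part can later be compared with the corresponding part of a message.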

A Message/Knowledge Chunk Exchange Theory:

A message (Jacobson and Halle 1956) can be defined as a formatted data set which: (i) is emitted as a sender's intention concerning its recipients; (ii) follows a protocol (conventions in format and exchanges); (iii) has a content; such that the whole (form, intention, protocol, contents) is supposed to


[Figure 1: the K-model: a static representation of the rational cognitive agent. Panels: Lexical skill (Lexicon: Classes, Facts, Value Domains); Knowledge Production Modes (Axioms, Rules, Beliefs); Adaptive modes, or «Shortcuts» (Formalisms, Preferences, Strategies).]

modify the internal state of the recipient agent(s). Related to artificial agents' communication, the message properties are the following: (i) Presentation: formatting properties of the message as a meta-format. (ii) Content: it is in itself a complex system that can be decomposed into:

1. How content is formalised, i.e.: (a) the elements selected in the chosen language to designate different items; (b) the composition rules used for the message.

2. The semantic content of the message: lexical data meaning and formal compositions.

3. The informational content: what the recipient agent has been able to understand from the received message.

4. The intentional content: what the sender agent has wanted to transmit.

Definition: The formal structure of a message can be described as a ternary structure composed of:

(i) Data: the lexical and syntactic items composing the message strata, equivalent to the rheme in Speech Act Theory (SAT) (Searle 1969).

(ii) Knowledge, which is itself decomposed into: 1. the knowledge necessary to encode/decode data (1); 2. the semantic content of the message, which is knowledge (2), equivalent to the topic in SAT; 3. the knowledge to embed/derive the semantic content (intentional/informational content) (3).

(iii) Formulation (the adapted terminology for "presentation" in the formal structure): style and formalism are qualitative indicators.
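The ternary structure defined above can be illustrated with a short Python sketch. The names `Message` and `MessageKnowledge`, the use of plain strings for knowledge items, and the sample values are assumptions made for illustration, not part of the model itself.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class MessageKnowledge:
    """Hypothetical container for the three knowledge sub-parts."""

    decoding: List[str]      # (1) knowledge needed to encode/decode the data
    semantic_content: str    # (2) the knowledge chunk itself
    derivation: List[str]    # (3) knowledge to embed/derive the content


@dataclass
class Message:
    """Hypothetical ternary message: data, knowledge, formulation."""

    data: List[str]          # lexical and syntactic items
    knowledge: MessageKnowledge
    formulation: str         # style/formalism indicator, e.g. a language name


# Sample message (illustrative values only).
m = Message(
    data=["ask", "risk", "definition"],
    knowledge=MessageKnowledge(
        decoding=["KQML grammar"],
        semantic_content="definition(risk)",
        derivation=["sender wants definition(risk)"],
    ),
    formulation="KQML",
)
```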

The preceding definition has a striking resemblance with the agents' K-models. Hence, the formal structure of a message and the K-model of the agent can be seen as related by a strong morphism. (i) Data in the message definition is provided by the lexical skills of both sender and recipient agents. (ii) The semantic content is the knowledge chunk exchanged between agents: it is used to enhance the recipient's lexical skills in ontological knowledge building or updating. (iii) The intentional versus informational contents of the message tackle the issue of confronting the knowledge production modes, or engines, of both interlocutors. (iv) Last, message formulation is the result of applying the sender's preferences about message exchanges, and triggers the recipient's adaptive modes to accept the message, or reject it if it is not properly designed. This explains why the exchange of messages is a natural mode of knowledge enhancement. If the message is structurally compatible with the K-model of an agent, then:

Let $A_\mu$ be the K-model of agent $\mu$. Let $m$ be the formal structure of an incoming message. The question is: is $A_\mu \cup m$ a new possible state of $\mu$'s K-model?

For this, we need an interpreted form of the message.

Definition: An interpreted form of a message is obtained through the following process: (i) Applying decoding knowledge: unification algorithms and abductive rules are used to initiate this phase; (ii) Semantic interpretation of the decoded form (informational content): deductive and inductive reasoning is used; (iii) If formulation is not a liability for interpretation, the informational content should be equal or close to the semantic content of the message.
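A deliberately simplified Python sketch of this process follows. It assumes that knowledge is a set of string items, that decoding reduces to a lexicon lookup (standing in for unification and abductive rules), and that a new K-model state is the set union of the current state and the interpreted message. The function names `interpret` and `candidate_state` are hypothetical.

```python
from typing import Optional, Set


def interpret(data: Set[str], lexicon: Set[str]) -> Optional[Set[str]]:
    """Return the informational content, or None when decoding fails.

    Assumption: decoding succeeds only if every item is in the lexicon.
    """
    unknown = data - lexicon
    if unknown:
        return None
    return set(data)


def candidate_state(k_model: Set[str],
                    informational: Optional[Set[str]]) -> Optional[Set[str]]:
    """Return the union of the K-model and the interpreted message, or None."""
    if informational is None:
        return None
    return k_model | informational


lexicon = {"risk", "audit", "hazard"}
k_model = {"risk"}
ok = candidate_state(k_model, interpret({"risk", "audit"}, lexicon))
failed = candidate_state(k_model, interpret({"risk", "threat"}, lexicon))
```

In this sketch a message containing an unknown item yields no candidate state at all, which corresponds to a failure in decoding rather than to a contradiction.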

Given the preceding results, a message, seen from the point of view of its formal structure, can be designed as a knowledge bridge between agents. Its purpose is to: (i) allow agents to update their knowledge through other agents' knowledge; (ii) fix knowledge discrepancies between agents. Let m be the formal structure of the message to be exchanged between two agents µ and ν. The three components of m, data, knowledge and formulation, can be designed as follows: (i) The data of m belongs to the lexicon of µ as a sender, and should also belong to the lexicon of ν. (ii) The knowledge in m needs the corresponding items of µ's and ν's knowledge engines. However, if the message is supposed to increase the recipient's knowledge, this part also comprises knowledge that is either new or whose extensions are new to the recipient. (iii) Last, the formulation is the formalism used for the bridge (language or protocol). It requires adaptation from the sender to the recipient, and vice versa. Figure 2 shows a representation of the formal structure of a message as a bridge between cognitive agents.

Dialogue as Knowledge Adjustment Seeking:

A theory of messages suggests dealing with the following cases: (i) The message has been misinterpreted, or not decoded at all, which is a failure in communication (an issue we will not tackle here); (ii) The message, being correctly interpreted, has raised contradictions and thus has failed to reach its goal; (iii) A combination of both cases, a common situation in natural dialogues. We will focus here on contradiction in knowledge or belief revision.

Belief revision appears as a compulsory process when: (i) The recipient finds its knowledge contradicted by

ICSOFT 2006 - INTERNATIONAL CONFERENCE ON SOFTWARE AND DATA TECHNOLOGIES


[Figure 2: Modelling the message as a bridge between two K-models. Agent µ and Agent ν each comprise lexical skills, a knowledge engine and adaptive skills; the message between them comprises data, knowledge and formulation.]

what the others know (this happens when launching the primitive DetectConflict described in the next section); (ii) The informational content the recipient has derived from the message seems irrelevant to the dialogue situation. The first case is a pure KM problem. An agent needs to receive a message, i.e., a future knowledge chunk of its own K-model, and this leads to a major revision of its beliefs and its lexical skills. Therefore a "why"-type of dialogue is initiated on the recipient's behalf, and revision can be undertaken as soon as the recipient is convinced of the quality of the received knowledge. This has been mostly investigated in (Parsons et al. 1998) and (Zhang et al. 2004). They have shown that not only is the contradicted agent likely to change, but also its contradictor, since the latter needs to restructure its own KB in order to convince the other. Our theory reaches the same results since the "why-questions", seen as messages from the former recipient, force the sender to use its skills in formulation and in knowledge derivation. In (Yousfi and Prince, 2005), we have shown how an artificial "teacher" agent modifies its KB during the process, at least at the student model level; furthermore, while teaching, it might spot the defects in its own knowledge.

The other case is the well-known problem of misunderstanding. Whether misunderstanding arises from decoding errors or mistaken reasoning, the fact is that the following equation: informational content (INFC) = semantic content (SC) = intentional content (INTC) is sometimes not achieved between formal agents. Until now, the latter tended to abort interaction whenever it failed. However, since artificial agents need to be more robust, they have to be provided with functionalities helping them to pursue dialogue further. Our theory provides some heuristics for approximating this double equality: (i) Reformulation dialogues tend to achieve the first equality, INFC = SC: by using other knowledge items or other laws, maybe closer to its present K-model, the recipient agent might reach a state where it finally understands the message; (ii) Every careful choice of formulation on the sender's behalf might help provide the second equality, SC = INTC. Argumentation/explanation dialogues play an important role in trying to reach a good approximation.
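The double equality can be turned into a small decision rule, sketched here in Python. The sketch assumes that each content is a set of propositions compared by strict equality, and it maps a broken second equality to an explanation dialogue; both choices, as well as the function name `next_dialogue`, are assumptions made for illustration.

```python
from typing import Set


def next_dialogue(infc: Set[str], sc: Set[str], intc: Set[str]) -> str:
    """Pick the dialogue type suggested by the first broken equality."""
    if infc != sc:
        return "reformulation"   # recipient side: INFC = SC not reached
    if sc != intc:
        return "explanation"     # sender side: SC = INTC not reached
    return "none"                # INFC = SC = INTC holds


# The recipient understood less than the message says.
first = next_dialogue({"a"}, {"a", "b"}, {"a", "b"})
# The message says less than the sender intended.
second = next_dialogue({"a"}, {"a"}, {"a", "b"})
# Everything matches.
third = next_dialogue({"a"}, {"a"}, {"a"})
```

A less strict variant could replace equality with a similarity threshold, which would be closer to the idea of approximating the double equality.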

In conclusion, whenever agents need to interact, it is not a problem if interaction is not a successful one-shot process. Dialogue is an incremental process acting as a mutual adjustment mechanism that repairs both its own failures and the agents' mutual discrepancies. However time-consuming, dialogue is less costly than a wrong action based on false beliefs. It is thus very important in decision-making tasks where crucial stakes are involved.

3 A GENERAL ARCHITECTURE

FOR KM THROUGH

DIALOGUE

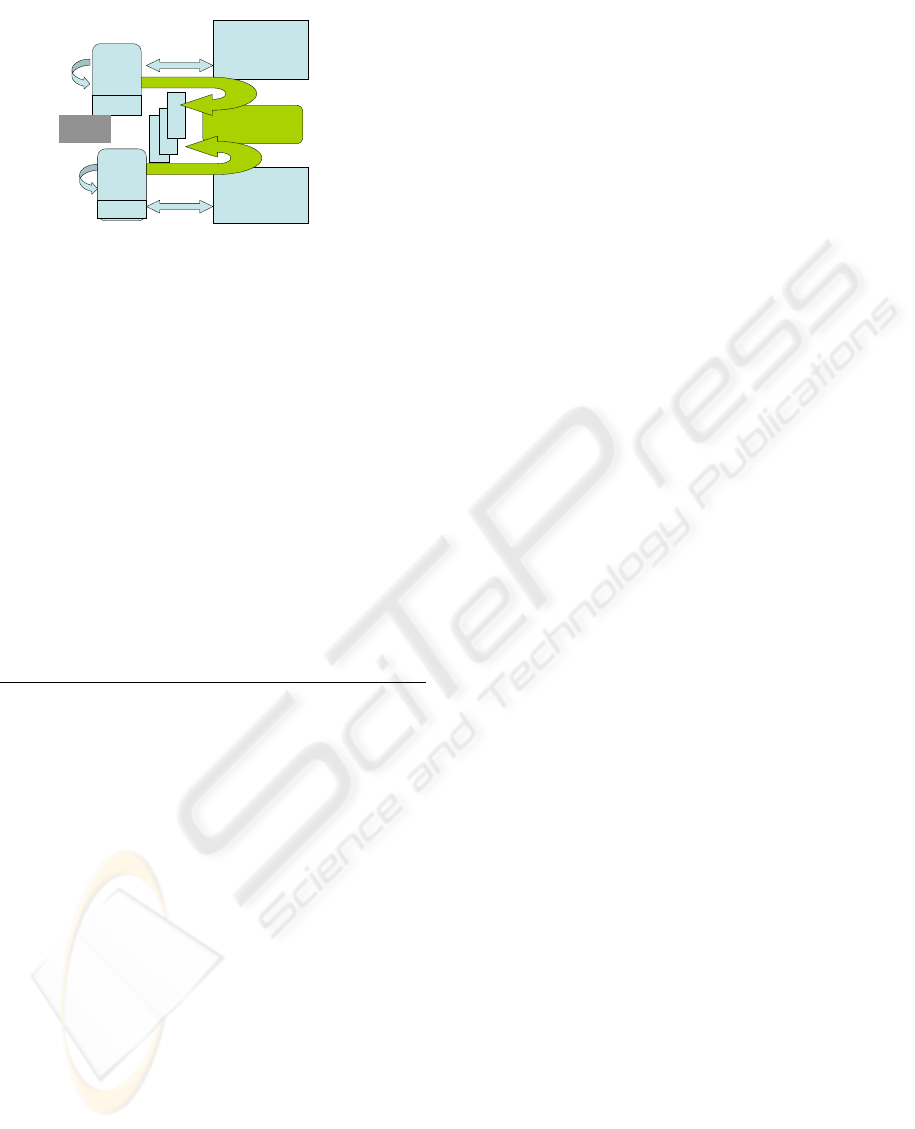

The general software architecture modelling a knowledge sharing and revision activity between two rational artificial agents is presented in figure 3. The components that have to be implemented are the agents' K-models, each comprising a model of the other agent, the communication primitives allowing message exchanges, and the dialogue strategies underlying the communication protocol. World and activity models are provided either from each agent's environment or activity, or from the applications and services they need to address or from which they are issued.

Implementing Agents:

Implementing the K-model is an easy task since it involves: (i) The local agent ontology and lexical skills; (ii) A set of Reasoning Primitives, an access to Dialogue Strategies (sharable between rational agents) and a set of local rules for knowledge and message construction; (iii) One or many representation formalisms with which the agent processes (e.g. XML for structure, KQML for communication acts (Finin et al. 1997), etc.).

Sharable Reasoning Primitives are the following:

1. Add(K): adds knowledge to the K-model.

2. Revise(K, O): triggers an algorithm trying to attach parts of K to the Ontology, described in (Yousfi and Prince, 2005). It provides a flag REVISEF indicating success (or not) in attachment, and where it happens.

3. DetectConflict(K): if REVISEF = false, detects conflictual attachments. Its result is issuing a CreateMessage(M, OtherAgent) (see next subsection).
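The three primitives can be sketched in Python under strong simplifying assumptions: the ontology is a dictionary mapping each concept to its parent, a knowledge chunk is a `(concept, parent)` pair, and conflict detection returns a description string instead of issuing a CreateMessage. None of these choices comes from the paper or from the algorithm in (Yousfi and Prince, 2005); they only illustrate how the REVISEF flag and the attachment point could be reported.

```python
from typing import Dict, Optional, Tuple

Ontology = Dict[str, str]    # hypothetical encoding: concept -> parent concept
Chunk = Tuple[str, str]      # hypothetical chunk: (concept, parent)


def add(ontology: Ontology, concept: str, parent: str) -> None:
    """Add(K): store a knowledge chunk in the K-model."""
    ontology[concept] = parent


def revise(chunk: Chunk, ontology: Ontology) -> Tuple[bool, Optional[str]]:
    """Revise(K, O): try to attach the chunk; return (REVISEF, attachment point)."""
    concept, parent = chunk
    if parent != "root" and parent not in ontology:
        return False, None                   # nowhere to attach the chunk
    if concept in ontology and ontology[concept] != parent:
        return False, ontology[concept]      # already attached elsewhere
    add(ontology, concept, parent)
    return True, parent


def detect_conflict(chunk: Chunk, ontology: Ontology) -> Optional[str]:
    """DetectConflict(K): describe a conflicting attachment, if any."""
    concept, parent = chunk
    current = ontology.get(concept)
    if current is not None and current != parent:
        return f"{concept}: {current} vs {parent}"
    return None


ontology = {"animal": "root", "plant": "root"}
first = revise(("dog", "animal"), ontology)
second = revise(("dog", "plant"), ontology)
conflict = detect_conflict(("dog", "plant"), ontology)
```

In a fuller implementation the conflict description would become the content of a message sent to the other agent.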

Two other modal primitives are necessary for a situa-

tion where knowledge has to be shared: (i) Wanted


(applied to knowledge from the O or A parts) is the situation that launches dialogue; (ii) Believed (applied to knowledge from the O, A or P parts) is what the agent assumes to be true without having checked it. By default, all knowledge in the K-model that is neither wanted nor believed is considered 'known' until contradicted. Accessible Dialogue Strategies are common and shared rules about dialogue protocol (cf. the Dialogue Strategies component). Whenever an agent is in a situation to communicate, it launches the creation of the 'Other Agent Model'. The latter is a subcomponent of the K-model, created and updated with a procedure inspired from the following (we have chosen here an application where agents accept several programming languages):

CreateOtherAgentModel(Ag);
Proc main
    New O; New A; New P;
    Add(Wanted(K), O);
    Believed(A) <- Add; Revise; DetectConflict;
    Believed(P) <- KQML; XML; Java
Endproc

Comment: The agent creates a new K-model of its interlocutor agent with its three parts: Ontology (O), Axioms (A) and Presentation (P). It bootstraps the ontology with the knowledge it wants (it assumes that the other has it). By default, the A part of the OtherAgentModel gets the sharable Reasoning Primitives, and the P part gets the languages the agent itself understands. Message sending, and especially message reply, will let it know whether its assumptions are right or wrong. An agent creates such a model to trigger communication. The next logical step is to create a message asking the interlocutor about the wanted knowledge.
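The bootstrapping described in this comment might look as follows in Python. This is a sketch under assumptions: the class `OtherAgentModel`, the function `create_other_agent_model` and the representation of each part as a simple collection of strings are hypothetical.

```python
from dataclasses import dataclass, field
from typing import List, Set


@dataclass
class OtherAgentModel:
    """Hypothetical model of the interlocutor: O, A and P parts."""

    ontology: Set[str] = field(default_factory=set)          # O part
    axioms: List[str] = field(default_factory=list)          # A part
    presentation: List[str] = field(default_factory=list)    # P part


def create_other_agent_model(wanted: Set[str],
                             own_languages: List[str]) -> OtherAgentModel:
    """Build the default beliefs an agent holds about its interlocutor."""
    model = OtherAgentModel()
    # The wanted knowledge is assumed to be known by the other agent.
    model.ontology |= wanted
    # The other agent is believed to share the reasoning primitives.
    model.axioms = ["Add", "Revise", "DetectConflict"]
    # The other agent is believed to understand the same languages.
    model.presentation = list(own_languages)
    return model


model_of_b = create_other_agent_model({"definition(risk)"}, ["KQML", "XML", "Java"])
```

These defaults are only beliefs: replies from the interlocutor would confirm or invalidate each of them.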

Communication Primitives

Three basic communication primitives are necessary: the first is CreateMessage, the second is AcceptMessage, and the last is RejectMessage. All deal with three variables: D standing for data, K standing for knowledge and F for formulation. The first variable, D, receives the lexical choice after the K part has been expressed in a logical form, the INTC (intentional content), and translated into the language instantiating the F part. As an example, we present CreateMessage below. The other primitives follow a similar description. RejectMessage returns the value of the part responsible for rejection (language, or unknown data), whereas AcceptMessage triggers a matching and revision procedure within the recipient agent's K-model, which might in turn end up with another CreateMessage primitive. These primitives also use the basic functions Sendto and Ack (for acknowledge), which deal directly with interfacing with the other agent.

CreateMessage(M, Ag, L)    ; arguments are the Message, to the Agent, in a Language
Proc main
    New D; New K; New F;
    Set F to L    ; sets the formulation in a given language; helps adjusting to the other agent's language if the value of L is not accepted
    INTC <- Wanted(Kn)    ; the intentional content of the message is the wanted knowledge
    F <- TranslateIn(L, INTC)
    D <- D(F)    ; message data is the data part of the translated message
    K <- INTC    ; message knowledge is the wanted knowledge
    Sendto(M, Ag, R)    ; sends the message to the agent, typing it with a 'role' (to be explained in the next subsection)
Endproc;
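The same procedure can be sketched in runnable Python. The translation function, the tokenisation used for the data part, the dictionary layout of the message and the `record` stand-in for Sendto are all assumptions made for this illustration; in particular, `translate_in` does not produce real KQML.

```python
from typing import Callable, Dict, List, Tuple

Message = Dict[str, object]


def translate_in(language: str, intc: str) -> str:
    """Hypothetical TranslateIn: tag the content with the target language."""
    return f"({language} :content {intc})"


def create_message(wanted: str, agent: str, language: str, role: str,
                   send_to: Callable[[Message, str, str], None]) -> Message:
    """Build a message with its D, K and F parts, then hand it to send_to."""
    intc = wanted                                  # INTC <- Wanted(Kn)
    formulation = translate_in(language, intc)     # F <- TranslateIn(L, INTC)
    # D <- D(F): the data part is the list of lexical items of the formulation.
    data = formulation.replace("(", " ").replace(")", " ").split()
    message: Message = {"D": data, "K": intc, "F": formulation}  # K <- INTC
    send_to(message, agent, role)                  # Sendto(M, Ag, R)
    return message


outbox: List[Tuple[str, str, Message]] = []


def record(message: Message, agent: str, role: str) -> None:
    """Stand-in for Sendto: store the message instead of sending it."""
    outbox.append((agent, role, message))


msg = create_message("definition(risk)", "B", "KQML", "askfor-knowledge", record)
```

Passing the sending function as an argument keeps the sketch independent of any transport layer.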

Dialogue Strategies: Message-level and Scripts:

Message Roles: The exchange of communication primitives follows dialogue strategies available to every artificial agent. A strategy is related to the agent's goal and the satisfaction of its needs. It can be typed in order to extract information from the other agent or to make it perform an action or a task. Thus, every message plays a 'role' in a dialogue instantiation, according to a strategy. Roles have been labelled after the illocutionary functions of Speech Act Theory (Searle 1969) or according to the functional roles theory (as in (Yousfi and Prince, 2005)), and depend on the task or activity type. At the message level, roles are the materialisation of Dialogue Strategies. For instance, the most used speech acts in agent modelling and communication are performative (i.e. the message runs an applet) or directive (the message is a command to the other agent). The functional roles we have used most in agent learning are askfor-knowledge, askfor-explanation, give-knowledge, give-explanation, assert-satisfaction or assert-unsatisfaction. Those were modals applied to the CreateMessage primitive and transmitted with the message. In this paper, we present a generalisation of the architecture and components, and one can notice that the role R is sent as an argument of the Sendto command.

Dialogue Strategies from expectations: When an agent issues a message with a given role, it expects in turn a reply with a compatible role. The adjustment script available to agents follows these guidelines:

CreateMessage(M, Ag, L);
Expect(AcceptMessage(M', OtherAgent, L), R)    ; the agent expects an understandable reply with a given role
If no (AcceptMessage(M', OtherAgent, L)) or RejectMessage(M', Reason) then    ; when no answer, or an answer with decoding problems, is provided

Call RepairCommunication else the strategy


[Figure 3: Architecture of Communicating Agents Sharing Dialogue Strategies, but Addressing Different Applications and Activities. Agent A (K-model, including a model of B) and Agent B (K-model, including a model of A) exchange messages under shared dialogue strategies (adjustment and repair), with knowledge feedback; each agent communicates with its own applications and Web services through a model of the world and activity.]

shifts to the other script

TransformIn(M, O, A)    ; the message is transformed into its parts and matched with ontology and axioms
Revise(O, A)    ; reasoning is applied on the added elements
If Wanted(Kn) then
    R <- 'ok'
    CreateMessage(M, OtherAgent, L)    ; the agent has found the wanted knowledge and asserts its satisfaction
else
    R <- need    ; else the agent sets the role to its need
    Call Adjustment    ; and calls recursively the strategy

Let us note that there is a timeout associated with recursive calls, i.e. if no replies are given or if the dialogue enters an endless loop, then the dialogue strategy component stops communication.
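The adjustment script and its timeout can be approximated by a bounded loop, sketched here in Python. The sketch makes several assumptions: the recursion is unrolled into a loop, the timeout is modelled as a maximum number of turns rather than a clock, knowledge is a set of strings, revision is reduced to a set union, and the `ask` callback stands in for the CreateMessage/Expect pair.

```python
from typing import Callable, Optional, Set


def adjust(wanted: str, known: Set[str],
           ask: Callable[[str], Optional[Set[str]]],
           max_turns: int = 3) -> str:
    """Run the adjustment strategy for at most max_turns exchanges."""
    for _ in range(max_turns):
        reply = ask(wanted)          # send the request and wait for a reply
        if reply is None:
            return "repair"          # no reply or undecodable reply
        known |= reply               # match and revise (here: a set union)
        if wanted in known:
            return "ok"              # satisfaction is asserted
    return "timeout"                 # communication is stopped


# Scripted interlocutor: an unhelpful reply first, then the wanted knowledge.
replies = iter([{"hazard"}, {"definition(risk)"}])
known = {"risk"}
outcome = adjust("definition(risk)", known, lambda _wanted: next(replies))
```

A silent interlocutor leads to the repair script, while an interlocutor that keeps answering beside the point exhausts the turn budget and ends the dialogue.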

Unfortunately we have no room here to present the

FailureCommunication script but let us say that it

deals with reformulation (shifting languages) and ex-

planation roles and strategies in messages exchanges.

4 CONCLUSION

The model presented here and some elements of its architecture have been instantiated in a learning environment for cognitive artificial agents. It is sufficiently general to be implemented within different applications and activities, as long as they need an advanced communication framework for knowledge sharing, revision and communication. The originality of the model lies in modelling the dynamic process in KM, i.e. dialogue, as the crucial component in knowledge revision, and not only considering the static dimension of KM. What has not been explicitly detailed here is that the same theory applies to Human-Computer interaction and to Collective vs Individual agents' KM. The issue that is dealt with goes much further than artificial agent programming. But what we have shown here is that, even restricted to a formal and decidable framework, the theory takes into account knowledge conflict and provides it with solutions inspired by natural agents' behaviour.

REFERENCES

J.L. Austin. How to Do Things with Words, ed. J. O. Urmson and Marina Sbisà. Harvard University Press, Cambridge, Mass. 1975 (3rd edition).

J. Castelfranchi. Through the Minds of the Agents. Journal of Artificial Societies and Social Simulation, 1(1). 1998.

T. Finin, Y. Labrou and J. Mayfield. KQML as an agent communication language. In Jeff Bradshaw (Ed.), Software Agents. MIT Press, Cambridge. 1997.

S. Franklin and A. Graesser. Is it an Agent, or just a Program?: A Taxonomy for Autonomous Agents. Proceedings of the Third International Workshop on Agent Theories, Architectures, and Languages. Springer-Verlag. 1996.

R. Jacobson and M. Halle. Fundamentals of Language. Mouton and Co., The Hague. 1956.

J. Makki, A.M. Alquier and V. Prince. Learning risk ontologies from both corpora and cognitive agents. LIRMM-CNRS research report. Montpellier, France. 2006.

S. Parsons, C. Sierra and N. Jennings. Agents that reason and negotiate by arguing. Journal of Logic and Computation, 8(3), pp. 261–292. 1998.

V. Prince. Pour une informatique cognitive dans les organisations: le rôle central du langage (Towards Cognitive Informatics in Organisations: the Central Role of Language). Editions Masson. Paris, France. 1996.

A. Ravenscroft and R.M. Pilkington. Investigation by Design: Developing Dialogue Models to Support Reasoning and Conceptual Change. International Journal of Artificial Intelligence in Education, 11(1), pp. 273–298. 2000.

J. Searle. Speech Acts: An Essay in the Philosophy of Language. Cambridge University Press. Cambridge. 1969.

Andrew B. Williams. The Role of Multiagent Learning in Ontology-based Knowledge Management. AAAI 2000 Spring Symposium on Bringing Knowledge to Business Processes, pp. 175-177. 2000.

Andrew B. Williams. Learning to Share Meaning in a Multi-Agent System. Autonomous Agents and Multi-Agent Systems, 8(2), pp. 165-193. 2004.

M. Wooldridge and S. Parsons. Languages for negotiation. Proceedings of ECAI2000, pp. 393–400. 2000.

M. Yousfi-Monod and V. Prince. Knowledge acquisition modelling through dialogue between cognitive agents. Proceedings of ICEIS05, International Conference on Enterprise Information Systems, pp. 201-206. 2005.

D. Zhang, N. Foo, T. Meyer and R. Kwok. Negotiation as Mutual Belief Revision. Proceedings of AAAI 2004. 2004.
