IMPROVED OFF-LINE INTRUSION DETECTION

USING A GENETIC ALGORITHM

Pedro A. Diaz-Gomez

∗

Ingenieria de Sistemas, Universidad El Bosque

Bogota, Colombia

Dean F. Hougen

Robotics, Evolution, Adaptation and Learning Laboratory (REAL Lab)

School of Computer Science, University of Oklahoma

Norman, OK, USA

Keywords:

Genetic Algorithms, Intrusion Detection, Off-Line Intrusion Detection, Misuse Detection.

Abstract:

One of the primary approaches to the increasingly important problem of computer security is the Intrusion

Detection System. Various architectures and approaches have been proposed including: Statistical, rule-based

approaches; Neural Networks; Immune Systems; Genetic Algorithms; and Genetic Programming. This paper

focuses on the development of an off-line Intrusion Detection System to analyze a Sun audit trail file. Off-line

intrusion detection can be accomplished by searching audit trail logs of user activities for matches to patterns

of events required for known attacks. Because such search is NP-complete, heuristic methods will need to

be employed as databases of events and attacks grow. Genetic Algorithms can provide appropriate heuristic

search methods. However, balancing the need to detect all possible attacks found in an audit trail with the need

to avoid false positives (warnings of attacks that do not exist) is a challenge, given the scalar fitness values

required by Genetic Algorithms. This study discusses a fitness function independent of variable parameters to

overcome this problem. This fitness function allows the IDS to significantly reduce both its false positive and

false negative rate. This paper also describes extending the system to account for the possibility that intrusions

are either mutually exclusive or not mutually exclusive.

1 INTRODUCTION

The goal of a security system is to protect the most

valuable assets of an organization: data and informa-

tion. Different organizations will have very differ-

ent security policies and requirements depending on

their missions. This is the case for a bank, an Inter-

net Service Provider, a university, and a consulting

firm. However, all have data in some form, and their

security mechanisms are tasked with protecting the

privacy, integrity, and availability of the data. Many

efforts have been made to accomplish this goal: se-

curity policies, firewalls, Intrusion Detection Systems

(IDSs), anti-virus software, and standards to config-

ure services in operating systems and networks (Bace,

2000). This paper focuses on one of those topics: In-

trusion Detection Systems using the audit trail file.

The need for automated audit trail analysis was out-

lined a quarter century ago (Anderson, 1980) and it is

still present. Audit records are used and statistics are

∗

Conducting research at the Robotics, Evolution, Adap-

tation and Learning Laboratory (REAL Lab), School of

Computer Science, University of Oklahoma.

gathered and matched against profiles. Information

in the matching profiles then determines what rules to

apply to update the profiles, check for abnormal activ-

ity, and report anomalies detected (Denning, 1986).

A key aspect of an IDS is the hypothesis that ex-

ploitation of a system’s vulnerabilities is based in the

abnormal use of the system (Denning, 1986). In one

form or another, all IDSs take into account this as-

sumption. Some, like IDES, NIDES, and EMER-

ALD (Bace, 2000), use statistics, while others use

a learning approach like Neural Networks (Bace,

2000), immune Systems (Forrest et al., 1994), Ge-

netic Algorithms (M

´

e, 1998), and Genetic Program-

ming (Crosbie and Spafford, 1995).

This paper presents a tool to perform misuse detec-

tion, using Sun audit trail files (Anonymous, 2000),

and uses the guidelines of a previously proposed

IDS (M

´

e, 1998) based on Genetic Algorithms (GAs).

To understand this, we first present the basics of com-

puter security (Section 2) and Genetic Algorithms

(Section 3). This is followed by a brief introduction

to the previous GA–based IDS (Section 4). Next, our

own improved IDS is covered thoroughly (Section 5),

followed by conclusions and future work (Section 6).

66

A. Diaz-Gomez P. and F. Hougen D. (2005).

IMPROVED OFF-LINE INTRUSION DETECTION USING A GENETIC ALGORITHM.

In Proceedings of the Seventh Inter national Conference on Enterprise Information Systems, pages 66-73

DOI: 10.5220/0002553100660073

Copyright

c

SciTePress

2 COMPUTER SECURITY

An Intrusion Detection System (IDS) is a system that

monitors and detects intrusions, or abnormal activi-

ties, in a computer or computer network. The IDS re-

ports corresponding alarms and may take immediate

action on the intrusions (Tjaden, 2004).

An intrusion is defined as an attempt to gain ac-

cess to a system by an unauthorized user. Misuse

refers to attempts to exploit weak points in the com-

puter or the abuse of existing system privileges. Ab-

normal activity means significant deviations from the

normal operation of the system or use of the system

by users (Tjaden, 2004).

Intrusion detection, then, is the process of monitor-

ing computer networks and systems for violations of

security policy. In the simplest terms, intrusion detec-

tion systems consist of three functional components:

1. an information source that provides a stream of

event records,

2. an analysis engine that finds signs of intrusions, and

3. a response component that generates reactions

based on the outcome of the analysis engine (Bace,

2000).

In order to get information for intrusion analysis, an

audit trail is often used. According with the Rainbow

Series of computer security documents, outlined by

the Department of Defense (Bace, 2000), the goals of

the audit mechanism are:

• to allow the review of patterns of access,

• to allow the discovery of both insider and outsider

attempts to bypass protection mechanisms,

• to allow the discovery of a transaction of a user

from a lower to a higher privilege level,

• to serve as a deterrent to users’ attempts to bypass

system-protection mechanisms, and

• to serve as a yet another form of user assurance that

attempts to bypass the protection will be recorded

as discovered.

The need for automatic audit trail review to sup-

port security goals has been well documented with the

matrix in Table 1 suggested for classifying risks and

threats to computer systems (Anderson, 1980).

Table 1: Threat matrix. Redrawn with minor modifications

from Anderson, 1980

Not authorized to Authorized to

use data/program use data/program

Not authorized CASE A Blank

to use computer External Penetration

Authorized to CASE B CASE C

use computer Internal Penetration Misfeasance

This suggests a taxonomy for classifying risks and

threats to computer systems that differentiates be-

tween external and internal sources of problems. This

articulation has been useful in structuring require-

ments for audit trail content (Bace, 2000). Accord-

ing to this classification, this paper focuses on internal

penetration—an audit trail file of a authorized user is

analyzed in order to get misuse.

A formal definition of security says that it must

guaranty confidentiality, integrity, and availability.

Confidentiality refers to the fact that the information is

only known by authorized users. Integrity means that

the information is protected from alteration. Avail-

ability means that the system operates as it was de-

signed; it means, for example, that users have access

to it when they need it, where they need it, and in the

form they need it.

Another crucial aspect of any system’s security is

its security policy. A security policy is the set of

practices that is explicitly stated by an organization

in order to protect sensitive information (Crosbie and

Spafford, 1995).

The content of most security policies is driven by

a desire to address threats. A threat is defined as any

event that has the potential to harm a system. This

harm can be access of data by an unauthorized user,

destruction or modification of data, or denial of ser-

vice (Bace, 2000).

Security problems in computer systems result from

vulnerabilities. Vulnerabilities are weaknesses in sys-

tems that can be exploited in ways that violate secu-

rity policy. Although threat and vulnerability are in-

trinsically related, they are not the same. Threat is the

result of exploiting one or more vulnerabilities. Intru-

sion detection is designed to identify and respond to

both (Bace, 2000).

IDSs can be classified as host-based, multihost-

based, and network-based (Tjaden, 2004). Host-

based IDSs monitor a single computer using the audit

trail of the operating system whereas network-based

IDSs monitor computers on a network by scrutinizing

the audit trail of multiple hosts and network traffic.

A multihost-based IDS analyzes data from multi-

ple computers. Usually a module of the IDS runs

on each individual computer and sends reports to a

special module, sometimes called a director, running

on one machine. Since the director receives informa-

tion from the other computers, it can correlate this in-

formation to recognize intrusions that host-based sys-

tems would probably miss, such as worms. A host-

based IDS may not notice that type of intrusion. A

multihost-based IDS, with its data from a number of

different computers, would have a much better chance

of recognizing a worm as it spreads (Tjaden, 2004).

This paper deals with a host-based IDS, and an au-

dit trail file generated by a Sun machine is analyzed.

IMPROVED OFF-LINE INTRUSION DETECTION USING A GENETIC ALGORITHM

67

3 GENETIC ALGORITHMS

Genetic Algorithms (GAs) belong to the field of evo-

lutionary computation and have been widely used in

problems that require searching through a huge num-

ber of possibilities for solutions (Mitchell, 1998). The

strength behind GAs resides in the fact that the search

space is traversed in parallel by proposing solutions,

at the beginning randomly generated, and those solu-

tions are continuously evaluated with a fitness func-

tion.

Each set of solutions proposed is called a popula-

tion, and the first set is called the initial population.

Once all members in the initial population are evalu-

ated and assigned a fitness value, the selection mech-

anism is applied in order to produce the next genera-

tion.

The selection mechanism is applied in order to

choose parents that are going to reproduce. Often par-

ents are selected proportionally to their fitness value.

One common way to conceive of this is to imag-

ine mapping the fitness value of each individual to

a roulette wheel, where a greater fitness equates to a

larger space on the wheel. Once parents are chosen,

crossover and mutation are applied to them in order

to produce offspring.

Crossover exchanges subparts of parents (typically

two), while mutation changes randomly a particu-

lar part of an individual. The process of selection,

crossover, and mutation gives the next generation, and

the process is repeated until a solution is found or un-

til resources are exhausted.

4 GASSATA

One motivation for the topic of this research was the

paper “GASSATA, A Genetic Algorithm as an Alter-

native Tool for Security Audit Trail Analysis” (M

´

e,

1998), so let us analyze the theory behind it.

GASSATA is an off-line tool that increases security

audit trail analysis efficiency. The goals of this ap-

proach are the following:

• to investigate misuse detection, i.e., to determine if

the events generated by a user correspond to known

attacks, and

• to search in the audit trail file for the occurrence of

attacks by using a heuristic method, Genetic Al-

gorithms, because this search is an NP-complete

problem.

This approach is shown schematically in Figure 1.

The audit subsystem recognizes various kinds of

events (such as changing to a particular directory or

copying a file) which are recorded in the audit trail

file. The Syntax Analyzer classifies those audit events

Vector

Weighted

Attack−Event

Audit Trail

In

Matrix

I

Syntax

Analyser

Genetic

Algorithm

(OV)

(W) (AE)

Observed

Vector

Figure 1: Prototype of GASSATA.

and generates the Observed Vector (OV ). The Ge-

netic Algorithm module finds the hypothesized vec-

tor I that maximizes the product W · I, subject to

the constraint (AE · I)

i

≤ OV

i

profiles where W is

a weight vector that reflects the priorities of the se-

curity manager, AE is the Attacks-Event matrix that

correlates sets of events with known attack profiles,

1 ≤ i ≤ N

a

, and N

a

is the number of known attack

profiles. The duration of the audit session was cho-

sen as 30 minutes and four (4) types of user were de-

fined: inexperienced, novice developer, professional,

and UNIX–intensive user.

The fitness function suggested in GASSATA is:

F (I) = α +

N

a

X

i=1

W

i

∗ I

i

− β ∗ T

2

. (1)

The goal of each component of the equation is as fol-

lows:

• α: To maintain F(I) > 0, and therefore maintain

diversity in the population.

• I: To allow for hypotheses of attacks. The system

is rewarded for hypothesizing attacks, particularly

those of greatest concern to the security manager.

This is generated randomly in the first generation.

• β: To provide a slope for the penalty function.

• T: To count the number of times (AE · I)

i

> OV

i

.

The system is penalized for hypothesizing sets of

attacks that could not have occurred, given the ob-

servations.

Experimental results for simulated attacks have

been reported (M

´

e, 1998).

5 AN IMPROVED IDS

As outlined before, intrusion detection systems con-

sist principally of three functional components:

1. an information source that provides a stream of

event records,

2. an analysis engine that finds signs of intrusions, and

ICEIS 2005 - ARTIFICIAL INTELLIGENCE AND DECISION SUPPORT SYSTEMS

68

3. a response component that generates reactions

based on the outcome of the analysis engine (Bace,

2000).

In order to get information for intrusion analysis,

an audit trail is often used. In this research we used

the Sun audit trail file (Anonymous, 2000).

5.1 The Security Audit Trail

Security auditing is the formal tracking and analysis

of actions taken by computers’ users. This process is

necessary in order to control current security policies

and detect anomalies, abuse, or misuse of the system

by users.

In order to provide individual user accountabil-

ity, the computing system identifies and authenticates

each user. Besides that, computers provide the pos-

sibility to register actions taken by users. Audit data

corresponds, then, to recorded actions taken by iden-

tifiable users, associated under the unique user iden-

tifier (ID). All processes and activity made by users

are recorded in the audit trail file. In a sense, audit

data is the complete recorded history of a users’ sys-

tem activity that can be gathered for an after-the-fact

investigation or to determine the effectiveness of ex-

isting security controls (Anonymous, 2000).

The audit trail data used in this research was down-

loaded from the MIT Lincoln Laboratory (Fried and

Zissman, 1998), and it has activity from various users.

Since GASSATA (M

´

e, 1998) works its input by user,

the file from was filtered by user.

The Solaris audit subsystem, called the Sun-

SHIELD Basic Security Module (BSM), is the mod-

ule responsible for the accountability of users’ ac-

tivity on the system. The file generated by the

BSM has records that describe user-level and kernel

events. Each record consists of tokens that identify

the process that performed the event, the objects on

which it was performed, and the objects’ attributes,

such as the owners or modes.

5.2 The Analysis Engine

Once the audit data is recorded, it must be reviewed

on a regular basis in order to maintain effective oper-

ational security. Administrators who review the audit

data must watch for events that may signify abnormal

use of the system. Some examples include:

• trying to change sensitive information on records

of files requiring higher privilege,

• killing critical processes,

• trying to access different user’s files,

• probing the system,

• installing of unauthorized, potentially damaging

software, and

• exploiting a security vulnerability to gain higher or

different privileges.

In order to provide system administrators with the

ability to effectively audit user actions, the software

developed, as suggested for GASSATA (M

´

e, 1998),

provides the capability to read the audit trail and per-

form analysis of those records based on known intru-

sions. The program developed to simulate GASSATA

consists of two parts: the first one, the scanner, reads

the audit trail to classify and count the user’s events,

and the second one, the analysis engine, is a Genetic

Algorithm. In schematic form, the software devel-

oped has the same architecture shown in Figure 1.

5.2.1 Data Structures

The scanner looks at all the audit trail records for a

user and counts the different kind of events. The out-

put of this program is an array of size N

a

called the

Observed Vector (OV ). Each position of this array

corresponds to the number of occurrences of an event

according with the classification stated before.

The Genetic Algorithm receives as input the Ob-

served Vector and the Attack-Events matrix (AE) of

known attacks (M

´

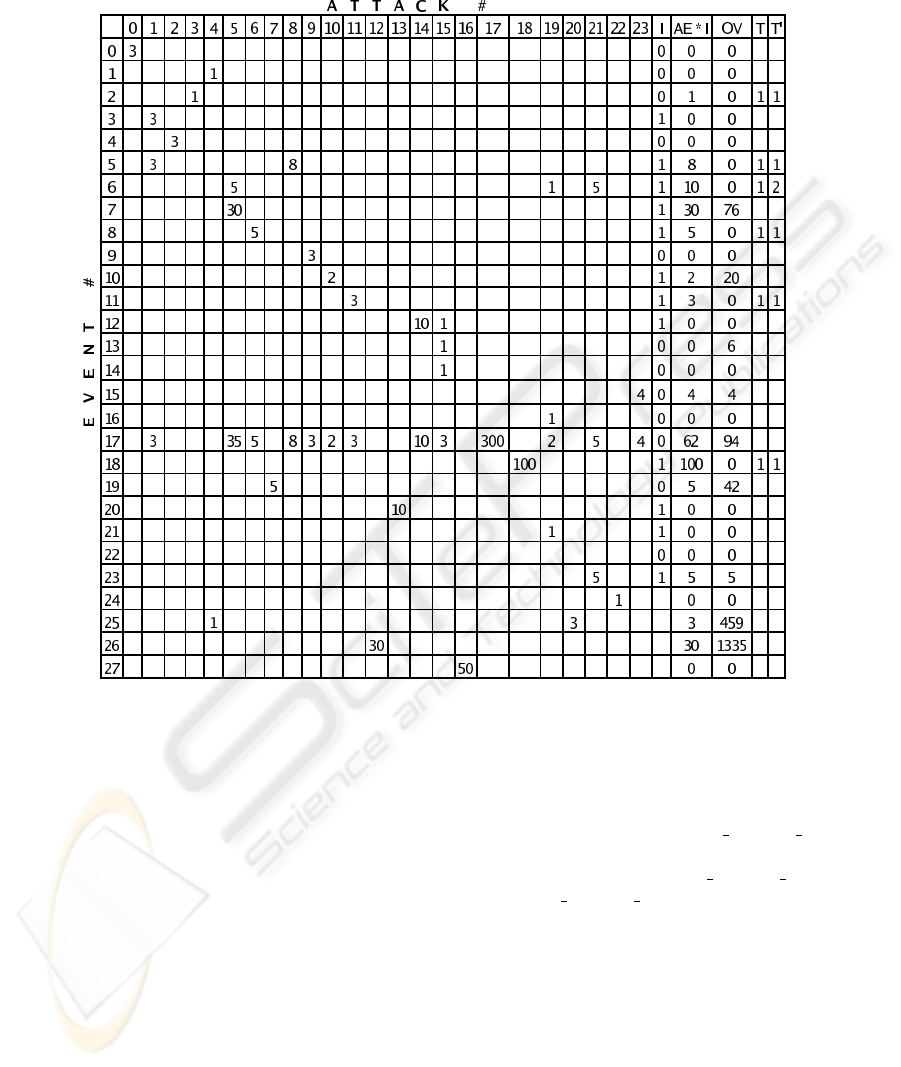

e, 1998). In Table 2, each row corre-

sponds to an event type. Each numeric column—i.e.,

0 through 23—corresponds to a known attack; col-

umn I corresponds to a hypothesis that is being eval-

uated, column AE ∗ I corresponds to the multiplica-

tion of matrix AE with hypothesis vector I, column

OV corresponds to the actual number of events that

occurred—this is the output of the scanner program,

and column entry i in T is set to 1 if (AE ·I)

i

> OV

i

,

i.e., if the number of hypothesized events of type i are

greater than the actual number of events of that type

registered in the audit trail file. Column T

′

shows

the number of of hypothesized attacks that require

more events of type i than actually occurred (see Sec-

tion 5.2.3).

The Observed Vector contains the number of sys-

tem events performed by a user in a session. As

shown in Table 3, the first row is the type of event

and the second row is the number of events of that

type.

The first position of row 2 shows 1, which means

that there was a 1 event of type User

login fail,

the eighth position shows 40, which means that there

were 40 events of type ls fail. Other event types

are listed elsewhere (M

´

e, 1998).

5.2.2 The Genetic Algorithm

The analysis engine is a Genetic Algorithm that works

as follows:

Generation of the First Population

Do

IMPROVED OFF-LINE INTRUSION DETECTION USING A GENETIC ALGORITHM

69

Table 2: The Attack Events matrix AE, an example hypothesis Vector I, the resulting Multiplication Vector MV (= AE ∗ I),

an example Observed Vector OV , and the count of overestimates in columns T and T

′

Selection of Parents

Reproduction with crossover and mutation

Until an acceptable Solution is found or

resources are exhausted

An individual (I) in the population is an array of 24

positions. Each position corresponds to an attack that

is being hypothesized.

The First Population is generated randomly. The

algorithm is guessing the possible occurrence of at-

tacks. 40 individuals are generated.

Selection of Parents. Parents are selected according

to their fitness value. The fitness function guides the

algorithm to find the possible intrusions.

Crossover and Mutation. Parents that are chosen

are crossed with a probability equal to 60% and mu-

tated with a probability of 2.4%. Mitchell (1998) sug-

gests values like 70% for crossover and 0.1% for mu-

tation as commonly appropriate.

Number of generations. The number of genera-

tions for each test was variable. At the very be-

ginning of our experiments, for example, we keep

track of the fitness values and stop after 500,000

generations; we found, then, the greatest fitness

value of all the generations and used it with a

threshold equal to STDV(Max

fitness value)/4,

so the algorithm begins to run, and if the fit-

ness value goes less than Max fitness v alue)/4 −

STDV(Max

fitness value)/4 the algorithm stops.

The Attack-Event matrix, which gives the number

of events by attack, is multiplied by the individual

I that hypothesized the occurrence of attacks. The

result is the Multiplication Vector (MV) that gives

the classified total number of events hypothesized

(= AE ∗ I). This vector is evaluated using the Ob-

served Vector that records the number of events that

have occurred. Each position of the result vector MV

is compared with the corresponding position in OV .

If M V

i

, the hypothesized number of events of type

i, is less than OV

i

(the audit trail observed number

of events of type i), then the hypothesis is possible.

ICEIS 2005 - ARTIFICIAL INTELLIGENCE AND DECISION SUPPORT SYSTEMS

70

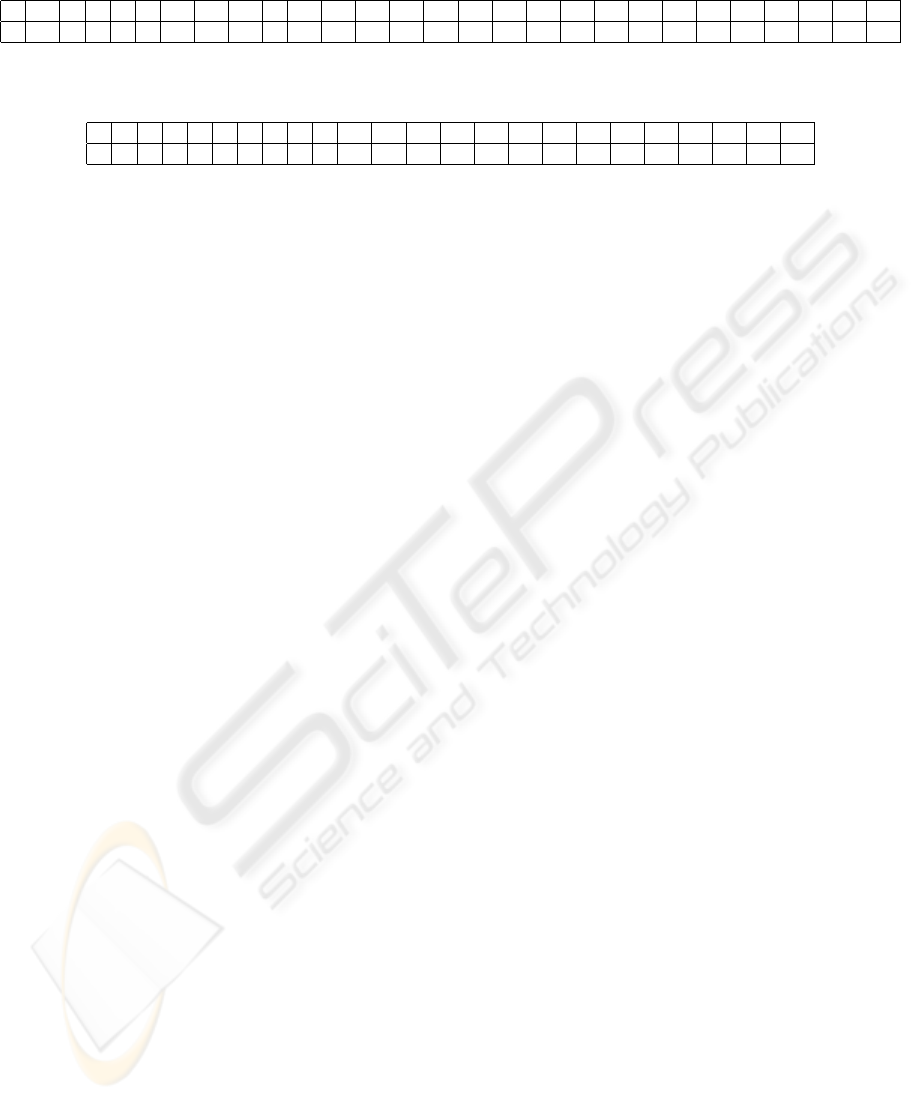

Table 3: An example of an Observed Vector

0 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27

1 12 6 0 4 9 11 40 34 9 5 45 0 0 2 7 0 29 0 0 0 0 0 0 0 0 0 0

Table 4: An example of an Individual. Positions 4, 5, and 8 that have 1 are showing the possible occurrence of attacks 4, 5,

and 8

0 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23

0 0 0 0 1 1 0 0 1 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0

But if, on the contrary, M V

i

is greater than OV

i

, that

means that the algorithm hypothesized more intru-

sions than actually occurred, a situation that must be

take into account using a penalty factor in the fitness

function. The penalty term is then related with the

number of events in MV

i

that are greater than the ob-

served events in OV

i

.

5.2.3 An Improved Fitness Function

A genetic algorithm needs a fitness function that

combines objectives and constraints into a single

value (Coello, 1998). The problem is not only to find

the appropriate function but also to provide accurate

values to the parameters that produce the correct so-

lution to the problem for as many instances as pos-

sible. It appears that the fitness function proposed

in GASSATA, a combination of objectives and con-

straints into a single value using arithmetical opera-

tions, should be correct. However, it is difficult to set

the parameters so that the algorithm finds intrusions

and converges.

For this problem GASSATA uses a fitness function

that has principally two terms:

P

N

a

i=1

W

i

∗ I

i

that is

rewarding, and β ∗ T

2

that is penalizing.

As the fitness function is giving a payoff to the

highest valued individual, the term

P

N

a

i=1

W

i

∗ I

i

is

guiding the solution to have the maximum number of

intrusions. However, this is good enough until the

correct set of intrusions are found. Later on, i.e., if

more intrusions than that are hypothesized, the prob-

lem of false positives occurs. On the other hand, the

term β ∗ T

2

is diminishing the fitness but in doing

so various intrusions can hit the same event. When

this happens the counting of overestimates is wrong.

These two facts were tested and false positives and

false negatives were found. (Results of this testing

and an analysis of the reason for it will be published

elsewhere (Diaz-Gomez and Hougen, 2005). For the

present work, we concentrate on improvements to the

IDS).

The solution proposed has two parts:

1. remove the positive term

P

N

a

i=1

I

i

, and

2. count overestimates in the correct way; this means,

if two intrusions require the same event in numbers

in excess of the number of actual events then count

them both, and so forth. Call this T

′

.

With this in mind, the fitness function only has one

term, the penalty function and the new fitness function

suggested is

F (I) = N

e

− T

′

(2)

where N

e

corresponds to the total number of classi-

fied events. For testing this value is 28. T

′

corre-

sponds to the number of overestimates, i.e., the num-

ber of times (AE · I)

i

> OV

i

.

It must be taken into account that the role of α cor-

responds now to N

e

and that β is equal to one. How-

ever, the term

P

N

a

i=1

I

i

was suppressed, as stated be-

fore. It must be reinforced that the reason for doing

that is because the term

P

N

a

i=1

I

i

is giving the number

of intrusions hypothesized but those intrusions have

not been evaluated yet. In doing the evaluations, that

may produce an incorrect count of overestimates.

Now, the hypothesized vector I is really evaluated

in T

′

; the better the hypothesized vector, the smaller

T

′

is, and of course, F (I) → N

e

, the maximum. The

fitness function is evaluating only the T

′

term. There

is a maximum when T = 0.

In this study we divided the set of intrusions into

two subsets: mutually exclusive and not mutually ex-

clusive intrusions. We define mutually exclusive in-

trusions those that can not occur at the same time, de-

pending of the Observed Vector in the analysis, i.e.,

if in considering each intrusion alone T = 0 but in

considering them at the same time T 6= 0. That is the

case for all intrusions that share some event type; for

example, intrusions number 5, 19 and 21, in each of

which event number 6 is present (see Table 2).

As our genetic algorithm runs, it creates aggre-

gate solution sets of all possible compatible intrusions

found. To do this, it records all the realistic solutions

(those where T = 0) found in the search space and

keeps track of each intrusion it finds within each real-

istic solution. The algorithm then checks if the intru-

sion already exists in its current solution set and, if it

does not, then it checks if it is mutually exclusive or

not in order to add it to the corresponding aggregate

solution set. In this way, the algorithm builds up sets

of all compatible solutions. This mechanism can be

seen as a replacement for the positive term

P

N

a

i=1

I

i

IMPROVED OFF-LINE INTRUSION DETECTION USING A GENETIC ALGORITHM

71

which was misleading the genetic algorithm in GAS-

SATA.

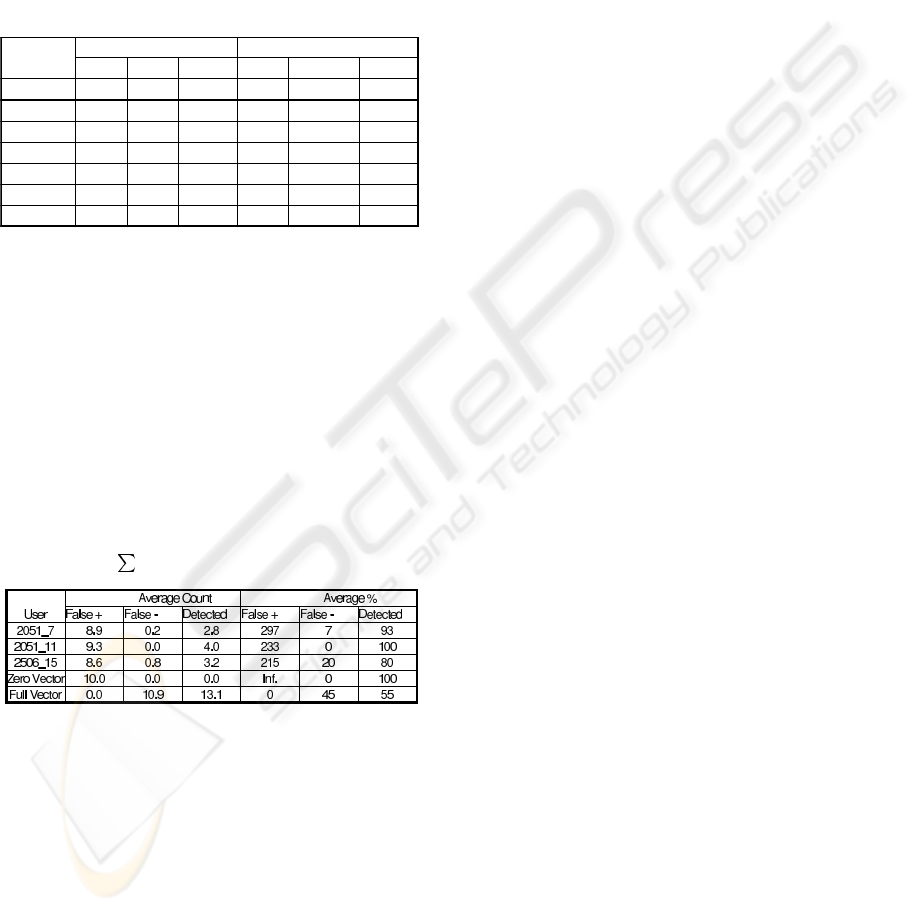

The results found with this fitness function are

shown in Table 5. The data corresponding to users

2051 and 2506 was extracted by our scanner from au-

dit data files downloaded from the Lincoln Laboratory

at MIT (Fried and Zissman, 1998).

Table 5: Results with fitness function F (I) = N

e

− T

averaged over 10 runs

Average Count Average %

User False + False - Detected False + False - Detected

2051_7 0 0 3 0 0 100

2051_11 0 0 4 0 0 100

2506_15 0 0 4 0 0 100

Zero Vector 0 0 0 0 0 100

0 0.1 0.9 0 10 90

0 0 2 0 0 100

0 0 3 0 0 100

One Intrus.

Two Intrus.

Three Intrus.

As can be seen, with the fitness function proposed

there are no false positives and the number of false

negatives decreases dramatically. This time 70 runs

were performed with different data and only one time

a false negative was present.

These results are significantly better than any of

those found with any of the parameters we tested for

the original GASSATA fitness function. For example,

setting α = N

2

e

/2,

P

N

a

i=1

W

i

> N

2

e

/2, and β = 1

resulted in the performance shown in Table 6.

Table 6: Results with Fitness Function as in Equation 1 us-

ing α = N

2

e

/2,

N

a

i=1

W

i

> N

2

e

/2, and β = 1

5.3 Improvements

As with many heuristic tools, we have difficulties in

the implementation of GASSATA. Some difficulties

arise in providing accurate values for the fitness pa-

rameters α, W , and β. In doing that we found a high

percentage of false positives, so this study made the

following improvements in order to:

• dismiss false positives and false negatives,

• find the maximum set of intrusions and disaggre-

gate them as mutually exclusive or not,

• record all events not considered in the intrusion

analysis.

As was explained in Section 5.2.3 the term

P

N

a

i=1

W

i

∗ I

i

proposed for the fitness function in

Equation 1 is guiding the algorithm to give false pos-

itives, so with the new fitness function proposed in

this research (see Equation 2), we have discarded

that term. Experimentally, as is shown in Table 5,

there were no false positives with our fitness function

F (I) = N

e

− T .

We also managed to all but eliminate false neg-

atives by building up aggregate solution sets of all

compatible intrusions found, as also explained in Sec-

tion 5.2.3.

Another improvement is related with the recording

of events not considered in the analysis. That is, the

scanner is looking for predefined events; if there are

events not defined, those are recorded, so the system

administrator can check them and evaluate if there are

critical events not considered and that can be included

in the scanner.

6 CONCLUSIONS AND FUTURE

WORK

This paper proposes a fitness function independent

of variable parameters, making the fitness function

to solve this particular problem quite general and in-

dependent of the audit trail data. This approach can

be generalized to similar multi-objective fitness func-

tions for genetic algorithms. At the same time the

system proposed improves the one suggested previ-

ously (M

´

e, 1998) by recording all the events not con-

sidered in the intrusion analysis, finding the maxi-

mum set of intrusions and disaggregating them as mu-

tually exclusive or not, and diminishing the false pos-

itives and false negatives.

One topic that can be addressed in future work

is to investigate system performance using different

crossover and mutation rates.

We also consider it important for the future of intru-

sion detection systems to consider the standardization

of audit trail files. Such a standard has the principal

benefit that it would enable logs generated by differ-

ent operating systems to be reconciled. With the au-

dit trail standardized, the analysis of logs by a central

engine would be simpler, because that engine would

deal with only one file structure.

Another improvement of the specific intrusion sys-

tem developed is the use of real-time intrusion detec-

tion, because such a system can catch a range of intru-

sions like viruses, Trojan horses, and masquerading

before these attacks have time to do extensive dam-

age to a system.

ICEIS 2005 - ARTIFICIAL INTELLIGENCE AND DECISION SUPPORT SYSTEMS

72

This paper presented some of this new research in

intrusion detection, by using a GA as an analytical

engine that performs intrusion detection. However,

the field of IDSs is quite diverse and other approaches

such as immune systems and neural networks have

been developed in order to improve this mechanism.

The field is deep and there are promising new ways

to think about it. Evolutionary computation offers

a chance to see intrusion detection systems with the

ability to evolve—evolution that could sometimes ex-

ceed human programmers. There are new paradigms

to explore and we can use computers themselves as

the vehicle.

REFERENCES

Anderson, J. P. (1980). Computer security threat monitoring

and surveillance. Technical Report 79F296400, James

P. Anderson, Co., Fort Washington, PA.

Anonymous (2000). SunSHIELD basic security mod-

ule guide (Solaris 8). Technical Report 806-

1789-10, Sun Microsystems, Inc., Palo Alto, CA.

http://docs.sun.com/db/doc/806-1789, accessed July

2004.

Bace, R. G. (2000). Intrusion Detection. MacMillan Tech-

nical Publishing, USA.

Coello, C. A. C. (1998). A comprehensive survey

of evolutionary-based multiobjective optimization

techniques. Knowledge and Information Systems,

1(3):269–308.

Crosbie, M. and Spafford, G. (1995). Applying genetic pro-

gramming to intrusion detection. In Papers from the

1995 AAAI Fall Symposium, pages 1–8.

Denning, D. E. (1986). An intrusion-detection model. In

Proceedings of the 1986 IEEE Symposium on Security

and Privacy, pages 118–131.

Diaz-Gomez, P. A. and Hougen, D. F. (2005). Analysis of

an off-line intrusion detection system: A case study

in multi-objective genetic algorithms. In Proceedings

of the Florida Artificial Intelligence Research Society

Conference. AAAI Press.

Forrest, S., Perelson, A. S., Allen, L., and Cherukuri, R.

(1994). Self-nonself discrimination in a computer.

In Proceedings of the 1994 IEEE Symposium on Re-

search in Security and Privacy, pages 202–212, Oak-

land, CA. IEEE Computer Society Press.

Fried, D. and Zissman, M. (1998). Intrusion detec-

tion evaluation. Technical report, Lincoln Labora-

tory, MIT. http://www.ll.mit.edu/IST/ideval/, accessed

March 2004.

M

´

e, L. (1998). GASSATA, a genetic algorithm as an al-

ternative tool for security audit trail analysis. In First

International Workshop on the Recent Advances in In-

trusion Detection, Belgium.

Mitchell, M. (1998). An Introduction to Genetic Algo-

rithms. MIT Press.

Tjaden, B. C. (2004). Fundamentals of Secure Computer

Systems. Franklin and Beedle & Associates.

IMPROVED OFF-LINE INTRUSION DETECTION USING A GENETIC ALGORITHM

73